K8s Cluster Autoscaler

Chapter 3 - Introduction: Kubernetes Autoscaling

- Chapter 1: Vertical Pod Autoscaler (VPA)

- Chapter 2: Kubernetes HPA

- Chapter 3: K8s Cluster Autoscaler

- Chapter 4: K8s ResourceQuota Object

- Chapter 5: Kubernetes Taints & Tolerations

- Chapter 6: Guide to K8s Workloads

- Chapter 7: Kubernetes Service Load Balancer

- Chapter 8: Kubernetes Namespace

- Chapter 9: Kubernetes Affinity

- Chapter 10: Kubernetes Node Capacity

- Chapter 11: Kubernetes Service Discovery

- Chapter 12: Kubernetes Labels

Kubernetes Cluster Autoscaler is one of the most popular automation tools for Kubernetes hardware capacity management. It is supported by the major cloud platforms and can streamline the Kubernetes (K8s) cluster management process.

To help you get started with Kubernetes Cluster Autoscaler, this article explains how it manages capacity by automatically adding and removing worker nodes, covers its requirements, and walks through examples of using it in practice.

Cluster Autoscaling Overview

As new pods are deployed and the replica counts of existing workloads increase, cluster worker nodes can run out of allocatable resources. When that happens, no more pods can be scheduled on the existing workers. New pods go into a Pending state while they wait for CPU and memory, which can cause an outage. As a Kubernetes admin, you can solve this problem manually by adding worker nodes to the cluster so that the additional pods can be scheduled.

The problem is that this manual process is time-consuming and scales poorly. Kubernetes Cluster Autoscaler solves it by automating capacity management. Specifically, Cluster Autoscaler adds worker nodes to a K8s cluster when pods can't be scheduled and removes them when they are no longer needed.

Most cloud providers support Cluster Autoscaler, but it is generally not available for self-hosted on-prem K8s environments. The autoscaler adds and removes nodes by calling an infrastructure API that creates and deletes virtual machines. On-prem deployments usually lack such an API unless additional tooling provides one, which makes cluster autoscaling effectively a cloud-oriented feature.

Some managed platforms, such as GKE and AKS, ship Cluster Autoscaler as a built-in feature that you only need to enable. On others, such as Amazon EKS, the platform supports Cluster Autoscaler but doesn't install it, so you deploy it into the cluster manually.

Cluster Autoscaling Requirements and Supported Platforms

Support for Cluster Autoscaler is available on these Kubernetes platforms:

- Google Kubernetes Engine (GKE)

- Azure Kubernetes Service (AKS)

- Amazon Elastic Kubernetes Service (EKS)

as well as several other less popular K8s cloud platforms.

Each cloud provider has its own implementation of Cluster Autoscaler with different limitations.

You can enable Cluster Autoscaler during cluster creation using a platform-specific GUI or CLI. For example, on GKE the command below creates a multi-zone cluster with two nodes per zone. It also enables Cluster Autoscaler with a minimum of one node and a maximum of four nodes per zone:

gcloud container clusters create example-cluster \
    --num-nodes 2 \
    --zone us-central1-a \
    --node-locations us-central1-a,us-central1-b,us-central1-f \
    --enable-autoscaling --min-nodes 1 --max-nodes 4

How Cluster Autoscaling Works

The Kubernetes scheduler places pods on worker nodes according to the resource requests each pod declares, not the resources the pod actually uses. Cluster Autoscaler bases its decisions on the same requests. For Cluster Autoscaler to work as expected, and for applications to get the underlying host resources they need, resource requests (and, ideally, limits) must be defined on pods. Without resource requests, Cluster Autoscaler can't make accurate decisions.
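To make the role of requests concrete, here is a minimal sketch of a request-based fit check. It is the kind of check the scheduler runs and Cluster Autoscaler simulates. The sketch assumes CPU is the only resource, and the node names and millicore figures are hypothetical. Actual usage never enters the calculation.

```python
from dataclasses import dataclass


@dataclass
class Node:
    name: str
    allocatable_cpu_m: int  # CPU available to pods, in millicores
    requested_cpu_m: int    # sum of CPU requests of pods already placed here


def nodes_that_fit(nodes: list[Node], pod_request_m: int) -> list[str]:
    """Names of nodes whose unrequested CPU can hold the pod's CPU request.

    Only requests are compared; actual CPU usage is never consulted.
    """
    return [
        n.name
        for n in nodes
        if n.allocatable_cpu_m - n.requested_cpu_m >= pod_request_m
    ]


# Hypothetical nodes, each with less than 500m of CPU left unrequested.
nodes = [
    Node("node-a", allocatable_cpu_m=1000, requested_cpu_m=700),
    Node("node-b", allocatable_cpu_m=1000, requested_cpu_m=650),
    Node("node-c", allocatable_cpu_m=1000, requested_cpu_m=900),
]

print(nodes_that_fit(nodes, 500))  # [] -> the pod would stay Pending
print(nodes_that_fit(nodes, 0))    # ['node-a', 'node-b', 'node-c']
```

In this model, a pod with no request passes the check on every node. It therefore never looks unschedulable and never prompts a scale-up, no matter how much CPU it consumes once running.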

Cluster Autoscaler periodically checks the status of nodes and pods and acts on node utilization and pod scheduling status. When it detects pods that are pending because no node has room for them, it adds nodes until those pods are scheduled or the cluster reaches its maximum node count. When node utilization is low and the pods on a node can move to other nodes, it removes the extra node.
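The loop just described can be sketched as a single reconciliation pass. This is a deliberately simplified model, not the autoscaler's actual code. The function name reconcile and its inputs are hypothetical. The real autoscaler also simulates scheduling to work out how many nodes to add and checks that a removed node's pods can be rescheduled. The example calls use the limits from the walkthrough later in this article (minimum 1, maximum 5).

```python
def reconcile(
    current_nodes: int,
    min_nodes: int,
    max_nodes: int,
    nodes_needed: int,
    removable_nodes: int,
) -> int:
    """Target node count after one simplified autoscaler pass (hypothetical model).

    nodes_needed: extra nodes the pending pods would need (0 if none are pending).
    removable_nodes: underused nodes whose pods could move to other nodes.
    In this sketch, scale-down is only considered when no new node is needed.
    """
    if nodes_needed > 0:
        # Scale up, but never past the configured maximum.
        return min(current_nodes + nodes_needed, max_nodes)
    if removable_nodes > 0:
        # Scale down, but never below the configured minimum.
        return max(current_nodes - removable_nodes, min_nodes)
    return current_nodes


# Hypothetical passes with min 1 and max 5.
print(reconcile(3, 1, 5, nodes_needed=1, removable_nodes=0))  # 4
print(reconcile(5, 1, 5, nodes_needed=1, removable_nodes=0))  # 5: at max, the pod stays Pending
print(reconcile(5, 1, 5, nodes_needed=0, removable_nodes=2))  # 3
```

The min/max clamping is why pods can remain Pending even with autoscaling enabled: once the node group hits its maximum, the autoscaler has no room left to add capacity.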

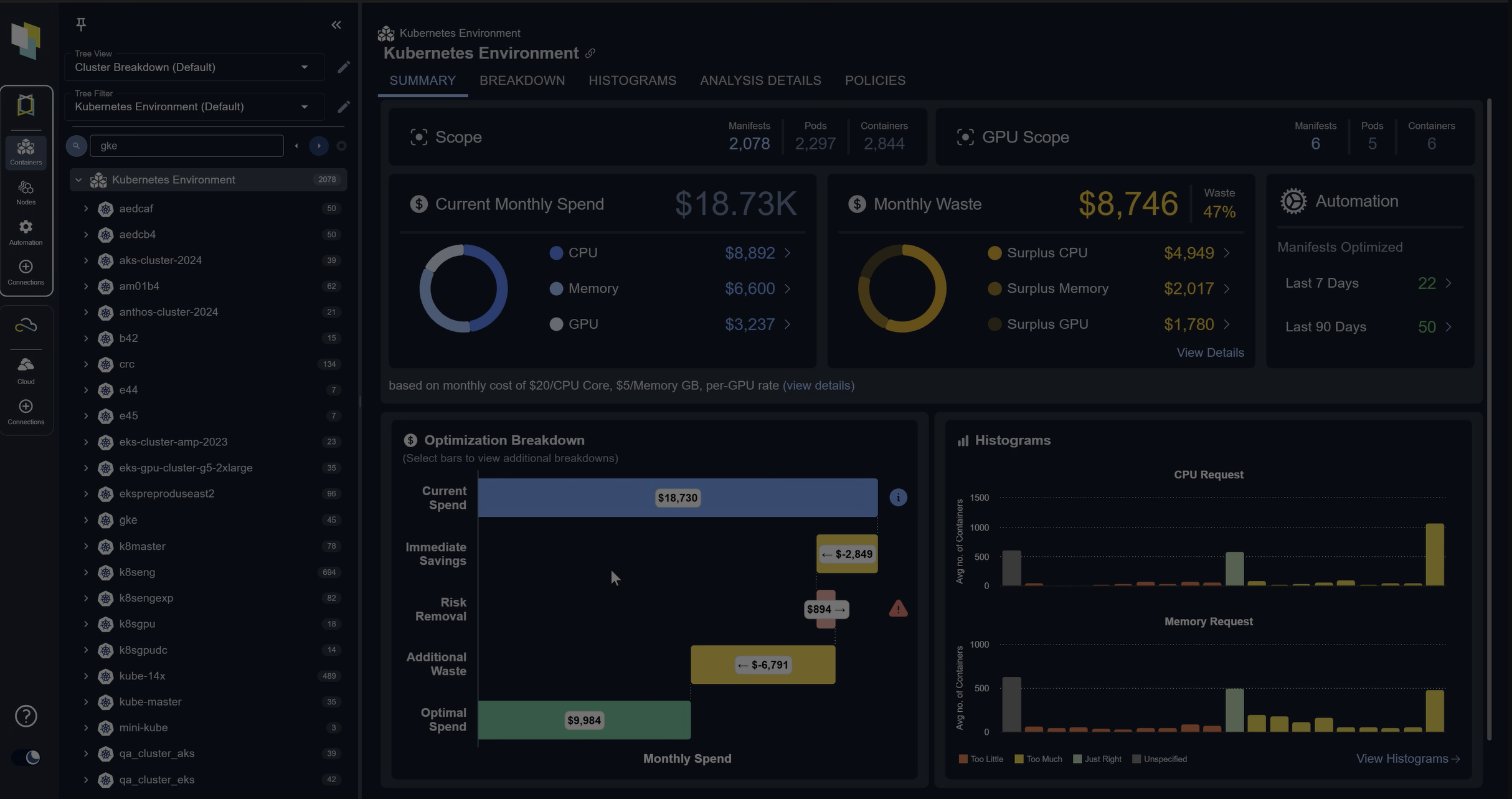

Spend less time optimizing Kubernetes Resources. Rely on AI-powered Kubex - an automated Kubernetes optimization platform

Free 30-day Trial

How to Use Cluster Autoscaler

Now that we understand how Cluster Autoscaler works, we can put it into practice. This section walks through deploying an example application, with resource requests and limits defined, to a Google Kubernetes Engine (GKE) cluster on Google Cloud Platform that has Cluster Autoscaler enabled.

We’ll scale the application by increasing its number of replicas until pods go pending and the autoscaler detects them. Then we’ll look at the autoscaler events as it adds a new node. Finally, we’ll scale the replicas back down so that the autoscaler removes the extra nodes.

To begin, create a demo cluster with three worker nodes:

student_02_c444e24e3915@cloudshell:~ (qwiklabs-gcp-03-a94f05d7b8a0)$ gcloud container clusters create scaling-demo --num-nodes=3

NAME LOCATION MASTER_VERSION MASTER_IP MACHINE_TYPE NODE_VERSION NUM_NODES STATUS

scaling-demo us-central1-a 1.20.8-gke.900 35.225.137.158 e2-medium 1.20.8-gke.900 3 RUNNING

Enable autoscaling on the cluster:

student_02_c444e24e3915@cloudshell:~ (qwiklabs-gcp-03-a94f05d7b8a0)$ gcloud beta container clusters update scaling-demo --enable-autoscaling --min-nodes 1 --max-nodes 5

Updating scaling-demo...done.

Updated [https://container.googleapis.com/v1beta1/projects/qwiklabs-gcp-03-a94f05d7b8a0/zones/us-central1-a/clusters/scaling-demo].

List the initial nodes:

student_02_c444e24e3915@cloudshell:~ (qwiklabs-gcp-03-a94f05d7b8a0)$ kubectl get nodes

NAME STATUS ROLES AGE VERSION

gke-scaling-demo-default-pool-b182e404-5l2v Ready <none> 6m v1.20.8-gke.900

gke-scaling-demo-default-pool-b182e404-87gq Ready <none> 6m v1.20.8-gke.900

gke-scaling-demo-default-pool-b182e404-kwfc Ready <none> 6m v1.20.8-gke.900

Create the example application, a Service and a Deployment, with resource requests and limits defined. This lets the Kubernetes scheduler place pods on nodes with the required capacity and lets Cluster Autoscaler add nodes when needed:

student_02_c444e24e3915@cloudshell:~ (qwiklabs-gcp-03-a94f05d7b8a0)$ cat deployment.yaml

apiVersion: v1
kind: Service
metadata:
  name: application-cpu
  labels:
    app: application-cpu
spec:
  type: ClusterIP
  selector:
    app: application-cpu
  ports:
  - protocol: TCP
    name: http
    port: 80
    targetPort: 80
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: application-cpu
  labels:
    app: application-cpu
spec:
  selector:
    matchLabels:
      app: application-cpu
  replicas: 1
  strategy:
    type: RollingUpdate
    rollingUpdate:
      maxSurge: 1
      maxUnavailable: 0
  template:
    metadata:
      labels:
        app: application-cpu
    spec:
      containers:
      - name: application-cpu
        image: aimvector/application-cpu:v1.0.2
        imagePullPolicy: Always
        ports:
        - containerPort: 80
        resources:
          requests:
            memory: "50Mi"
            cpu: "500m"
          limits:
            memory: "500Mi"
            cpu: "2000m"

student_02_c444e24e3915@cloudshell:~ (qwiklabs-gcp-03-a94f05d7b8a0)$ kubectl create -f deployment.yaml

service/application-cpu created

deployment.apps/application-cpu created
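The requests and limits in the manifest use Kubernetes resource quantity notation. CPU is given in millicores (500m is half a core), and memory uses binary suffixes (Mi is 2^20 bytes). The helpers below are a minimal sketch that handles only the suffixes this manifest uses. They are not a full parser for the Kubernetes quantity format, which also accepts decimal suffixes such as M and G.

```python
# Binary memory suffixes (powers of two); decimal suffixes such as "M" are not handled.
MEMORY_SUFFIXES = {"Ki": 2**10, "Mi": 2**20, "Gi": 2**30}


def cpu_cores(quantity: str) -> float:
    """Convert a CPU quantity such as '500m' (millicores) or '2' (cores) to cores."""
    if quantity.endswith("m"):
        return int(quantity[:-1]) / 1000
    return float(quantity)


def memory_bytes(quantity: str) -> int:
    """Convert a memory quantity such as '50Mi' to bytes."""
    for suffix, factor in MEMORY_SUFFIXES.items():
        if quantity.endswith(suffix):
            return int(quantity[: -len(suffix)]) * factor
    return int(quantity)  # no suffix: plain bytes


# Values from the manifest above.
print(cpu_cores("500m"), cpu_cores("2000m"))        # 0.5 2.0
print(memory_bytes("50Mi"), memory_bytes("500Mi"))  # 52428800 524288000
```

Each replica therefore asks for half a core and 50 MiB and may burst up to two cores and 500 MiB. Only the requests count when the scheduler and Cluster Autoscaler decide whether a replica fits on a node.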

The deployment is up, and it now has one running pod:

student_02_c444e24e3915@cloudshell:~ (qwiklabs-gcp-03-a94f05d7b8a0)$ kubectl get pods

NAME READY STATUS RESTARTS AGE

application-cpu-7879778795-8t8bn 1/1 Running 0 9s

Let’s scale out by setting the number of replicas to two:

student_02_c444e24e3915@cloudshell:~ (qwiklabs-gcp-03-a94f05d7b8a0)$ kubectl scale deploy/application-cpu --replicas 2

deployment.apps/application-cpu scaled

student_02_c444e24e3915@cloudshell:~ (qwiklabs-gcp-03-a94f05d7b8a0)$ kubectl get pods

NAME READY STATUS RESTARTS AGE

application-cpu-7879778795-8t8bn 1/1 Running 0 2m29s

application-cpu-7879778795-rzxc7 0/1 Pending 0 5s

The new pod is pending because none of the three nodes has enough unrequested CPU for its 500m request. Cluster Autoscaler now takes action: it detects the unschedulable pod, creates a new worker node, and adds it to the cluster.
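Deciding how many nodes to add is a packing problem: the autoscaler simulates placing the pending pods onto new nodes modeled on the node group. The sketch below approximates that with a first-fit-decreasing pass over CPU requests only. The function name and the 1000m of free CPU per new node are hypothetical. The real autoscaler also accounts for memory, system pods, and other scheduling constraints.

```python
def estimate_new_nodes(pending_requests_m: list[int], new_node_free_cpu_m: int) -> int:
    """First-fit-decreasing estimate of new nodes needed for pending pods (CPU only)."""
    free_on_new_nodes: list[int] = []  # unrequested CPU left on each simulated new node
    for request in sorted(pending_requests_m, reverse=True):
        if request > new_node_free_cpu_m:
            continue  # too big even for an empty node; adding nodes cannot help
        for i, free in enumerate(free_on_new_nodes):
            if free >= request:
                free_on_new_nodes[i] -= request
                break
        else:
            # No simulated node has room: open another one.
            free_on_new_nodes.append(new_node_free_cpu_m - request)
    return len(free_on_new_nodes)


# Hypothetical: each new node offers 1000m of CPU for application pods.
print(estimate_new_nodes([500], 1000))            # 1
print(estimate_new_nodes([500, 500, 500], 1000))  # 2
```

With one pending 500m pod, the estimate is a single new node. That is consistent with the 3->4 scale-up recorded in the events that follow.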

student_02_c444e24e3915@cloudshell:~ (qwiklabs-gcp-03-a94f05d7b8a0)$ kubectl get events

LAST SEEN TYPE REASON OBJECT MESSAGE

3m24s Normal Scheduled pod/application-cpu-7879778795-8t8bn Successfully assigned default/application-cpu-7879778795-8t8bn to gke-scaling-demo-default-pool-b182e404-87gq

3m23s Normal Pulling pod/application-cpu-7879778795-8t8bn Pulling image "aimvector/application-cpu:v1.0.2"

3m20s Normal Pulled pod/application-cpu-7879778795-8t8bn Successfully pulled image "aimvector/application-cpu:v1.0.2" in 3.035424763s

3m20s Normal Created pod/application-cpu-7879778795-8t8bn Created container application-cpu

3m20s Normal Started pod/application-cpu-7879778795-8t8bn Started container application-cpu

60s Warning FailedScheduling pod/application-cpu-7879778795-rzxc7 0/3 nodes are available: 3 Insufficient cpu.

56s Normal TriggeredScaleUp pod/application-cpu-7879778795-rzxc7 pod triggered scale-up: [{https://www.googleapis.com/compute/v1/projects/qwiklabs-gcp-03-a94f05d7b8a0/zones/us-central1-a/instanceGroups/gke-scaling-demo-default-pool-b182e404-grp 3->4 (max: 5)}]

2s Warning FailedScheduling pod/application-cpu-7879778795-rzxc7 0/4 nodes are available: 1 node(s) had taint {node.kubernetes.io/not-ready: }, that the pod didn't tolerate, 3 Insufficient cpu.

3m24s Normal SuccessfulCreate replicaset/application-cpu-7879778795 Created pod: application-cpu-7879778795-8t8bn

60s Normal SuccessfulCreate replicaset/application-cpu-7879778795 Created pod: application-cpu-7879778795-rzxc7

3m24s Normal ScalingReplicaSet deployment/application-cpu Scaled up replica set application-cpu-7879778795 to 1

60s Normal ScalingReplicaSet deployment/application-cpu Scaled up replica set application-cpu-7879778795 to 2

5m50s Normal RegisteredNode node/gke-scaling-demo-default-pool-b182e404-5l2v Node gke-scaling-demo-default-pool-b182e404-5l2v event: Registered Node gke-scaling-demo-default-pool-b182e404-5l2v in Controller

5m50s Normal RegisteredNode node/gke-scaling-demo-default-pool-b182e404-87gq Node gke-scaling-demo-default-pool-b182e404-87gq event: Registered Node gke-scaling-demo-default-pool-b182e404-87gq in Controller

13s Normal Starting node/gke-scaling-demo-default-pool-b182e404-ccft Starting kubelet.

13s Warning InvalidDiskCapacity node/gke-scaling-demo-default-pool-b182e404-ccft invalid capacity 0 on image filesystem

12s Normal NodeHasSufficientMemory node/gke-scaling-demo-default-pool-b182e404-ccft Node gke-scaling-demo-default-pool-b182e404-ccft status is now: NodeHasSufficientMemory

12s Normal NodeHasNoDiskPressure node/gke-scaling-demo-default-pool-b182e404-ccft Node gke-scaling-demo-default-pool-b182e404-ccft status is now: NodeHasNoDiskPressure

12s Normal NodeHasSufficientPID node/gke-scaling-demo-default-pool-b182e404-ccft Node gke-scaling-demo-default-pool-b182e404-ccft status is now: NodeHasSufficientPID

12s Normal NodeAllocatableEnforced node/gke-scaling-demo-default-pool-b182e404-ccft Updated Node Allocatable limit across pods

10s Normal RegisteredNode node/gke-scaling-demo-default-pool-b182e404-ccft Node gke-scaling-demo-default-pool-b182e404-ccft event: Registered Node gke-scaling-demo-default-pool-b182e404-ccft in Controller

9s Normal Starting node/gke-scaling-demo-default-pool-b182e404-ccft Starting kube-proxy.

6s Warning ContainerdStart node/gke-scaling-demo-default-pool-b182e404-ccft Starting containerd container runtime...

6s Warning DockerStart node/gke-scaling-demo-default-pool-b182e404-ccft Starting Docker Application Container Engine...

6s Warning KubeletStart node/gke-scaling-demo-default-pool-b182e404-ccft Started Kubernetes kubelet.

6s Warning NodeSysctlChange node/gke-scaling-demo-default-pool-b182e404-ccft {"unmanaged": {"net.netfilter.nf_conntrack_buckets": "32768"}}

1s Normal NodeReady node/gke-scaling-demo-default-pool-b182e404-ccft Node gke-scaling-demo-default-pool-b182e404-ccft status is now: NodeReady

Let’s check whether Cluster Autoscaler added a new worker node:

student_02_c444e24e3915@cloudshell:~ (qwiklabs-gcp-03-a94f05d7b8a0)$ kubectl get nodes

NAME STATUS ROLES AGE VERSION

gke-scaling-demo-default-pool-b182e404-5l2v Ready <none> 13m v1.20.8-gke.900

gke-scaling-demo-default-pool-b182e404-87gq Ready <none> 13m v1.20.8-gke.900

gke-scaling-demo-default-pool-b182e404-ccft Ready <none> 83s v1.20.8-gke.900

gke-scaling-demo-default-pool-b182e404-kwfc Ready <none> 13m v1.20.8-gke.900

The pending pod is now scheduled and running:

student_02_c444e24e3915@cloudshell:~ (qwiklabs-gcp-03-a94f05d7b8a0)$ kubectl get pods

NAME READY STATUS RESTARTS AGE

application-cpu-7879778795-8t8bn 1/1 Running 0 4m45s

application-cpu-7879778795-rzxc7 1/1 Running 0 2m21s

Let’s scale up again by adding one more replica:

student_02_c444e24e3915@cloudshell:~ (qwiklabs-gcp-03-a94f05d7b8a0)$ kubectl scale deploy/application-cpu --replicas 3

deployment.apps/application-cpu scaled

Again, the new pod goes into the Pending state because of insufficient resources:

student_02_c444e24e3915@cloudshell:~ (qwiklabs-gcp-03-a94f05d7b8a0)$ kubectl get pods

NAME READY STATUS RESTARTS AGE

application-cpu-7879778795-56l6d 0/1 Pending 0 16s

application-cpu-7879778795-8t8bn 1/1 Running 0 5m22s

application-cpu-7879778795-rzxc7 1/1 Running 0 2m58s

The cluster events show the autoscaler creating another node in response.
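In the event listing below, the TriggeredScaleUp line is the autoscaler's own record of its decision. It names the instance group and shows the size change and ceiling, such as 4->5 (max: 5). If you collect events programmatically, a small regular expression can extract those numbers. The pattern and the parse_scale_up helper are hypothetical. They were fitted to the message text in this article's output, not to a documented or stable format.

```python
import re
from typing import Optional

# Pattern fitted to the GKE TriggeredScaleUp messages shown in this article;
# it is an assumption, not a documented or stable message format.
SCALE_UP = re.compile(
    r"instanceGroups/(?P<group>[\w-]+) (?P<old>\d+)->(?P<new>\d+) \(max: (?P<max>\d+)\)"
)


def parse_scale_up(message: str) -> Optional[dict]:
    """Extract the node group, old size, new size, and maximum from the message."""
    match = SCALE_UP.search(message)
    if match is None:
        return None
    new, maximum = int(match["new"]), int(match["max"])
    return {
        "group": match["group"],
        "from": int(match["old"]),
        "to": new,
        "max": maximum,
        "at_max": new >= maximum,  # the node group cannot grow any further
    }


message = (
    "pod triggered scale-up: [{https://www.googleapis.com/compute/v1/projects/"
    "qwiklabs-gcp-03-a94f05d7b8a0/zones/us-central1-a/instanceGroups/"
    "gke-scaling-demo-default-pool-b182e404-grp 4->5 (max: 5)}]"
)
print(parse_scale_up(message))
```

Here at_max is true: after this scale-up the node pool has reached the --max-nodes 5 limit set earlier. Any further pending pods would stay Pending.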

student_02_c444e24e3915@cloudshell:~ (qwiklabs-gcp-03-a94f05d7b8a0)$ kubectl get events

LAST SEEN TYPE REASON OBJECT MESSAGE

33s Warning FailedScheduling pod/application-cpu-7879778795-56l6d 0/4 nodes are available: 4 Insufficient cpu.

29s Normal TriggeredScaleUp pod/application-cpu-7879778795-56l6d pod triggered scale-up: [{https://www.googleapis.com/compute/v1/projects/qwiklabs-gcp-03-a94f05d7b8a0/zones/us-central1-a/instanceGroups/gke-scaling-demo-default-pool-b182e404-grp 4->5 (max: 5)}]

5m39s Normal Scheduled pod/application-cpu-7879778795-8t8bn Successfully assigned default/application-cpu-7879778795-8t8bn to gke-scaling-demo-default-pool-b182e404-87gq

5m38s Normal Pulling pod/application-cpu-7879778795-8t8bn Pulling image "aimvector/application-cpu:v1.0.2"

5m35s Normal Pulled pod/application-cpu-7879778795-8t8bn Successfully pulled image "aimvector/application-cpu:v1.0.2" in 3.035424763s

5m35s Normal Created pod/application-cpu-7879778795-8t8bn Created container application-cpu

5m35s Normal Started pod/application-cpu-7879778795-8t8bn Started container application-cpu

3m15s Warning FailedScheduling pod/application-cpu-7879778795-rzxc7 0/3 nodes are available: 3 Insufficient cpu.

3m11s Normal TriggeredScaleUp pod/application-cpu-7879778795-rzxc7 pod triggered scale-up: [{https://www.googleapis.com/compute/v1/projects/qwiklabs-gcp-03-a94f05d7b8a0/zones/us-central1-a/instanceGroups/gke-scaling-demo-default-pool-b182e404-grp 3->4 (max: 5)}]

2m17s Warning FailedScheduling pod/application-cpu-7879778795-rzxc7 0/4 nodes are available: 1 node(s) had taint {node.kubernetes.io/not-ready: }, that the pod didn't tolerate, 3 Insufficient cpu.

2m7s Normal Scheduled pod/application-cpu-7879778795-rzxc7 Successfully assigned default/application-cpu-7879778795-rzxc7 to gke-scaling-demo-default-pool-b182e404-ccft

2m6s Normal Pulling pod/application-cpu-7879778795-rzxc7 Pulling image "aimvector/application-cpu:v1.0.2"

2m5s Normal Pulled pod/application-cpu-7879778795-rzxc7 Successfully pulled image "aimvector/application-cpu:v1.0.2" in 1.493155878s

2m5s Normal Created pod/application-cpu-7879778795-rzxc7 Created container application-cpu

2m5s Normal Started pod/application-cpu-7879778795-rzxc7 Started container application-cpu

5m39s Normal SuccessfulCreate replicaset/application-cpu-7879778795 Created pod: application-cpu-7879778795-8t8bn

3m15s Normal SuccessfulCreate replicaset/application-cpu-7879778795 Created pod: application-cpu-7879778795-rzxc7

33s Normal SuccessfulCreate replicaset/application-cpu-7879778795 Created pod: application-cpu-7879778795-56l6d

5m39s Normal ScalingReplicaSet deployment/application-cpu Scaled up replica set application-cpu-7879778795 to 1

3m15s Normal ScalingReplicaSet deployment/application-cpu Scaled up replica set application-cpu-7879778795 to 2

33s Normal ScalingReplicaSet deployment/application-cpu Scaled up replica set application-cpu-7879778795 to 3

2m28s Normal Starting node/gke-scaling-demo-default-pool-b182e404-ccft Starting kubelet.

2m28s Warning InvalidDiskCapacity node/gke-scaling-demo-default-pool-b182e404-ccft invalid capacity 0 on image filesystem

2m27s Normal NodeHasSufficientMemory node/gke-scaling-demo-default-pool-b182e404-ccft Node gke-scaling-demo-default-pool-b182e404-ccft status is now: NodeHasSufficientMemory

2m27s Normal NodeHasNoDiskPressure node/gke-scaling-demo-default-pool-b182e404-ccft Node gke-scaling-demo-default-pool-b182e404-ccft status is now: NodeHasNoDiskPressure

2m27s Normal NodeHasSufficientPID node/gke-scaling-demo-default-pool-b182e404-ccft Node gke-scaling-demo-default-pool-b182e404-ccft status is now: NodeHasSufficientPID

2m27s Normal NodeAllocatableEnforced node/gke-scaling-demo-default-pool-b182e404-ccft Updated Node Allocatable limit across pods

2m25s Normal RegisteredNode node/gke-scaling-demo-default-pool-b182e404-ccft Node gke-scaling-demo-default-pool-b182e404-ccft event: Registered Node gke-scaling-demo-default-pool-b182e404-ccft in Controller

2m24s Normal Starting node/gke-scaling-demo-default-pool-b182e404-ccft Starting kube-proxy.

2m21s Warning ContainerdStart node/gke-scaling-demo-default-pool-b182e404-ccft Starting containerd container runtime...

2m21s Warning DockerStart node/gke-scaling-demo-default-pool-b182e404-ccft Starting Docker Application Container Engine...

2m21s Warning KubeletStart node/gke-scaling-demo-default-pool-b182e404-ccft Started Kubernetes kubelet.

2m21s Warning NodeSysctlChange node/gke-scaling-demo-default-pool-b182e404-ccft {"unmanaged": {"net.netfilter.nf_conntrack_buckets": "32768"}}

2m16s Normal NodeReady node/gke-scaling-demo-default-pool-b182e404-ccft Node gke-scaling-demo-default-pool-b182e404-ccft status is now: NodeReady

Verify that Cluster Autoscaler created and added a new worker node in response to the pending pod:

student_02_c444e24e3915@cloudshell:~ (qwiklabs-gcp-03-a94f05d7b8a0)$ kubectl get nodes

NAME STATUS ROLES AGE VERSION

gke-scaling-demo-default-pool-b182e404-5l2v Ready <none> 14m v1.20.8-gke.900

gke-scaling-demo-default-pool-b182e404-7sg8 Ready <none> 2s v1.20.8-gke.900

gke-scaling-demo-default-pool-b182e404-87gq Ready <none> 14m v1.20.8-gke.900

gke-scaling-demo-default-pool-b182e404-ccft Ready <none> 2m43s v1.20.8-gke.900

gke-scaling-demo-default-pool-b182e404-kwfc Ready <none> 14m v1.20.8-gke.900

The new pod is now scheduled and running:

student_02_c444e24e3915@cloudshell:~ (qwiklabs-gcp-03-a94f05d7b8a0)$ kubectl get pods

NAME READY STATUS RESTARTS AGE

application-cpu-7879778795-56l6d 1/1 Running 0 82s

application-cpu-7879778795-8t8bn 1/1 Running 0 6m28s

application-cpu-7879778795-rzxc7 1/1 Running 0 4m4s

Now let’s scale the deployment back down to one replica. Scaling down removes the extra pods. This leaves the nodes added earlier underused and prompts Cluster Autoscaler to remove them.

student_02_c444e24e3915@cloudshell:~ (qwiklabs-gcp-03-a94f05d7b8a0)$ kubectl scale deploy/application-cpu --replicas 1

deployment.apps/application-cpu scaled

student_02_c444e24e3915@cloudshell:~ (qwiklabs-gcp-03-a94f05d7b8a0)$ kubectl get pods

NAME READY STATUS RESTARTS AGE

application-cpu-7879778795-8t8bn 1/1 Running 0 7m41s

List the nodes again. They haven’t been scaled down yet, because the autoscaler takes time to detect unneeded nodes:

student_02_c444e24e3915@cloudshell:~ (qwiklabs-gcp-03-a94f05d7b8a0)$ kubectl get nodes

NAME STATUS ROLES AGE VERSION

gke-scaling-demo-default-pool-b182e404-5l2v Ready <none> 25m v1.20.8-gke.900

gke-scaling-demo-default-pool-b182e404-7sg8 Ready <none> 10m v1.20.8-gke.900

gke-scaling-demo-default-pool-b182e404-87gq Ready <none> 25m v1.20.8-gke.900

gke-scaling-demo-default-pool-b182e404-ccft Ready <none> 13m v1.20.8-gke.900

gke-scaling-demo-default-pool-b182e404-kwfc Ready <none> 25m v1.20.8-gke.900

Let’s wait a few minutes (5–15 minutes) and then check the scale-down events related to node removal:

student_02_c444e24e3915@cloudshell:~ (qwiklabs-gcp-03-a94f05d7b8a0)$ kubectl get events

LAST SEEN TYPE REASON OBJECT MESSAGE

9m31s Normal ScaleDown node/gke-scaling-demo-default-pool-b182e404-7sg8 node removed by cluster autoscaler

8m53s Normal NodeNotReady node/gke-scaling-demo-default-pool-b182e404-7sg8 Node gke-scaling-demo-default-pool-b182e404-7sg8 status is now: NodeNotReady

7m44s Normal Deleting node gke-scaling-demo-default-pool-b182e404-7sg8 because it does not exist in the cloud provider node/gke-scaling-demo-default-pool-b182e404-7sg8 Node gke-scaling-demo-default-pool-b182e404-7sg8 event: DeletingNode

7m42s Normal RemovingNode node/gke-scaling-demo-default-pool-b182e404-7sg8 Node gke-scaling-demo-default-pool-b182e404-7sg8 event: Removing Node gke-scaling-demo-default-pool-b182e404-7sg8 from Controller

9m30s Normal ScaleDown node/gke-scaling-demo-default-pool-b182e404-ccft node removed by cluster autoscaler

8m42s Normal NodeNotReady node/gke-scaling-demo-default-pool-b182e404-ccft Node gke-scaling-demo-default-pool-b182e404-ccft status is now: NodeNotReady

7m38s Normal Deleting node gke-scaling-demo-default-pool-b182e404-ccft because it does not exist in the cloud provider node/gke-scaling-demo-default-pool-b182e404-ccft Node gke-scaling-demo-default-pool-b182e404-ccft event: DeletingNode

7m37s Normal RemovingNode node/gke-scaling-demo-default-pool-b182e404-ccft Node gke-scaling-demo-default-pool-b182e404-ccft event: Removing Node gke-scaling-demo-default-pool-b182e404-ccft from Controller

Check the number of nodes again:

student_02_c444e24e3915@cloudshell:~ (qwiklabs-gcp-03-a94f05d7b8a0)$ kubectl get nodes

NAME STATUS ROLES AGE VERSION

gke-scaling-demo-default-pool-b182e404-5l2v Ready <none> 34m v1.20.8-gke.900

gke-scaling-demo-default-pool-b182e404-87gq Ready <none> 34m v1.20.8-gke.900

gke-scaling-demo-default-pool-b182e404-kwfc Ready <none> 34m v1.20.8-gke.900

The number of nodes has scaled back down to the cluster’s initial three.
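Scale-down is deliberately slower than scale-up. In the open-source Cluster Autoscaler, a node becomes a removal candidate under two conditions. First, the requests of the pods on it must add up to less than a utilization threshold of its allocatable capacity (the --scale-down-utilization-threshold flag, 0.5 by default). Second, those pods must be able to run elsewhere. Even then, the node is removed only after staying unneeded for --scale-down-unneeded-time (10 minutes by default). GKE manages these settings itself, so treat the values as upstream defaults, not GKE's exact behavior. The sketch models the rule for a single node, assuming CPU requests only. The function name and inputs are hypothetical.

```python
from datetime import timedelta

# Upstream open-source Cluster Autoscaler defaults; GKE may behave differently.
UTILIZATION_THRESHOLD = 0.5            # --scale-down-utilization-threshold
UNNEEDED_TIME = timedelta(minutes=10)  # --scale-down-unneeded-time


def should_remove(
    requested_cpu_m: int,
    allocatable_cpu_m: int,
    pods_can_move: bool,
    unneeded_for: timedelta,
) -> bool:
    """Simplified, CPU-only scale-down check for one node (hypothetical helper)."""
    # Utilization is request-based: what pods asked for, not what they use.
    utilization = requested_cpu_m / allocatable_cpu_m
    underused = utilization < UTILIZATION_THRESHOLD
    return underused and pods_can_move and unneeded_for >= UNNEEDED_TIME


# Hypothetical node whose remaining pods request 100m of 1000m allocatable CPU.
print(should_remove(100, 1000, True, timedelta(minutes=3)))   # False: not unneeded long enough yet
print(should_remove(100, 1000, True, timedelta(minutes=12)))  # True
```

The unneeded-time window is one reason the extra nodes in the demo were still present several minutes after the deployment dropped to one replica.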

Cluster Autoscaler Limitations

Cluster Autoscaler is a helpful tool but has limitations. This section summarizes its main shortcomings and points to commercial tools that can help you overcome them.

- Cluster Autoscaler is not supported in on-premises environments unless an autoscaler integration is implemented for the on-premises infrastructure.

- Scaling up is not immediate, so a pod can stay pending for a few minutes until a new worker node is added and ready.

- Some nodes run pods with other dependencies, such as pods bound to local volumes. As a result, a node may be a candidate for removal yet can’t be removed by Cluster Autoscaler.

- Cluster Autoscaler works from resource requests, not actual usage. This can lead to misallocated nodes if resource requests and limits are not properly calculated and set. The issue is critical because you can waste resources in your cluster under the false impression that autoscaling is keeping it efficient. Supplemental tools can help analyze effective efficiency throughout the cluster (container, pod, namespace, node) to avoid pockets of waste due to misconfiguration.

- Cluster Autoscaler adds nodes, but administrators are responsible for choosing the right size for each node. The same commercial tools can help optimize node size while also identifying cluster capacity wasted on unused resource requests.
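To see why request-based scaling can hide waste, compare what pods request with what they actually use. The numbers below are made up for illustration: three replicas that each request 500m of CPU, like the demo deployment, but use only around 100m. The PodStats type and the helper are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PodStats:
    name: str
    requested_cpu_m: int  # what the scheduler and Cluster Autoscaler see
    used_cpu_m: int       # what the container actually consumes (hypothetical figures)


def idle_requested_cpu_m(pods: list[PodStats]) -> int:
    """CPU reserved by requests but not used, summed over all pods."""
    return sum(max(p.requested_cpu_m - p.used_cpu_m, 0) for p in pods)


pods = [
    PodStats("replica-1", 500, 100),
    PodStats("replica-2", 500, 120),
    PodStats("replica-3", 500, 80),
]

requested = sum(p.requested_cpu_m for p in pods)
used = sum(p.used_cpu_m for p in pods)
print(f"requested={requested}m used={used}m idle={idle_requested_cpu_m(pods)}m")
# requested=1500m used=300m idle=1200m
```

Cluster Autoscaler provisions nodes for the full 1500m, so in this hypothetical case four-fifths of the CPU it adds sits idle. Right-sizing the requests, not tuning the autoscaler, is what removes that waste.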

These limitations will impact most K8s deployments, especially at scale. You can learn more about container infrastructure optimization, designed to enable intelligent scaling for complex environments.

FAQs

What is Kubernetes Cluster Autoscaler?

Kubernetes Cluster Autoscaler automatically adds or removes worker nodes in a cluster based on pod scheduling needs and node utilization. It prevents pods from being stuck in a pending state when resources are insufficient.

How does Kubernetes Cluster Autoscaler work?

It monitors resource requests and pending pods. If pods can’t be scheduled due to lack of capacity, it provisions new nodes. If nodes are underutilized and workloads can be moved, it removes extra nodes.

Which platforms support Kubernetes Cluster Autoscaler?

Cluster Autoscaler is supported on major cloud platforms including Google Kubernetes Engine (GKE), Amazon EKS, and Azure AKS. It is not supported in self-hosted on-prem environments without additional tooling.

What are the limitations of Kubernetes Cluster Autoscaler?

Limitations include slow scaling (pods may stay pending for minutes), reliance on resource requests instead of actual usage, inability to handle some node dependencies, and lack of support for on-prem clusters by default.

What are best practices for using Kubernetes Cluster Autoscaler?

Best practices include defining accurate resource requests and limits for pods, right-sizing nodes, testing scaling thresholds, and complementing autoscaling with monitoring or optimization tools to avoid inefficiencies.
