EKS vs GKE vs AKS: An In-Depth Comparison

Comparing Three Hosted Kubernetes Platforms

Google’s original brainchild, Kubernetes, has become indispensable across the tech landscape. According to a 2020 Cloud Native Computing Foundation (CNCF) survey, 78% of container users use Kubernetes. That explosion of popularity has birthed over 109 external tools to date. Keeping up with all of the latest tools is not only a challenge, it’s also incredibly time consuming. That’s why, in this article, we’ll help you answer an important question for those just getting started with hosted Kubernetes: which hosting provider should you use, and why?

If you already have some context for EKS, GKE and AKS from Amazon, Google, and Azure cloud services, feel free to jump to Which Hosting Provider Is Right for Me? to review a series of comparison tables.

The Case for Vendor Hosting

Provided you can foot the bill, external hosting can jumpstart your Kubernetes deployment. Depending on the type of hosted service you choose, your team won’t have to worry about the following:

- Manually provisioning the control plane components such as etcd and kube-controller-manager

- Implementing role-based access control to secure your separate environments

- Manually adding nodes to expand your cluster

- Manually configuring the pods that support your containers

- Instrumenting monitoring and logging for your cluster

While Kubernetes is fairly intuitive and powerful, its functionality has an intangible cost: a learning curve. You’ll need to spend many hours learning Kubernetes and its mechanisms.

Hosting providers remove some of this burden from your shoulders, as autoscaling, security updates, infrastructure monitoring, and maintenance come standard.

Now let’s evaluate the three leading Kubernetes vendors.

EKS vs GKE vs AKS – Which Hosting Provider Is Right for Me?

Deploying Kubernetes via hosting providers can save plenty of time and energy. Your decision will depend on a number of factors, such as familiarity, technology stack preferences, cost, and infrastructure priorities. We’ve put together the following tables to help you decide what’s best for your cloud computing needs.

Following the tabular comparisons, we provide a separate review for each hosting provider.

| Metrics | Kubernetes | Amazon EKS | Microsoft AKS | Google GKE |

|---|---|---|---|---|

| Supported Versions | 1.20, 1.19, 1.18 | 1.18 (default), 1.17, 1.16, 1.15 | 1.20 (preview), 1.19, 1.18, 1.17 | 1.17, 1.16, 1.15 (default), 1.14 |

| Number of supported minor version releases | 3 | ≥3 + 1 deprecated | 3 | 4 |

| Original Release Date | July 2015 (1.0) | June 2018 | June 2018 | August 2015 |

| CNCF Kubernetes Conformance | – | Yes | Yes | Yes |

| Latest CNCF-certified version | – | 1.18 | 1.19 | 1.18 |

| Control-plane upgrade process | – | User initiated; user must also manually update system services running on nodes (e.g., kube-proxy, coredns, AWS VPC CNI) | User initiated; system components update with cluster upgrades | Upgrades automatically by default; can be user-initiated |

| Node upgrade process | – | Unmanaged node groups: user-initiated and managed. Managed node groups: user-initiated; EKS drains and replaces nodes | Automatically upgraded or user-initiated; AKS drains and replaces nodes | Upgrades automatically by default during cluster maintenance window; can be user-initiated. GKE drains and replaces nodes |

| Node OS | Linux: Any OS supported by a compatible container runtime, Windows: Windows Server 2019 (Kubernetes v1.14+) | Linux: Amazon Linux 2 (default), Ubuntu (partner AMI), Bottlerocket. Windows: Windows Server 2019 | Linux: Ubuntu, Windows: Windows Server 2019 | Linux: Container-Optimized OS (COS) (default), Ubuntu, Windows: Windows Server 2019, Windows Server version 1909 |

| Container runtime | Linux: Docker, Containerd, Cri-o, rktlet, any runtime that implements the Kubernetes CRI (Container Runtime Interface) Windows: Docker EE-basic 18.09 | Docker (default), containerd (through Bottlerocket) | Docker (default), containerd | Docker (default), containerd, gVisor |

| Control plane high availability options | Supported | Control plane is deployed across multiple Availability Zones (default) | Control plane components will be spread between the number of zones defined by the admin | Zonal Clusters: Single Control Plane, Regional Clusters: Three Kubernetes control planes quorum. |

| Control plane SLA | – | 99.95% (default) | 99.95% (SLA backed), 99.9% (non-SLA backed) | Zonal clusters: 99.5%, Regional clusters: 99.95% |

| SLA financially-backed | – | Yes | Yes | Yes |

| Pricing | – | $0.10/hour (USD) per cluster + standard costs of EC2 instances and other resources | Pay-as-you-go: Standard costs of node VMs and other resources | $0.10/hour (USD) per cluster + standard costs of GCE machines and other resources |

| GPU support | Supported with device plugins | Yes (NVIDIA); user must install device plugin in cluster | Yes (NVIDIA); user must install device plugin in cluster | Yes (NVIDIA); user must install device plugin in cluster. Compute Engine A2 VMs are also available |

| Control plane: log collection | – | Optional (off by default); logs are sent to AWS CloudWatch | Optional (off by default); logs are sent to Azure Monitor | Optional (off by default); logs are sent to Stackdriver |

| Container performance metrics | – | Optional (off by default); metrics are sent to AWS CloudWatch Container Insights | Optional (off by default); metrics are sent to Azure Monitor | Optional (off by default); metrics are sent to Stackdriver |

| Node health monitoring | – | No Kubernetes-aware support; failing nodes are replaced by ASG | Supports node auto-repair and node status monitoring. Use autoscaling rules to shift workloads | Supports node auto-repair by default |

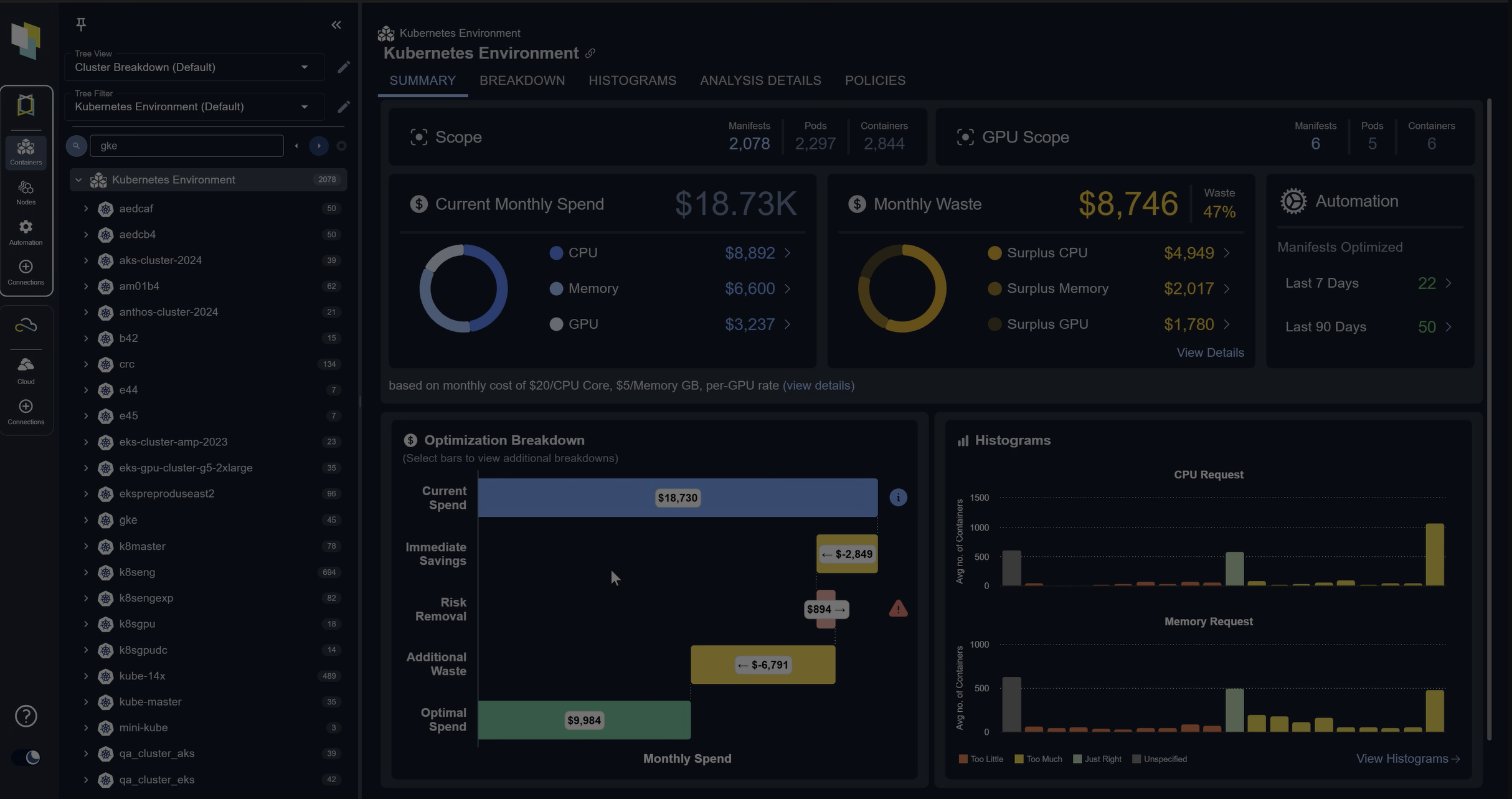

| Metrics | Kubernetes | Amazon EKS | Microsoft AKS | Google GKE |

|---|---|---|---|---|

| Image repository service | – | ECR (Elastic Container Registry) | ACR (Azure Container Registry) | AR (Artifact Registry) |

| Supported formats | – | Docker Image Manifest V2, Schema 1, Docker Image Manifest V2, Schema 2, Open Container Initiative (OCI) Specifications, Helm Charts | Docker Image Manifest V2, Schema 1, Docker Image Manifest V2, Schema 2, Open Container Initiative (OCI) Specifications, Helm Charts | Docker Image Manifest V2, Schema 1, Docker Image Manifest V2, Schema 2, Open Container Initiative (OCI) Specifications, Maven and Gradle, npm |

| Access security | – | Permissions managed by AWS IAM; permissions can be applied at repository level. Network endpoint is public by default; Network endpoint can be limited to specific VPCs | Permissions managed by Azure RBAC; can be applied at repository level. Network endpoint is public by default; Network endpoint can be limited to specific Vnets | Permissions managed by GCP IAM; permissions can only be applied at registry level. Network endpoint is public by default; network access for GCR registries can be limited to specific VPCs with service perimeters |

| Supports image signing | – | No | Yes | Yes, with Binary Authorization and Voucher |

| Supports immutable image tags | – | Yes | Yes, and it supports the locking of images and repositories | No |

| Image scanning service | – | Yes, free service: OS packages only | Yes, paid service: uses Qualys scanner in sandbox to check for vulnerabilities | Yes, paid Service: OS packages only |

| Registry SLA | – | 99.9%; financially-backed | 99.9%; financially-backed | None |

| Geo-Redundancy | – | Yes, configurable | Yes, configurable as part of the premium service | Yes: by default |

| Metrics | Kubernetes | Amazon EKS | Microsoft AKS | Google GKE |

|---|---|---|---|---|

| Network plugin/CNI | kubenet (default); external CNIs can be added | Amazon VPC Container Network Interface (CNI) | Azure CNI or kubenet | kubenet (default), Calico (added for Network Policies) |

| Kubernetes RBAC | Supported since 2017 | Required; immutable after cluster creation | Enabled by default; immutable after cluster creation | Enabled by default; mutable after cluster creation |

| Kubernetes Network Policy | Not enabled by default; a CNI implementing the Network Policy API can be installed manually | Not enabled by default; Calico can be installed manually | Not enabled by default; must be enabled at cluster creation time | Not enabled by default; can be enabled at any time |

| PodSecurityPolicy support | PSP admission controller needs to be enabled as kube-apiserver flag. Set to be deprecated in version 1.21 | PSP controller installed in all clusters with permissive default policy (v1.13+) | PSP can be installed at any time. Will be deprecated on May 31st 2021 in favor of Azure Policy | PSP can be installed at any time; currently in Beta |

| Private or public IP address for cluster Kubernetes API | – | Public by default; optional public, hybrid, or private setup | Public by default; private-only available where Private Link is supported | Public by default; optional public, hybrid, or private setup |

| Private or Public IP addresses for nodes | – | Unmanaged node groups: Optional; managed node groups: Optional | Public by default; private can be enabled as well | Public by default; private can be enabled as well |

| Pod-to-pod traffic encryption supported by provider | Requires a CNI implementation with functionality | No by default | No by default | Yes, with Istio implemented |

| Firewall for cluster Kubernetes API | – | CIDR allow list option | CIDR allow list option | CIDR allow list option |

| Read-only root filesystem on node | Supported | Pod security policy required | Azure policy required | COS: default. Alternative: pod security policy required |

| Metrics | Kubernetes | Amazon EKS | Microsoft AKS | Google GKE |

|---|---|---|---|---|

| Max clusters | – | 100/region* | 1000 | 50/zone + 50 regional clusters |

| Max nodes/cluster | 5000 | 30 (Managed node groups) * 100 (Max nodes per group) = 3000* | 1000 (Virtual Machine Scale Sets), 100 (VM Availability Sets) | 15,000 nodes (v1.18 required), 5,000 nodes (v1.17 or lower) |

| Max nodes/pool, group | – | Managed node groups: 100* | 100 | 1000 |

| Max pools,groups/cluster | – | Managed node groups: 30* | 100 nodes/pool | Not documented |

| Max pods/node | 100 (configurable) | Linux: Varies by node instance type: ((# of IPs per Elastic Network Interface – 1) * Number of ENIs) + 2, Windows: Number of IPs per ENI – 1 | 250 (Azure CNI, max, configured at cluster creation time), 110 (kubenet network), 30 (Azure CNI, default) | 110 (default) |
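
The EKS max-pods formula in the table above is easy to check by hand or in code. The following is a minimal sketch, not an official AWS tool: the function names are made up for illustration, and the ENI and IP counts in the usage lines are example inputs that you should replace with the documented values for your instance type.

```python
def eks_max_pods_linux(enis: int, ips_per_eni: int) -> int:
    """Max pods on a Linux node, following the formula quoted in the table:
    ((IPs per ENI - 1) * number of ENIs) + 2
    """
    return (ips_per_eni - 1) * enis + 2


def eks_max_pods_windows(ips_per_eni: int) -> int:
    """Max pods on a Windows node, per the table: IPs per ENI - 1."""
    return ips_per_eni - 1


if __name__ == "__main__":
    # Assumed example inputs: 3 ENIs with 10 IP addresses each.
    print(eks_max_pods_linux(enis=3, ips_per_eni=10))   # (10 - 1) * 3 + 2 = 29
    print(eks_max_pods_windows(ips_per_eni=10))         # 10 - 1 = 9
```

Because the limit depends on the instance type rather than on a single cluster-wide setting, it is worth running this arithmetic before choosing node sizes for pod-dense workloads.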

| Metrics | Kubernetes | Microsoft AKS | Amazon EKS | Google GKE |

|---|---|---|---|---|

| Bare Metal Nodes | – | No | Yes | No |

| Max Nodes per Cluster | – | 1,000 | 1,000 | 5,000 |

| High Availability Cluster | – | No | Yes for control plane, manual across AZ for workers | Yes via regional cluster, master and worker are replicated |

| Auto Scaling | – | Yes via cluster autoscaler | Yes via cluster autoscaler | Yes via cluster autoscaler |

| Vertical Pod Autoscaler | – | No | Yes | Yes |

| Node Pools | – | Yes | Yes | Yes |

| GPU Nodes | – | Yes | Yes | Yes |

| On-prem | – | Available via Azure ARC (beta) | No | GKE on-prem via Anthos GKE |

Google Kubernetes Engine (GKE)

Google first released Kubernetes before handing the Cloud Native Computing Foundation (CNCF) the reins in 2015. GKE is also the oldest service on our list. It’s little surprise that Google thus provides such robust hosting functionality—buoyed by its focus on scaling and templating.

GKE Compatibility

Google Kubernetes Engine supports the following K8s versions:

- 1.16

- 1.17 (stable channel)

- 1.18 (regular and rapid channels)

- 1.19 (rapid channel)

Google regularly releases version updates, with three January releases (2021-R1, R2, R3) having hit servers by the time of writing. GKE allows teams to be conservative, on the bleeding edge, or somewhere in between—based on risk tolerance and stability requirements.

Over a dozen total variants of each minor version are available, making GKE unparalleled in terms of flexibility. Google recommends that you enable auto-upgrade for clusters and nodes. However, you’re free to manually select a Kubernetes version for your clusters.

Similarly, OS support is as follows:

- Container-Optimized OS (COS). Default, Google-maintained Linux variant that supports Docker containers

- Ubuntu

- Windows Server 2019

- Windows Server 1909

Docker is the default container runtime for Google Kubernetes Engine. However, both containerd and gVisor (Google’s sandboxed container runtime) are supported. This makes GKE the most inclusive service for container runtimes, over options like Amazon Elastic Kubernetes Service (EKS) and Azure Kubernetes Service (AKS).

GKE Feature Highlights

Even simple applications can be tricky to deploy in Kubernetes. GKE circumvents this traditional challenge via templating—providing “single-click clusters” and open-source applications via Google Cloud Marketplace. These provide necessary licensing and portability out of the box, for easier ramp up.

Because these pre-made applications are Google-built, they’re thoroughly tested and trustworthy for commercial use. They may be stateful (requiring storage for client data retention), or serverless (via Google Cloud Run). Additionally, an integrated CI/CD pipeline allows for swift application development while following best practices. These processes promote testing and security galvanization.

Let’s talk autoscaling. Not every vendor does this well, but GKE’s approach is both horizontal and vertical. Horizontal entails pod scaling via CPU utilization. If pods are straining available compute resources, GKE will kill those pods (or replace them) to reclaim performance. How many containers run per pod (or group of pods) will influence this behavior. This is because pods don’t self-heal, or survive, when resources become scarce.
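
To make CPU-based horizontal scaling concrete, here is a minimal sketch of the replica calculation that upstream Kubernetes documents for the Horizontal Pod Autoscaler: desired replicas = ceil(current replicas × current metric / target metric). The function name and the sample numbers are illustrative assumptions, and the sketch leaves out details such as the tolerance band and stabilization windows that a real autoscaler applies.

```python
import math


def desired_replicas(current_replicas: int, current_cpu_pct: float, target_cpu_pct: float) -> int:
    """Replica count suggested by the documented HPA ratio rule (simplified)."""
    if target_cpu_pct <= 0:
        raise ValueError("target_cpu_pct must be positive")
    ratio = current_cpu_pct / target_cpu_pct
    # Round up so the workload is never left under-provisioned; keep at least one pod.
    return max(1, math.ceil(current_replicas * ratio))


if __name__ == "__main__":
    # Assumed example: 4 pods averaging 90% CPU against a 60% target -> scale out to 6.
    print(desired_replicas(4, 90, 60))
    # Assumed example: 3 pods averaging 30% CPU against a 60% target -> scale in to 2.
    print(desired_replicas(3, 30, 60))
```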

Vertically, GKE monitors pod CPU and memory usage while dynamically making resource requests. This prevents applications from seizing or causing problems. Node pools and clusters amongst them are scaled as workloads change.

Lastly, Google Kubernetes Engine offers a variety of zonal clusters and regional clusters. A Kubernetes control plane oversees both single-zone and multi-zone clusters—though the latter employs a replica running in a single zone, alongside nodes spread across zones.

Regional clusters are uniquely beneficial. They maintain superior uptime (99.95% vs. 99.5%) over zonal clusters, and leverage multiple control plane replicas. This is better for dynamic node pools. However, they consume more resources.
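
The gap between those two uptime figures is larger than it looks. As a rough sketch, assuming a 30-day month and treating the SLA percentage as a simple availability target (the function name is our own), the allowed downtime works out as follows:

```python
def allowed_downtime_minutes(sla_percent: float, days: int = 30) -> float:
    """Minutes of downtime per period that still satisfy the given availability target."""
    total_minutes = days * 24 * 60
    return round(total_minutes * (100 - sla_percent) / 100, 2)


if __name__ == "__main__":
    for sla in (99.95, 99.9, 99.5):
        print(f"{sla}% -> {allowed_downtime_minutes(sla)} minutes per 30 days")
```

Under these assumptions, 99.95% allows about 21.6 minutes of downtime per month, while 99.5% allows about 216 minutes, a tenfold difference.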

GKE Monitoring and Logging

Container performance logging is optional and opt-in. GKE routes all container metrics to Google Cloud operations suite—Google’s equivalent to Amazon CloudWatch. Formerly dubbed Stackdriver, the service also collects traces in addition to providing real-time metrics visualizations. Threshold-based alerts and other notifications are available.

Node auto-repair is enabled by default. This sets Google’s offering apart from Azure. Control plane logging is optional and off by default.

GKE Pricing & Support

GKE costs $0.10 per hour, per cluster—with a maximum of 50 clusters per zone and per region. Accounts with a service-level agreement (SLA) benefit from an uptick in uptime. One zonal cluster per account comes free. Management fees are nil until your account exceeds 720 zonal cluster hours per month.

All GKE accounts benefit from Google’s dedicated Site Reliability Engineers. These professionals provide support and advice for maximizing application performance, or tackling runtime errors.

Azure Kubernetes Service (AKS)

Microsoft released AKS in 2018, and the service has since steadily risen in popularity. Like GKE, Azure Kubernetes Service aims to help teams launch applications more quickly via baked-in CI/CD support.

AKS Compatibility

Azure Kubernetes Service supports the following K8s versions:

- 1.17

- 1.18

- 1.19

Microsoft expects to deprecate Kubernetes v1.16 in January 2021. Additionally, Kubernetes 1.20 enters into AKS preview this month—graduating to General Availability around March 2021.

Azure Kubernetes Service supports only two patch versions of each minor version at any given time. That means 1.17.a and 1.17.b, and likewise for each Kubernetes version listed, according to Microsoft’s documentation. The current version of AKS is v0.59.0 at the time of writing.
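
The "two per minor version" rule can be sketched as a small filter over version strings. This is only an illustration of the policy as described here: the function name is hypothetical and the version list is made-up sample data, not the actual AKS release list.

```python
from collections import defaultdict
from typing import Dict, Iterable, List


def supported_patches(versions: Iterable[str], keep: int = 2) -> Dict[str, List[str]]:
    """Group 'major.minor.patch' strings by minor version and keep the newest `keep` patches."""
    by_minor = defaultdict(list)
    for version in versions:
        major, minor, patch = (int(part) for part in version.split("."))
        by_minor[(major, minor)].append(patch)
    return {
        f"{major}.{minor}": [f"{major}.{minor}.{p}" for p in sorted(patches)[-keep:]]
        for (major, minor), patches in sorted(by_minor.items())
    }


if __name__ == "__main__":
    # Made-up sample data for illustration only.
    sample = ["1.17.9", "1.17.11", "1.17.13", "1.18.8", "1.18.10"]
    print(supported_patches(sample))
```

Patches are compared numerically rather than as strings, so 1.17.11 correctly sorts after 1.17.9.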

AKS also supports the following OSes:

- Ubuntu 18.04 (cluster K8s versions 1.18.8+)

- Ubuntu 16.04 (cluster K8s versions pre-dating 1.18)

- Windows Server 2019

Docker remains the default container runtime for AKS. Additionally, you may choose containerd instead, should it better support your usage. The latter works as either a low-level runtime (via cri-containerd and fulfilling the OCI specification) or high-level runtime using Kubernetes Runtime Class.

Containerd users can completely forego Docker and dockershim alike. This benefit isn’t exclusive to AKS containerd users. However, AKS is the only vendor offering a container runtime dichotomy.

AKS Feature Highlights

AKS champions rapid application development. It firstly accomplishes this via first-party integrations. AKS interfaces with Visual Studio Code Kubernetes tools, which facilitates (in part) the following:

- Exploratory tree views of clusters, including views of workloads, services, pods, and nodes

- Access to Helm repos, chart creation, scaffolding, and snippets

- Building and running of Dockerfile-based containers

- Running of commands and shells within application pods

- Easy bootstrapping of applications, including debugging and deployment

- Live resource monitoring via cluster explorer

This is available for free from the Visual Studio Marketplace. Installation is easy, provided dependencies like kubectl and docker or buildah exist on your machine.

Additionally, Azure Monitor integration provides access to continual telemetry data—including performance, health, and resource allocation. Azure DevOps works to supercharge the CI/CD process. Canary deployments are rapid, tracing provides failure detection, and debugging is relatively simple.

Cluster monitoring is based on Prometheus by default. This relies on queries, dimensional data, and visualizations. The latter leverages a built-in browser, console template language, or Grafana integrations which add meaning beyond simple numbers.

Elastic provisioning lets you scale multiple container instances without server management. Like GKE, AKS includes event-driven autoscaling based on workload. AKS will either add or subtract containers as needs fluctuate. Using Kubernetes Event-Driven Autoscaling (KEDA), you can define how these changes occur in response to resource demands. Traffic spikes may also trigger provisioning.

Azure calls this “elastic bursting.” The concept is that workloads should only consume the resources needed to stay afloat. AKS can responsively summon additional compute. Accordingly, uptime sits at 99.9%, or 99.95% with an Uptime SLA. Control plane components are split across clusters in specified zones.

Finally, security is a major focus area. Users inherently have privileges, which define accessibility to resources and functionality. Integration with Azure Active Directory means admins can configure identity and access management across their infrastructure. That fine-grained control prevents unauthorized access. It also allows customized control from one cluster to the next.

Azure Private Link also works to limit AKS control plane access to Azure networks. Communication pathways are protected. Interaction between nodes will be safeguarded from prying eyes, thanks to enterprise-grade encryption at rest. Admins may manage keys and disk images.

AKS Pricing & Support

AKS employs a pay-as-you-go pricing model for nodes. You can even choose capacity reservations for one year or three years. Spot resources are also available. It does cost $0.10 per cluster, per hour, when adding an Uptime SLA. The service also provides a cost calculator to determine costs based on your usage. Luckily, there are no charges for cluster management with AKS.

On the support side, Microsoft offers Azure Advisor to optimize both savings and performance. This resource provides guidance based on your needs. Users can submit support requests via their account, and AKS forums provide supplemental help.

Amazon Elastic Kubernetes Service (EKS)

EKS is the new kid on the block, launching shortly after AKS back in 2018. The story here is the same; Amazon EKS aims to bolster scalability, security, and visibility into your Kubernetes deployment.

EKS Compatibility

Amazon EKS supports the following K8s versions:

- 1.18.9

- 1.17.12

- 1.16.15

- 1.15.12

Amazon warns those updating a cluster from 1.16 to 1.17. The update will fail if any AWS Fargate pods are running a kubelet version below 1.16. You must recycle these pods until they’re running v1.16. Subsequent update attempts will then be successful. Additionally, Amazon recommends that you choose the latest EKS Kubernetes version that your clusters may support (unless a specific version is necessary). Support for Kubernetes 1.19 is currently slated for February 2021.
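
The 1.16 to 1.17 precondition can be expressed as a simple pre-upgrade check. The sketch below assumes you have already collected the kubelet version reported for each Fargate pod (for example from your cluster inventory); the function names, pod names, and version strings are hypothetical and only illustrate the comparison.

```python
import re
from typing import Dict, List, Tuple


def kubelet_minor(version: str) -> Tuple[int, int]:
    """Extract (major, minor) from a kubelet version string such as 'v1.15.10-eks-abc123'."""
    match = re.match(r"v?(\d+)\.(\d+)", version)
    if match is None:
        raise ValueError(f"unrecognized kubelet version: {version!r}")
    return int(match.group(1)), int(match.group(2))


def pods_blocking_upgrade(kubelet_versions: Dict[str, str],
                          minimum: Tuple[int, int] = (1, 16)) -> List[str]:
    """Return the names of pods whose kubelet is older than the required minimum."""
    return sorted(
        name for name, version in kubelet_versions.items()
        if kubelet_minor(version) < minimum
    )


if __name__ == "__main__":
    # Hypothetical inventory for illustration only.
    inventory = {
        "fargate-pod-a": "v1.15.10-eks-abc123",
        "fargate-pod-b": "v1.16.8-eks-def456",
    }
    print(pods_blocking_upgrade(inventory))  # pods to recycle before retrying the update
```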

Meanwhile, EKS OS support is somewhat more proprietary:

- Amazon Linux 2 (default)

- Ubuntu Amazon Machine Image (AMI)

- Windows Server 2019

Amazon requires that you use their distributions on EKS. Otherwise, Windows Server support is on par with competing offerings. Elastic Kubernetes Service also mandates that you use Docker for container runtimes, which makes it more limited than Google and Azure.

EKS Feature Highlights

Orchestration is unique on EKS, thanks in part to eksctl—Amazon’s open-source command line tool. It’s unique to EKS clusters and streamlines the creation of your clusters. Users can install eksctl using three methods:

- Homebrew, for macOS

- Curl, for Linux

- Chocolatey, for Windows

Eksctl is most commonly used to create or delete clusters and node groups. However, it’s also useful for scaling, updating, configuring, listing, installing, and writing config files. All commands submit a CloudFormation query with all required resources. This abstraction is user friendly. You can also drain nodes via this interface, to keep applications running harmoniously.

Node management is also a standout feature. EKS supports both Windows worker nodes and Windows container scheduling. You can manage applications with the same cluster—since EKS permits tandem operation of Windows worker nodes and Linux nodes. Upgrades are user initiated.

Another functionality worth highlighting is the Cluster Autoscaler, which automates the adding and removal of nodes in a cluster based on pod behavior. This feature leverages both the Kubernetes Cluster Autoscaler, which is a core component of the control plane, and the AWS Auto Scaling group (ASG).

Interestingly, EKS supports Arm-based EC2 instances. These are powered by AWS Graviton2 processors. The primary benefit to this arrangement, Amazon claims, is exceptional performance per dollar. Clusters supported by x86 architectures don’t quite measure up. Note that these instances are only available in certain regions, so not all customers may leverage them.

Networking and connecting applications to your clusters are also easy. AWS App Mesh and its controller let you create new meshed services, determine load balancing, and add encryption. App Mesh integrates with AWS Cloud Map—which permits custom resource naming. This not only simplifies management, but it allows web services to locate your updated application components more quickly.

EKS’ control planes are distributed across numerous availability zones. EKS uses three zones at any given time to boost uptime—which sits at 99.95% on average. No SLA is needed to secure this rate.

You may use Fargate for your deployment. Fargate is designed to outsource the provisioning and management of cluster nodes. You simply provision containers based on your sizing requirements and let AWS provision the nodes in the cluster to accommodate them. Like standard EKS, Fargate also supports RBAC, IAM, and a virtual private cloud. This feature set brings EKS more in line with options like AKS, which integrates with Active Directory.

EKS Pricing & Support

EKS costs $0.10 per cluster, per hour, on top of the standard costs of the EC2 instances and other resources you use. Fargate resources are conversely pay-as-you-go, and the AWS Pricing Calculator will help estimate your overall investment. Want to know how EKS financially compares to your existing deployment? Swing by Amazon’s Economics Resource Center to learn more.

You may contact Amazon for assistance via phone or email. You may also file a formal support ticket for EKS issues, or consult the Knowledge Center for casual problem solving.

FAQs

What is Hosted Kubernetes?

Hosted Kubernetes is a managed service where cloud providers like AWS, Azure, or Google handle the Kubernetes control plane, security patches, and scaling. This reduces operational overhead for teams adopting Kubernetes.

What are the main benefits of Hosted Kubernetes?

Hosted Kubernetes provides built-in scalability, automatic upgrades, integrated monitoring, and managed security updates. It saves engineering time compared to self-managed clusters and allows teams to focus on workloads.

How do AWS EKS, Azure AKS, and Google GKE differ as Hosted Kubernetes platforms?

EKS integrates tightly with AWS services, making it ideal for hybrid workloads, though it offers less flexibility in container runtimes. AKS is well-suited for enterprises using Azure, thanks to its integration with Microsoft developer tools and Azure Monitor. GKE stands out with advanced features like node auto-repair and vertical pod autoscaling, often leading in Kubernetes innovation.

What are the costs of Hosted Kubernetes services?

Both EKS and GKE charge around $0.10 per cluster per hour, in addition to compute and storage. AKS management is free, but uptime SLAs add cost. All providers follow a pay-as-you-go model for nodes and resources.
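
For a rough feel of what these management fees add up to, here is a small sketch using only the per-cluster rates quoted in this article. The 730-hour month, the function name, and the provider keys are assumptions; the sketch ignores node, storage, and network costs as well as GKE's free zonal cluster allowance, so treat the output as a lower bound rather than a quote.

```python
HOURS_PER_MONTH = 730  # assumed average month length in hours


def monthly_management_fee(provider: str, clusters: int,
                           aks_uptime_sla: bool = False,
                           hours: int = HOURS_PER_MONTH) -> float:
    """Estimated control-plane fee in USD per month, excluding all node and resource costs."""
    hourly_rates = {
        "eks": 0.10,
        "gke": 0.10,
        # AKS cluster management is free unless the paid Uptime SLA is added.
        "aks": 0.10 if aks_uptime_sla else 0.0,
    }
    return round(hourly_rates[provider.lower()] * clusters * hours, 2)


if __name__ == "__main__":
    for name in ("eks", "gke", "aks"):
        print(name, monthly_management_fee(name, clusters=3))
    print("aks + SLA", monthly_management_fee("aks", clusters=3, aks_uptime_sla=True))
```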

When should I choose Hosted Kubernetes instead of self-managed clusters?

Choose Hosted Kubernetes if you want reduced maintenance, enterprise support, and easier scaling. It’s best for teams new to Kubernetes or those that prioritize speed to market over deep control of the cluster internals.
