Kubernetes kOps

Kubernetes provides excellent container orchestration, but setting up a Kubernetes cluster from scratch can be painful. One solution is to use Kubernetes Operations, or kOps.

What is kOps?

kOps, short for Kubernetes Operations, is an open-source project that helps you create, destroy, upgrade, and maintain highly available, production-grade Kubernetes clusters. Depending on the requirement, kOps can also provision the cloud infrastructure.

kOps is mostly used to deploy Kubernetes clusters on AWS and GCE, but officially the tool only supports AWS. Support for other cloud providers (such as DigitalOcean, GCP, and OpenStack) is in the beta stage at the time of writing. The developers of this open-source project describe it as “kubectl for clusters” on their GitHub homepage, which may be confusing for some but helpful for others. Let’s start by reviewing its benefits.

Benefits of kOps

- Automates the provisioning of AWS and GCE Kubernetes clusters

- Deploys highly available Kubernetes masters

- Supports rolling cluster updates

- Provides command-line autocompletion

- Generates Terraform and CloudFormation configurations

- Manages cluster add-ons

- Supports a state-sync model for dry runs and automatic idempotency

- Creates instance groups to support heterogeneous clusters

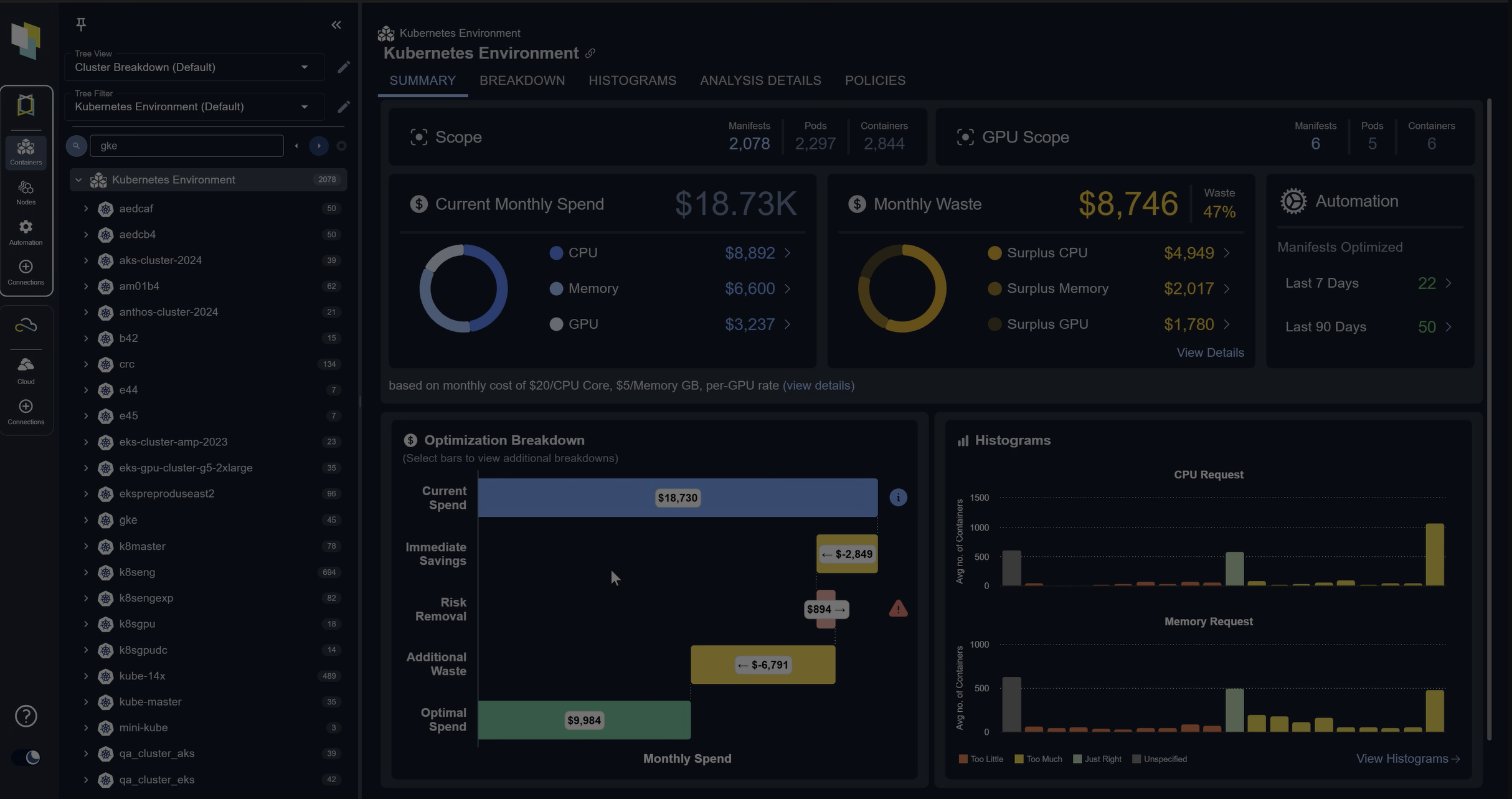

Spend less time optimizing Kubernetes Resources. Rely on AI-powered Kubex - an automated Kubernetes optimization platform

Free 30-day TrialHow to Install kOps

Use the following steps to install kOps on your Linux machine. In this example, we are using Ubuntu 18.04.

- Download kOps from the releases package.

    ubuntu@ip-161-32-38-140:~$ curl -Lo kops-linux-amd64 https://github.com/kubernetes/kops/releases/download/$(curl -s https://api.github.com/repos/kubernetes/kops/releases/latest | grep tag_name | cut -d '"' -f 4)/kops-linux-amd64

- Give executable permission to the downloaded kOps file and move it to /usr/local/bin/.

    ubuntu@ip-161-32-38-140:~$ sudo chmod +x kops-linux-amd64
    ubuntu@ip-161-32-38-140:~$ sudo mv kops-linux-amd64 /usr/local/bin/kops

- Run the kops command to verify the installation.

    ubuntu@ip-161-32-38-140:~$ kops
    kops is Kubernetes ops.

    kops is the easiest way to get a production grade Kubernetes cluster up and running. We like to think of it as kubectl for clusters.

    kops helps you create, destroy, upgrade and maintain production-grade, highly available, Kubernetes clusters from the command line. AWS (Amazon Web Services) is currently officially supported, with GCE and VMware vSphere in alpha support.

    Usage:
      kops [command]

    Available Commands:
      completion     Output shell completion code for the given shell (bash or zsh).
      create         Create a resource by command line, filename or stdin.
      delete         Delete clusters,instancegroups, or secrets.
      describe       Describe a resource.
      edit           Edit clusters and other resources.
      export         Export configuration.
      get            Get one or many resources.
      import         Import a cluster.
      replace        Replace cluster resources.
      rolling-update Rolling update a cluster.
      toolbox        Misc infrequently used commands.
      update         Update a cluster.
      upgrade        Upgrade a kubernetes cluster.
      validate       Validate a kops cluster.
      version        Print the kops version information.

    Flags:
          --alsologtostderr                  log to standard error as well as files
          --config string                    config file (default is $HOME/.kops.yaml)
      -h, --help                             help for kops
          --log_backtrace_at traceLocation   when logging hits line file:N, emit a stack trace (default :0)
          --log_dir string                   If non-empty, write log files in this directory
          --logtostderr                      log to standard error instead of files (default false)
          --name string                      Name of cluster
          --state string                     Location of state storage
          --stderrthreshold severity         logs at or above this threshold go to stderr (default 2)
      -v, --v Level                          log level for V logs
          --vmodule moduleSpec               comma-separated list of pattern=N settings for file-filtered logging

    Use "kops [command] --help" for more information about a command.

- Check the kOps version.

    ubuntu@ip-161-32-38-140:~$ kops version
    Version 1.8.0 (git-5099bc5)
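If you would rather pin a specific release than always fetch the latest one, the download URL can be assembled by hand. The sketch below assumes release assets keep the kops-<os>-<arch> naming used in the download step; the helper name kops_download_url and the tag v1.19.0 are illustrative and not taken from this article.

```bash
#!/bin/sh
# Build the download URL for a pinned kOps release.
# Usage: kops_download_url <version-tag> [os] [arch]
# Defaults to linux/amd64, matching the machine used in this walkthrough.
kops_download_url() {
    version="$1"
    os="${2:-linux}"
    arch="${3:-amd64}"
    printf 'https://github.com/kubernetes/kops/releases/download/%s/kops-%s-%s\n' \
        "$version" "$os" "$arch"
}

# Print the URL for an example tag; pass the result to curl -Lo to download it.
kops_download_url v1.19.0
```

Pinning a tag keeps repeated installs reproducible, at the cost of having to bump the version yourself.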

The Basics of kOps Commands

Below are the most widely-used kOps commands you should know.

kOps create

This command registers a cluster:

    kops create cluster <clustername>

You can pass additional parameters beyond the default command, such as the zone, region, instance type, and number of nodes.

kOps update

This command updates the cluster to match the specified cluster specification:

    kops update cluster --name <clustername>

Without the --yes flag, the command runs in preview mode and only shows the changes it would make. We recommend previewing first to confirm the output is what you expect, then running the command again with the --yes flag to apply your changes to the cluster.
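The preview-then-apply habit can be made explicit with a tiny wrapper. This is a minimal sketch: the function name kops_update_cmd and the cluster name mycluster.k8s.local are hypothetical, and the function only prints the command so you can inspect it before running anything.

```bash
#!/bin/sh
# Print the kops update command for a cluster.
# Mode "preview" (the default) omits --yes; mode "apply" adds it.
kops_update_cmd() {
    cluster="$1"
    mode="${2:-preview}"
    case "$mode" in
        preview) printf 'kops update cluster --name %s\n' "$cluster" ;;
        apply)   printf 'kops update cluster --name %s --yes\n' "$cluster" ;;
        *)       echo "unknown mode: $mode" >&2; return 1 ;;
    esac
}

# Review the output of the first command before running the second.
kops_update_cmd mycluster.k8s.local preview
kops_update_cmd mycluster.k8s.local apply
```

Defaulting to preview means a forgotten argument can never apply changes by accident.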

kOps get

This command lists all the clusters:

    kops get clusters
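In scripts it is often handy to reduce that listing to bare cluster names. The sketch below assumes the tabular output with a NAME, CLOUD, ZONES header row that appears later in this article's walkthrough; the helper name cluster_names is hypothetical.

```bash
#!/bin/sh
# Read `kops get clusters` output on stdin and print one cluster name per line.
# Assumes a header row followed by rows whose first column is the cluster name.
cluster_names() {
    awk 'NR > 1 && NF > 0 { print $1 }'
}

# Sample input in the format shown in this article's walkthrough.
printf 'NAME\tCLOUD\tZONES\nkopsdemo1.k8s.local\taws\teu-central-1a\n' | cluster_names
```

Against a real state store you would pipe the command itself: kops get clusters | cluster_names.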

kOps delete

This command deletes the specified cluster from the registry along with all of its cloud resources:

    kops delete cluster --name <clustername>

As with update, the command runs in preview mode unless you add the --yes flag.

kOps rolling-update

This command updates a Kubernetes cluster to match the cloud and kOps specifications:

    kops rolling-update cluster --name <clustername>

As with update, the command runs in preview mode unless you add the --yes flag.

kOps validate

This command checks whether the created cluster is up. If the nodes and pods are still in the pending state, the validate command reports that the cluster is not healthy yet.

    kops validate cluster --wait <specified_time>

For example, with --wait 10m the command keeps validating the cluster for up to 10 minutes.
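Conceptually, waiting for a healthy cluster is a poll-until-success loop. The sketch below is a generic retry helper and not how kOps implements --wait; the function name retry_until_ok and the attempt counts are assumptions chosen for illustration.

```bash
#!/bin/sh
# Retry a command until it succeeds or the attempt budget is used up.
# Usage: retry_until_ok <max_attempts> <sleep_seconds> <command> [args...]
retry_until_ok() {
    max_attempts="$1"
    delay="$2"
    shift 2
    attempt=1
    while :; do
        # Stop as soon as the command reports success.
        if "$@"; then
            return 0
        fi
        # Give up once the last allowed attempt has failed.
        if [ "$attempt" -ge "$max_attempts" ]; then
            return 1
        fi
        attempt=$((attempt + 1))
        sleep "$delay"
    done
}

# Hypothetical use against a real cluster (60 attempts x 10 s is about 10 minutes):
#   retry_until_ok 60 10 kops validate cluster
retry_until_ok 3 0 true && echo "healthy"
```

The built-in --wait flag is the simpler choice for kOps itself; a helper like this is mainly useful when the same wait has to wrap other checks as well.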

Now that you know the basics of kOps commands, let’s look at how to create a Kubernetes cluster on AWS using kOps.

How to Set Up Kubernetes on AWS using kOps

- Install kubectl.

    ubuntu@ip-161-32-38-140:~$ curl -LO https://storage.googleapis.com/kubernetes-release/release/$(curl -s https://storage.googleapis.com/kubernetes-release/release/stable.txt)/bin/linux/amd64/kubectl
      % Total    % Received % Xferd  Average Speed   Time    Time     Time  Current
                                     Dload  Upload   Total   Spent    Left  Speed
    100 38.3M  100 38.3M    0     0  6862k      0  0:00:05  0:00:05 --:--:-- 8127k

- Give executable permission to the downloaded file and move it to /usr/local/bin/.

    ubuntu@ip-161-32-38-140:~$ chmod +x ./kubectl
    ubuntu@ip-161-32-38-140:~$ sudo mv ./kubectl /usr/local/bin/kubectl

- Create an S3 bucket using the AWS CLI, where kOps will save all of the cluster’s state information. Use LocationConstraint to avoid region errors.

    ubuntu@ip-161-32-38-140:~$ aws s3api create-bucket --bucket my-kops-bucket-1132 --region us-west-2 --create-bucket-configuration LocationConstraint=us-west-2
    {
        "Location": "http://my-kops-bucket-1132.s3.amazonaws.com/"
    }

- List your S3 buckets to see the bucket you have created.

    ubuntu@ip-161-32-38-140:~$ aws s3 ls
    2021-02-16 17:10:24 my-kops-bucket-1132

- Enable versioning on the S3 bucket.

    ubuntu@ip-161-32-38-140:~$ aws s3api put-bucket-versioning --bucket my-kops-bucket-1132 --versioning-configuration Status=Enabled

- Generate an SSH key, which kOps will use for cluster login and password generation.

    ubuntu@ip-161-32-38-140:~$ ssh-keygen
    Generating public/private rsa key pair.
    Enter file in which to save the key (/home/ubuntu/.ssh/id_rsa):
    Enter passphrase (empty for no passphrase):
    Enter same passphrase again:
    Your identification has been saved in /home/ubuntu/.ssh/id_rsa.
    Your public key has been saved in /home/ubuntu/.ssh/id_rsa.pub.
    The key fingerprint is:
    SHA256:fH4JCBXMNRqzk1hmoK+cXmwSFaeBsuGBA5IWMkNuvq0 ubuntu@ip-161-32-38-140
    The key's randomart image is:
    +---[RSA 2048]----+
    |O=. .++Xoo |
    |B++ .. @o* . |
    |.= =. = = |
    |o o o o o |
    | . . . S o |
    | o. = o . . |
    | . .= + . o |
    | .. + . |
    | E . |
    +----[SHA256]-----+

- Expose the cluster name and S3 bucket as environment variables. These apply only to the current session. The suffix ‘.k8s.local’ is used because we are not using any pre-configured DNS.

    ubuntu@ip-161-32-38-140:~$ export KOPS_CLUSTER_NAME=kopsdemo1.k8s.local
    ubuntu@ip-161-32-38-140:~$ export KOPS_STATE_STORE=s3://my-kops-bucket-1132

- Use the kops create command to create the cluster. Below are the parameters we used:

- --cloud: the cloud provider to use

- --zones: the zone where the cluster instances will be deployed

- --node-count: the number of nodes in the Kubernetes cluster being deployed

- --node-size / --master-size: the EC2 instance types; this example uses micro instances

- --name: the name of the cluster
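Before creating anything, it can help to sanity-check the cluster name and state store values used in this walkthrough. The sketch below is only an illustration: the function name check_kops_env is hypothetical, and the two rules it enforces (an s3:// state store and a .k8s.local suffix) reflect this article's setup without pre-configured DNS, not general kOps requirements.

```bash
#!/bin/sh
# Check a cluster name and state store before calling kops create cluster.
# Returns 0 when both match this walkthrough's conventions, 1 otherwise.
check_kops_env() {
    name="$1"
    store="$2"
    # The state store in this article is an S3 bucket URL.
    case "$store" in
        s3://?*) ;;
        *) echo "state store must look like s3://<bucket>" >&2; return 1 ;;
    esac
    # Without pre-configured DNS, the cluster name ends in .k8s.local.
    case "$name" in
        ?*.k8s.local) ;;
        *) echo "cluster name must end in .k8s.local" >&2; return 1 ;;
    esac
    return 0
}

check_kops_env "kopsdemo1.k8s.local" "s3://my-kops-bucket-1132" && echo "ok"
```

If you use a real DNS zone or a different state store backend, relax the corresponding check.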

    ubuntu@ip-161-32-38-140:~$ kops create cluster --cloud=aws --zones=eu-central-1a --node-count=1 --node-size=t2.micro --master-size=t2.micro --name=${KOPS_CLUSTER_NAME}
    I0216 17:14:49.225238 3547 subnets.go:180] Assigned CIDR 172.20.32.0/19 to subnet eu-central-1a
    I0216 17:14:50.068088 3547 create_cluster.go:717] Using SSH public key: /home/ubuntu/.ssh/id_rsa.pub
    Previewing changes that will be made:

    I0216 17:14:51.332590 3547 apply_cluster.go:465] Gossip DNS: skipping DNS validation
    I0216 17:14:51.392712 3547 executor.go:111] Tasks: 0 done / 83 total; 42 can run
    W0216 17:14:51.792113 3547 vfs_castore.go:604] CA private key was not found
    I0216 17:14:51.938057 3547 executor.go:111] Tasks: 42 done / 83 total; 17 can run
    I0216 17:14:52.436407 3547 executor.go:111] Tasks: 59 done / 83 total; 18 can run
    I0216 17:14:52.822395 3547 executor.go:111] Tasks: 77 done / 83 total; 2 can run
    I0216 17:14:52.823088 3547 executor.go:111] Tasks: 79 done / 83 total; 2 can run
    I0216 17:14:53.406919 3547 executor.go:111] Tasks: 81 done / 83 total; 2 can run
    I0216 17:14:53.842148 3547 executor.go:111] Tasks: 83 done / 83 total; 0 can run

    LaunchTemplate/master-eu-central-1a.masters.kopsdemo1.k8s.local
      AssociatePublicIP        true
      HTTPPutResponseHopLimit  1
      HTTPTokens               optional
      IAMInstanceProfile       name:masters.kopsdemo1.k8s.local id:masters.kopsdemo1.k8s.local
      ImageID                  099720109477/ubuntu/images/hvm-ssd/ubuntu-focal-20.04-amd64-server-20210119.1
      InstanceType             t2.micro
      RootVolumeSize           64
      RootVolumeType           gp2
      RootVolumeEncryption     false
      RootVolumeKmsKey
      SSHKey                   name:kubernetes.kopsdemo1.k8s.local-3e:19:92:ca:dd:64:d5:cf:ff:ed:3a:92:0f:40:d4:e8 id:kubernetes.kopsdemo1.k8s.local-3e:19:92:ca:dd:64:d5:cf:ff:ed:3a:92:0f:40:d4:e8
      SecurityGroups           [name:masters.kopsdemo1.k8s.local]
      SpotPrice
      Tags                     {k8s.io/cluster-autoscaler/node-template/label/kubernetes.io/role: master, k8s.io/cluster-autoscaler/node-template/label/kops.k8s.io/instancegroup: master-eu-central-1a, k8s.io/role/master: 1, kops.k8s.io/instancegroup: master-eu-central-1a, Name: master-eu-central-1a.masters.kopsdemo1.k8s.local, KubernetesCluster: kopsdemo1.k8s.local, kubernetes.io/cluster/kopsdemo1.k8s.local: owned, k8s.io/cluster-autoscaler/node-template/label/node-role.kubernetes.io/master: }

    Subnet/eu-central-1a.kopsdemo1.k8s.local
      ShortName                eu-central-1a
      VPC                      name:kopsdemo1.k8s.local
      AvailabilityZone         eu-central-1a
      CIDR                     172.20.32.0/19
      Shared                   false
      Tags                     {KubernetesCluster: kopsdemo1.k8s.local, kubernetes.io/cluster/kopsdemo1.k8s.local: owned, SubnetType: Public, kubernetes.io/role/elb: 1, Name: eu-central-1a.kopsdemo1.k8s.local}

    VPC/kopsdemo1.k8s.local
      CIDR                     172.20.0.0/16
      EnableDNSHostnames       true
      EnableDNSSupport         true
      Shared                   false
      Tags                     {kubernetes.io/cluster/kopsdemo1.k8s.local: owned, Name: kopsdemo1.k8s.local, KubernetesCluster: kopsdemo1.k8s.local}

    VPCDHCPOptionsAssociation/kopsdemo1.k8s.local
      VPC                      name:kopsdemo1.k8s.local
      DHCPOptions              name:kopsdemo1.k8s.local

    Must specify --yes to apply changes

    Cluster configuration has been created.

    Suggestions:
     * list clusters with: kops get cluster
     * edit this cluster with: kops edit cluster kopsdemo1.k8s.local
     * edit your node instance group: kops edit ig --name=kopsdemo1.k8s.local nodes-eu-central-1a
     * edit your master instance group: kops edit ig --name=kopsdemo1.k8s.local master-eu-central-1a

    Finally configure your cluster with: kops update cluster --name kopsdemo1.k8s.local --yes --admin

- Verify that the cluster was created.

    ubuntu@ip-161-32-38-140:~$ kops get cluster
    NAME                 CLOUD  ZONES
    kopsdemo1.k8s.local  aws    eu-central-1a

- Apply the cluster specification to the cluster.

    ubuntu@ip-161-32-38-140:~$ kops update cluster --name kopsdemo1.k8s.local --yes --admin
    I0216 17:15:24.800767 3556 apply_cluster.go:465] Gossip DNS: skipping DNS validation
    I0216 17:15:25.919282 3556 executor.go:111] Tasks: 0 done / 83 total; 42 can run
    W0216 17:15:26.343336 3556 vfs_castore.go:604] CA private key was not found
    I0216 17:15:26.421652 3556 keypair.go:195] Issuing new certificate: "etcd-clients-ca"
    I0216 17:15:26.450699 3556 keypair.go:195] Issuing new certificate: "etcd-peers-ca-main"
    I0216 17:15:26.470785 3556 keypair.go:195] Issuing new certificate: "etcd-manager-ca-main"
    I0216 17:15:26.531852 3556 keypair.go:195] Issuing new certificate: "etcd-peers-ca-events"
    I0216 17:15:26.551601 3556 keypair.go:195] Issuing new certificate: "apiserver-aggregator-ca"
    I0216 17:15:26.571834 3556 keypair.go:195] Issuing new certificate: "etcd-manager-ca-events"
    I0216 17:15:26.592090 3556 keypair.go:195] Issuing new certificate: "master"
    W0216 17:15:26.652894 3556 vfs_castore.go:604] CA private key was not found
    I0216 17:15:26.653013 3556 keypair.go:195] Issuing new certificate: "ca"
    I0216 17:15:31.344075 3556 executor.go:111] Tasks: 42 done / 83 total; 17 can run
    I0216 17:15:32.306125 3556 executor.go:111] Tasks: 59 done / 83 total; 18 can run
    I0216 17:15:34.189798 3556 executor.go:111] Tasks: 77 done / 83 total; 2 can run
    I0216 17:15:34.190464 3556 executor.go:111] Tasks: 79 done / 83 total; 2 can run
    I0216 17:15:35.738600 3556 executor.go:111] Tasks: 81 done / 83 total; 2 can run
    I0216 17:15:37.810100 3556 executor.go:111] Tasks: 83 done / 83 total; 0 can run
    I0216 17:15:37.904257 3556 update_cluster.go:313] Exporting kubecfg for cluster
    kops has set your kubectl context to kopsdemo1.k8s.local

    Cluster is starting. It should be ready in a few minutes.

    Suggestions:
     * validate cluster: kops validate cluster --wait 10m
     * list nodes: kubectl get nodes --show-labels
     * ssh to the master: ssh -i ~/.ssh/id_rsa ubuntu@api.kopsdemo1.k8s.local
     * the ubuntu user is specific to Ubuntu. If not using Ubuntu please use the appropriate user based on your OS.
     * read about installing addons at: https://kops.sigs.k8s.io/operations/addons.

- If you check right away whether the Kubernetes nodes are running, you will get an error. Be patient and wait a few minutes (5-10) until the cluster is created.

    ubuntu@ip-161-32-38-140:~$ kubectl get nodes
    Unable to connect to the server: dial tcp: lookup api-kopsdemo1-k8s-local-dason2-1001342368.eu-central-1.elb.amazonaws.com on 127.0.0.53:53: no such host

- Validate the cluster.

    ubuntu@ip-161-32-38-140:~$ kops validate cluster --wait 10m
    Validating cluster kopsdemo1.k8s.local

    INSTANCE GROUPS
    NAME                  ROLE    MACHINETYPE  MIN  MAX  SUBNETS
    master-eu-central-1a  Master  t2.micro     1    1    eu-central-1a
    nodes-eu-central-1a   Node    t2.micro     1    1    eu-central-1a

- List the nodes to check that they are all ready and running. Both the master and the worker node should show the Ready status.

    ubuntu@ip-161-32-38-140:~$ kubectl get nodes
    NAME                                             STATUS   ROLES    AGE     VERSION
    ip-172-20-39-172.eu-central-1.compute.internal   Ready    master   11m     v1.19.7
    ip-172-20-56-248.eu-central-1.compute.internal   Ready    node     6m58s   v1.19.7

- You can check all the pods running in the Kubernetes cluster.

    ubuntu@ip-161-32-38-140:~$ kubectl get pods --all-namespaces
    NAMESPACE     NAME                                                                     READY   STATUS    RESTARTS   AGE
    kube-system   dns-controller-8d8889c4b-xp9dl                                           1/1     Running   0          9m16s
    kube-system   etcd-manager-events-ip-172-20-39-172.eu-central-1.compute.internal       1/1     Running   0          11m
    kube-system   etcd-manager-main-ip-172-20-39-172.eu-central-1.compute.internal         1/1     Running   0          11m
    kube-system   kops-controller-9skdk                                                    1/1     Running   3          8m51s
    kube-system   kube-apiserver-ip-172-20-39-172.eu-central-1.compute.internal            2/2     Running   0          11m
    kube-system   kube-controller-manager-ip-172-20-39-172.eu-central-1.compute.internal   1/1     Running   6          11m
    kube-system   kube-dns-696cb84c7-g8nhb                                                 3/3     Running   0          5m37s
    kube-system   kube-dns-autoscaler-55f8f75459-zlxbr                                     1/1     Running   0          9m16s
    kube-system   kube-proxy-ip-172-20-39-172.eu-central-1.compute.internal                1/1     Running   0          11m
    kube-system   kube-proxy-ip-172-20-56-248.eu-central-1.compute.internal                1/1     Running   0          8m4s
    kube-system   kube-scheduler-ip-172-20-39-172.eu-central-1.compute.internal            1/1     Running   5          11m
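The readiness check on the node listing can also be automated. This is a minimal sketch that assumes the default kubectl get nodes column layout, with STATUS in the second column; the helper name count_ready_nodes is hypothetical, and the sample rows are copied from the node listing in this walkthrough.

```bash
#!/bin/sh
# Read `kubectl get nodes` output on stdin and print how many nodes are Ready.
# Only an exact "Ready" status is counted; the header row is skipped.
count_ready_nodes() {
    awk 'NR > 1 && $2 == "Ready" { n++ } END { print n + 0 }'
}

# Sample rows from the node listing in this walkthrough.
count_ready_nodes <<'EOF'
NAME                                             STATUS   ROLES    AGE     VERSION
ip-172-20-39-172.eu-central-1.compute.internal   Ready    master   11m     v1.19.7
ip-172-20-56-248.eu-central-1.compute.internal   Ready    node     6m58s   v1.19.7
EOF
```

On a live cluster, pipe the command directly: kubectl get nodes | count_ready_nodes, then compare the result with the node count you requested.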

How to Delete a Cluster

Deleting a Kubernetes cluster with kOps is just as simple as creating one. The following command removes all of the cluster’s cloud resources as well as the cluster registry itself.

    ubuntu@ip-161-32-38-140:~$ kops delete cluster --name kopsdemo1.k8s.local --yes
    TYPE                  NAME                                              ID
    autoscaling-config    master-eu-central-1a.masters.kopsdemo1.k8s.local  lt-0cc11aec1943204e4
    autoscaling-config    nodes-eu-central-1a.kopsdemo1.k8s.local           lt-0da65d2eaf6de9f5c
    autoscaling-group     master-eu-central-1a.masters.kopsdemo1.k8s.local  master-eu-central-1a.masters.kopsdemo1.k8s.local
    autoscaling-group     nodes-eu-central-1a.kopsdemo1.k8s.local           nodes-eu-central-1a.kopsdemo1.k8s.local
    dhcp-options          kopsdemo1.k8s.local                               dopt-0403a0cbbfbc0c72b
    iam-instance-profile  masters.kopsdemo1.k8s.local                       masters.kopsdemo1.k8s.local
    iam-instance-profile  nodes.kopsdemo1.k8s.local                         nodes.kopsdemo1.k8s.local
    iam-role              masters.kopsdemo1.k8s.local                       masters.kopsdemo1.k8s.local
    iam-role              nodes.kopsdemo1.k8s.local                         nodes.kopsdemo1.k8s.local
    instance              master-eu-central-1a.masters.kopsdemo1.k8s.local  i-069c73f2c23eb502a
    instance              nodes-eu-central-1a.kopsdemo1.k8s.local           i-0401d6b0d4fc11e77

    iam-instance-profile:nodes.kopsdemo1.k8s.local ok
    load-balancer:api-kopsdemo1-k8s-local-dason2 ok
    iam-instance-profile:masters.kopsdemo1.k8s.local ok
    iam-role:masters.kopsdemo1.k8s.local ok
    instance:i-069c73f2c23eb502a ok
    autoscaling-group:nodes-eu-central-1a.kopsdemo1.k8s.local ok
    iam-role:nodes.kopsdemo1.k8s.local ok
    instance:i-0401d6b0d4fc11e77 ok
    autoscaling-config:lt-0cc11aec1943204e4 ok
    autoscaling-config:lt-0da65d2eaf6de9f5c ok
    autoscaling-group:master-eu-central-1a.masters.kopsdemo1.k8s.local ok
    keypair:key-0e93f855f382a68fb ok

    Deleted kubectl config for kopsdemo1.k8s.local

    Deleted cluster: "kopsdemo1.k8s.local"
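Because deletion is irreversible, some teams add a confirmation step in front of the --yes flag. The sketch below is one hypothetical way to do that: the function kops_delete_cmd only prints the destructive form of the command when the second argument repeats the cluster name exactly, and otherwise prints the preview form.

```bash
#!/bin/sh
# Print the kops delete command for a cluster.
# --yes is added only when the typed confirmation equals the cluster name.
kops_delete_cmd() {
    cluster="$1"
    typed="$2"
    if [ "$typed" = "$cluster" ]; then
        printf 'kops delete cluster --name %s --yes\n' "$cluster"
    else
        printf 'kops delete cluster --name %s\n' "$cluster"
    fi
}

# No confirmation: preview form only.
kops_delete_cmd kopsdemo1.k8s.local ""
# Confirmation matches the cluster name: destructive form.
kops_delete_cmd kopsdemo1.k8s.local kopsdemo1.k8s.local
```

Requiring the full name makes it harder to delete the wrong cluster when several are registered in the same state store.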

Conclusion

In this article, you’ve learned that kOps is a great tool for managing Kubernetes clusters hosted on public cloud platforms because it reduces the complexity involved. You’ve also learned the most common kOps commands, as well as how to set up a Kubernetes cluster on AWS.

FAQs

What is Kubernetes kOps?

Kubernetes kOps (Kubernetes Operations) is an open-source tool that automates the creation, management, and deletion of production-grade Kubernetes clusters. It is often described as “kubectl for clusters.”

Which cloud platforms does Kubernetes kOps support?

Kubernetes kOps officially supports AWS, with beta support for GCP, DigitalOcean, and OpenStack. It can also generate Terraform or CloudFormation files for provisioning infrastructure.

What are the key benefits of Kubernetes kOps?

Benefits include automated cluster provisioning, rolling updates, high availability deployments, instance group management, Terraform integration, and built-in cluster validation.

How do you set up a cluster with Kubernetes kOps?

You configure an S3 bucket for state storage, set environment variables, and use commands like kops create cluster, kops update cluster, and kops validate cluster to provision and manage Kubernetes clusters.

What are best practices when using Kubernetes kOps?

Best practices include running updates in preview mode before applying changes, enabling S3 versioning for state storage, using SSH key authentication, tagging resources for cost allocation, and validating clusters after deployment.
