Primer: Cloud Resource Management

When it comes to selecting the appropriate type and size of cloud computing resources, the saying “one man’s trash is another man’s treasure” rings true. How so? To someone on the finance team, excess resources should be discarded rather than kept as pricey line items against the company’s budget. But to site reliability engineers, that “excess” represents safety; the extra headroom provides computing space for the unknown unknowns. This dichotomy is at the core of the challenges of right-sizing and resource optimization. Further, cloud infrastructure has become so flexible and agile compared to physical server infrastructure that organizations now allow many more individuals and developers to “serve themselves.” This is remarkably different from the tightly controlled procurement and provisioning process of the not-so-distant past. Most of these self-service users are less concerned with cost efficiency than with performance and responding to the needs of the business.

When right-sizing, some instances may require an increase in resources to remove a performance bottleneck, while other instances may have an excess of resources. In this article, we focus on cloud resource management, particularly on managing waste, which is the main consideration for FinOps practitioners. Wasted cloud computing resources fall into two categories: the unused and the underutilized. There are other distinctions, too. For example, right-sizing can take place over the course of weeks or months, or in real time; changes can be performed either manually or through automation. These concepts apply to hosts, virtual machines, storage, networks, containers, and even serverless computing.

This is also the area of cloud cost optimization that holds the highest level of mathematical and logistical complexity—requiring both algorithms and automation to solve. A mistake in a production environment can lead to an application outage, making the stakes high. The high level of interdependencies between decisions also contributes to the complexity. For example, recommendation algorithms must be sophisticated enough to consider an organization’s prior purchasing commitments made to specific instance families, types, sizes—also known as reservations—before recommending new configurations.

Cloud resource management challenges

There are many challenges in identifying underused computing resources and recommending the correct capacity. We have identified four major challenge areas:

- Identifying what to measure by considering all aspects of computing resources

- Knowing how to measure it by dealing with data patterns and aggregation

- Finding the right configuration by selecting the correct type and size

- Applying advanced right-sizing techniques such as auto-scaling

1. Identifying what to measure

The process of identifying waste begins with measurement. This seems simple on the surface, but proves to be complex. Most administrators are used to measuring the resources of a host, virtual machine, or container based on its CPU usage. The more experienced infrastructure managers also account for memory consumption as an ongoing measurement. However, in an AWS environment, the memory usage metric is not measured automatically by CloudWatch, so engineering managers must install agents on their hosts in order to collect it.

In fact, only a small percentage of operations managers adopt a consistent measurement of ancillary resources (beyond CPU and memory). This lack of measurement often causes performance bottlenecks that prove difficult to diagnose.

Here are a few examples of such resources:

Network Ports

Network ports attached to servers have fixed bandwidth ratings and must be thoughtfully chosen to avoid bottlenecks. The most common speeds are:

- 100 Megabits per second

- 1 Gigabit per second (1000 Megabits)

- 10 Gigabits per second (10,000 Megabits)

As an example, high-frequency stock trading is an application that requires low-latency networks to stream the data needed to process trades in milliseconds. This is a scenario where you simply can’t ignore a networking bottleneck. Many other applications depend on network speed to varying degrees.

Block Storage

Block storage is the equivalent of a hard drive attached to a host. Its capacity is measured not only in terms of storage space, but also in terms of how many input/output operations per second (IOPS) it can process. AWS offers many types of EBS volumes with various levels of IOPS performance, including selectable IOPS performance known as “provisioned IOPS,” and these must be considered as part of the provisioning process.

The performance of a database is often directly proportionate to the number of input and output operations that its underlying computing platform can provide. This is why IOPS is a critical resource to consider in capacity planning.

Maximum IOPS Throughput Capacity

The maximum IOPS throughput capacity of an EC2 instance (AWS terminology for a virtual machine) is an often neglected consideration during a capacity engineering exercise. Each EC2 instance has a limited capacity in its backplane. This means that each virtual machine or container ultimately runs on hardware, and each server is designed with limitations regarding how fast it can move the bits and bytes between its CPU, memory, attached storage, and network card. So the maximum throughput capacity of the EC2 instance must be at least as high as the aggregated throughput of its attached EBS volumes to avoid a bottleneck.
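
The check described above can be sketched in a few lines. This is a minimal illustration, not an AWS API: the `Volume` class, the `iops_headroom` function, and the per-volume IOPS figures are hypothetical, and the 4,000 IOPS instance limit is simply the c4.large figure quoted later in this article.

```python
from dataclasses import dataclass


@dataclass
class Volume:
    """A hypothetical attached block storage volume."""
    name: str
    provisioned_iops: int


def iops_headroom(instance_max_iops: int, volumes: list[Volume]) -> int:
    """Return the instance IOPS limit minus the aggregate IOPS of its volumes.

    A negative result means the instance itself is the bottleneck: the
    attached volumes can deliver more IOPS than the instance can move.
    """
    return instance_max_iops - sum(v.provisioned_iops for v in volumes)


# Two illustrative volumes attached to an instance rated for 4,000 IOPS.
volumes = [Volume("data", 3000), Volume("logs", 3000)]
headroom = iops_headroom(4000, volumes)
print(f"IOPS headroom: {headroom}")
```

A negative headroom suggests either moving to an instance with a higher IOPS rating or reducing the provisioned IOPS that the instance can never use.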

Apps & Middleware

Even though we are focused on infrastructure computing resources in this article, keep in mind that applications and middleware technologies, such as databases and messaging buses, should also be monitored and measured. Although some metrics are available from AWS CloudWatch for services provided by AWS, such as Relational Database Service (RDS), administrators are still responsible for installing third-party agents and tools to monitor their own application components installed on EC2 instances and containers.

2. Knowing how to measure

How to measure usage is almost as complex as what to measure. This is because workloads have both cyclical and non-cyclical patterns, with measurements collected as time-series data samples that must then be aggregated and interpreted. Another complicating factor is that idle, unused, overprovisioned, and standby resources are not always easy to distinguish. Let us take a closer look, with examples, to clarify these points.

Usage Patterns

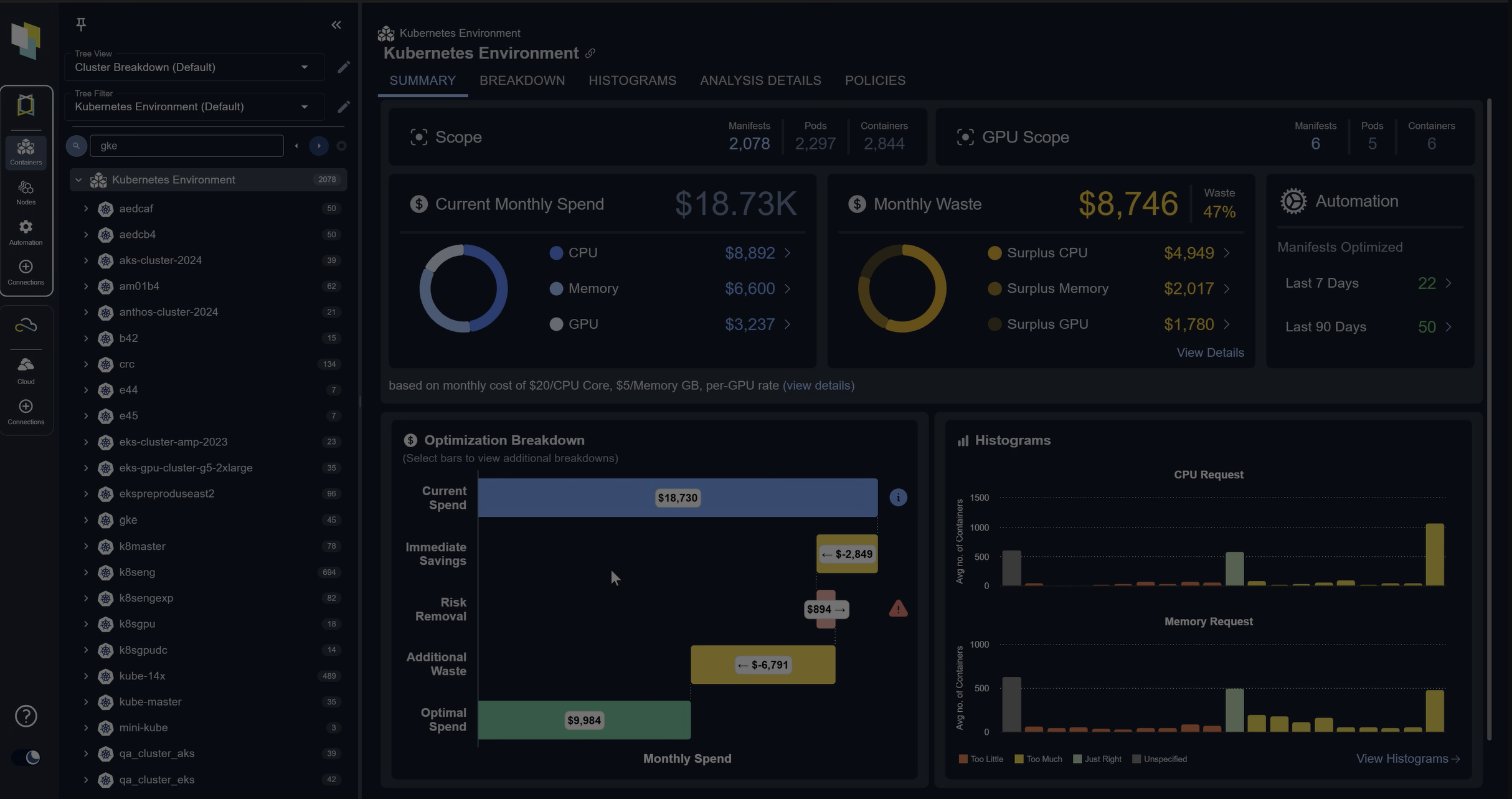

End users drive workload patterns based on their usage habits. For example, workloads are low in the early morning and late night hours, and peak around 10am and 2pm. This behavior, however, is meaningful only in local time: end users in Asia wake up and start using online applications just as users in North America go to sleep. All of this creates an amalgamated pattern of cyclical daily traffic.

There are also weekly patterns that are affected by weekends, holidays, and special events that affect retail shopping sites (for example, Black Friday or Mother’s Day). The implication here is that capacity planning must account for predictable peaks and valleys, but also for unpredictable spikes that may be caused by infrastructure outages or by special events.

Data Aggregation

If you measure your CPU usage to be 30% on average over the last day, for example, this wouldn’t account for the fact that you were using 100% of your CPU during the two peak usage hours. Sizing to that average would cause your application to slow down when performance matters the most. The same phenomenon applies to aggregating sub-second measurements into one minute. If your storage system is hitting max IOPS throughput for only a few seconds, or even milliseconds, database slowness (at a transaction level) is still happening, but it might go undetected when looking at data aggregated by the minute.

This is why the proper practice of performance management requires aggregating time-series data into values beyond the simple mean or average. Measurements such as the median, minimum, maximum, 95th percentile, and 5th percentile must be calculated and stored at a granular level to be meaningful. Let’s use the 95th percentile for illustration. Suppose that you are measuring CPU usage every second and aggregating that data hourly. You would have 3600 data samples; the 95th percentile is the value that is greater than 95% of your 3600 sample points. The 95th percentile helps reveal short-lived CPU spikes that would otherwise be washed out in the process of averaging all 3600 samples into a single number.
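
To make the difference concrete, here is a small sketch that aggregates one hour of per-second CPU samples several ways. The sample data is invented for illustration (a steady 20% load with a five-minute spike to 100%), and the `percentile` helper uses the simple nearest-rank definition, which is only one of several common ways to compute a percentile.

```python
import statistics


def percentile(samples: list[float], pct: int) -> float:
    """Nearest-rank percentile: the smallest sample that is greater than
    or equal to pct percent of all samples (pct is an integer 1..100)."""
    ordered = sorted(samples)
    # Integer ceiling of pct * n / 100, which avoids floating-point rounding.
    rank = max(1, (pct * len(ordered) + 99) // 100)
    return ordered[rank - 1]


# 3600 hypothetical per-second readings: 55 minutes at 20%, 5 minutes at 100%.
samples = [20.0] * 3300 + [100.0] * 300

summary = {
    "mean": statistics.mean(samples),
    "median": statistics.median(samples),
    "p95": percentile(samples, 95),
    "max": max(samples),
}
for name, value in summary.items():
    print(f"{name}: {value:.1f}")
```

In this example the mean and median both suggest a lightly loaded host, while the 95th percentile and the maximum expose the spike. Note that a spike covering less than 5% of the samples would show up only in the maximum, which is one reason to store several aggregates rather than one.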

Unused & Underutilized Resources

You might expect recognizing an idle host to be straightforward, but that is not always the case. Let’s look at a few reasons why. For one, a host may be unused but still accrue CPU and memory usage due to scheduled utility services such as backups, virus scans, and automated software upgrades. In this case, you wouldn’t find an item with zero resource utilization on your weekly capacity reports to use as proof of idleness. Instead, you would be forced to investigate your resources more thoroughly.

On the other hand, you may find hosts with very little CPU and memory usage that are critical to your environment’s proper functioning. An example of this would be a Domain Name System (DNS) server, which translates domain names (such as www.google.com) into IP addresses for your computer to access over the network. A DNS server may have little to no activity for a few days, but it is still essential for accessing the internet when you type www.google.com in your browser. It can’t simply be decommissioned and must be preserved, albeit with minimal computing resources.

Servers on hot standby are another example; high-availability architectures may require one or more servers to be ready to process application workloads in the event of primary server failure. In such a scenario, the servers are idle but should not be decommissioned.

As you can see, distinguishing between unused and underutilized nodes is not always obvious.

3. Finding the right configuration

Once you have analyzed all of the computing resources required by a workload (based on its historic usage patterns), the next step is to recommend a new host instance type and size that would be a better match. However, there are over one million EC2 SKUs alone to choose from when every combination is accounted for. This is because EC2 instances are defined by dozens of attributes, including:

- Family (memory or CPU intensive)

- Type (C5 or T3)

- Size (Small or XL)

- Region

- Availability zone

- Operating System (Linux vs. Windows)

- Licensing type (bring your own Windows license)

- Purchasing plan (on-demand, reserved or spot instances).

New instance types are released on a monthly basis, regularly expanding this list of SKUs. To give you some perspective on its rate of growth, the list of EC2 SKUs provided by AWS via a programmatic API call increased from about 200,000 four years ago to over 1,000,000 today.

Finding the appropriate EC2 instance requires setting constraints based on your specific workload requirements. For example, a workload that requires 20,000 IOPS must be placed on an instance that can support at least that level of IOPS. By consulting the Amazon EBS-optimized instances page, which lists the maximum IOPS throughput rating of each instance type, you will notice that an a1.medium can support 20,000 IOPS, while a c4.large can support only 4,000 IOPS.

As you can see, because of the sheer volume of instance variations, right-sizing an EC2 instance means algorithmically combing through hundreds of thousands of configurations to find those that satisfy a couple of dozen constraints, and then selecting the least expensive configuration that matches all of the criteria.
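
The filter-then-minimize approach can be sketched as follows. This is a toy illustration under stated assumptions: the `InstanceOption` class and `cheapest_match` function are hypothetical, `example.large` is an invented instance type, and only the IOPS ratings for a1.medium and c4.large come from the text above. Every other number, including all prices, is a placeholder rather than real AWS data.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class InstanceOption:
    name: str
    vcpus: int
    memory_gib: float
    max_iops: int
    hourly_price: float  # placeholder value, not a real AWS price


# A three-entry stand-in for a catalog of hundreds of thousands of SKUs.
CATALOG = [
    InstanceOption("a1.medium", 1, 2.0, 20000, 0.03),
    InstanceOption("c4.large", 2, 3.75, 4000, 0.10),
    InstanceOption("example.large", 2, 8.0, 20000, 0.09),  # invented type
]


def cheapest_match(
    catalog: list[InstanceOption],
    min_vcpus: int,
    min_memory_gib: float,
    min_iops: int,
) -> Optional[InstanceOption]:
    """Keep only options that satisfy every constraint, then pick the
    least expensive one. Returns None when nothing qualifies."""
    candidates = [
        option
        for option in catalog
        if option.vcpus >= min_vcpus
        and option.memory_gib >= min_memory_gib
        and option.max_iops >= min_iops
    ]
    return min(candidates, key=lambda o: o.hourly_price, default=None)


choice = cheapest_match(CATALOG, min_vcpus=2, min_memory_gib=4, min_iops=20000)
print(choice.name if choice else "no match")
```

A real recommendation engine would apply many more constraints (region, operating system, licensing, existing reservations) in the same way, as additional conditions in the filter step.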

4. Applying advanced right-sizing techniques

Risk Tolerance

Applications can be categorized by their level of tolerance for risk. The distinction is usually based on the use case. For example, the systems used in a lab environment have the highest tolerance for downtime, while computing resources used in testing have less tolerance for risk. By the same logic, resources supporting second-tier applications (where an outage doesn’t result in an immediate loss of revenue) have even less tolerance for risk, and the infrastructure supporting live customer applications has no tolerance for an outage.

In right-sizing, risk tolerance translates into configurable constraints used in formulating a recommendation. For example, a server supporting a mission-critical application should be constrained to use “maximum” as the method for aggregating its historic utilization data. This means that the recommended instance type and size should be able to support the largest spike in utilization seen in its historic workload. Meanwhile, a system with a high tolerance for risk could be sized based on a high watermark established by the 75th percentile method of data aggregation. This means that we accept some slowness during times of peak usage in exchange for additional savings.

Sophisticated third-party tools allow users to create policies, which act as a contract to periodically adjust the supply of computing resources to match a diverging level of demand, so that capacity engineers can guarantee sufficient resources for critical application workloads without constant manual intervention.
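
One way to picture such a policy is as a mapping from risk tier to aggregation method. The sketch below is illustrative only: the tier names, the `SIZING_POLICY` table, and the sample data are hypothetical. The maximum for mission-critical systems and the 75th percentile for high-tolerance systems follow the text above, while the 95th percentile for the middle tier is an assumption added for the example.

```python
def percentile(samples: list[float], pct: int) -> float:
    """Nearest-rank percentile; pct=100 returns the maximum."""
    ordered = sorted(samples)
    rank = max(1, (pct * len(ordered) + 99) // 100)
    return ordered[rank - 1]


# Hypothetical policy: which percentile of historic utilization each
# risk tier must be sized to support.
SIZING_POLICY = {
    "mission_critical": 100,  # size for the largest historic spike
    "second_tier": 95,        # assumed middle ground, not from the article
    "lab": 75,                # accept slowness at peak for extra savings
}


def sizing_target(samples: list[float], tier: str) -> float:
    """Return the utilization level a recommendation must accommodate."""
    return percentile(samples, SIZING_POLICY[tier])


# 100 invented utilization samples (percent of current capacity).
history = [30.0] * 70 + [60.0] * 20 + [80.0] * 8 + [95.0] * 2

for tier in SIZING_POLICY:
    print(f"{tier}: size for {sizing_target(history, tier):.0f}% utilization")
```

The same history yields three different sizing targets, which is how a single set of measurements can justify a larger instance for a production system and a smaller one for a lab.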

Real-time right-sizing

So far in this article, we have explained the process of collecting and analyzing relevant metrics, as well as selecting the right type and size of resource based on that analysis. Typically, this process is repeated on a monthly basis to ensure that the environment is not grossly over- or under-provisioned. However, the ultimate promise of public cloud as a computing utility platform is to charge only for what you use. This requires computing resources to be allocated in real time, based on workload needs that change over seconds or minutes.

Real-time resource allocation requires applications to be either conceived or refactored based on an architecture that allows the distribution of workload across a cluster of computing nodes (whether virtual machines or containers). This allows the cluster to scale “horizontally” by adding more nodes during peak usage and removing them when they are no longer required. As an example, AWS offers auto-scaling for a range of its cloud services, from EC2 to container services and database technologies.

This more sophisticated approach to resource allocation has its own engineering challenges. For example, cloud administrators must still choose the appropriate type and size for each node in the auto-scaling cluster to match the application workload requirements. The administrators are also responsible for configuring the auto-scaling rules that increase and decrease the node count in the cluster based on a defined set of criteria. Another example is the need for pre-scaling the cluster based on historic seasonal workload patterns to mitigate the risk of resources not being available in the desired AWS region during peak usage hours.
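
As a rough illustration of what an auto-scaling rule computes, the sketch below scales the node count in proportion to how far observed utilization is from a target, then clamps the result to configured bounds. This is a generic proportional rule written for this example, not the algorithm AWS uses; the function name and all parameters are hypothetical.

```python
import math


def desired_node_count(
    current_nodes: int,
    observed_util_pct: int,
    target_util_pct: int,
    min_nodes: int,
    max_nodes: int,
) -> int:
    """Scale the cluster so average utilization moves toward the target.

    The raw answer is proportional: if utilization is 1.5x the target,
    ask for 1.5x the nodes. The result is then clamped to the allowed range.
    """
    raw = math.ceil(current_nodes * observed_util_pct / target_util_pct)
    return max(min_nodes, min(max_nodes, raw))


# Peak traffic: 4 nodes at 90% against a 60% target calls for scaling out.
scale_out = desired_node_count(4, 90, 60, min_nodes=2, max_nodes=12)
# Quiet period: 4 nodes at 15% would shrink to 1, but the floor is 2.
scale_in = desired_node_count(4, 15, 60, min_nodes=2, max_nodes=12)
print(scale_out, scale_in)
```

In this framing, pre-scaling ahead of a known seasonal peak amounts to temporarily raising `min_nodes` before the peak arrives, so capacity is already in place rather than requested at the last moment.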

Layers of scaling

Up to this point, our examples have mostly focused on adding CPU, memory, IOPS, or networking bandwidth to an EC2 instance, which is a virtual machine. However, popular newer technologies such as Kubernetes are designed to host containers with multiple layers of scalable computing resources. For instance, a Kubernetes cluster has not only nodes (virtual machines), but also pods (container groups hosted on nodes), and finally containers that are provisioned within the pods. This means that administrators can benefit from dynamic allocation of containers as long as the resource requests and limits are configured appropriately in advance. The result is a more automated scaling of resources at the cost of some additional complexity. We have created a separate technical educational guide to help administrators better understand the tooling and best practices necessary to optimize Kubernetes clusters.

Right-sizing serverless computing

Cloud providers have taken over the ownership and management of data centers, server hardware, virtual machines, and containers (for the sake of simplicity, you can think of containers as lightweight virtual machines). More recently, they have introduced serverless computing services.

Serverless computing services work by handing your code to your cloud provider to run; you are charged a fee each time the code runs. A simplistic conclusion would be that these services are always sized correctly and that right-sizing is no longer relevant. However, on closer inspection, serverless services such as AWS Lambda charge by the amount of requested memory and the runtime duration. This means that you must decide how much memory your code requires each time it is invoked, and you are charged based on how long the function runs (measured in milliseconds). The more memory you request, the more CPU is proportionately and automatically assigned by AWS.

A typical initial saving strategy is to identify the Lambda functions that are not consuming all of their provisioned memory and remove the excess capacity. This may seem logical on the surface. However, as the memory allocation is reduced, the CPU allocation decreases proportionately as well, so the function takes longer to run and can end up costing more. In the case of a CPU-intensive application, as counter-intuitive as it may seem, optimal spending may be achieved by over-allocating memory (to gain more CPU power, which in turn reduces the runtime). Finding the right balance requires an algorithmic analysis to identify the memory allocation that allows the code to run fast without being over-provisioned.
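
The trade-off can be shown with a small cost model. This is a sketch under stated assumptions: the per-GB-second rate is an assumed figure that should be checked against current AWS pricing, per-request fees are ignored, and the duration measurements are invented to resemble a CPU-bound function rather than taken from a real workload.

```python
# Assumed compute rate in USD per GB-second; verify against current pricing.
PRICE_PER_GB_SECOND = 0.0000166667


def invocation_cost(
    memory_mb: int,
    duration_ms: float,
    price_per_gb_second: float = PRICE_PER_GB_SECOND,
) -> float:
    """Compute cost of one invocation: memory (GB) x duration (s) x rate."""
    return (memory_mb / 1024) * (duration_ms / 1000) * price_per_gb_second


# Hypothetical measurements: memory setting (MB) -> average duration (ms).
# More memory brings more CPU, so the function finishes faster, up to a point.
measurements = {128: 2400, 256: 1100, 512: 500, 1024: 260, 2048: 250}

costs = {mem: invocation_cost(mem, ms) for mem, ms in measurements.items()}
best_memory = min(costs, key=costs.get)

for mem, cost in costs.items():
    print(f"{mem:>5} MB: ${cost * 1_000_000:.2f} per million invocations")
print(f"cheapest setting: {best_memory} MB")
```

With these invented numbers, the smallest memory setting is not the cheapest, because the longer runtime outweighs the lower memory charge. The largest setting is not the cheapest either, since the runtime stops improving while the memory charge keeps growing.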

Final Thoughts

The topics in this guide are designed to help FinOps practitioners become familiar with the complexities of cost optimization in public cloud computing, beginning with a focus on the AWS platform due to its popularity. All of the articles outlining our methodology are part of a continuous motion that starts with organizing your resources, which leads to improving your purchasing plans, which in turn leads to reconciling your forecasted budgets against your extended team’s monthly spending.

That being said, the most complex step in this journey of cost optimization is resource optimization, which requires specialized technologies and processes to ensure a successful outcome. In the next article, we introduce the best practices we’ve learned by collaborating with system administrators during hundreds of engagements over the years.

FAQs

What is FinOps cloud optimization?

FinOps cloud optimization is the practice of aligning financial accountability with cloud resource usage. It ensures that teams balance performance, cost, and efficiency by rightsizing workloads, automating scaling, and monitoring spend.

What are the main challenges of FinOps cloud management?

The biggest challenges include identifying what resources to measure, interpreting complex usage patterns, finding the right configurations among thousands of options, and applying advanced techniques like auto-scaling or serverless optimization.

How do FinOps practitioners identify waste in the cloud?

Waste is typically classified as unused or underutilized resources. FinOps practitioners analyze metrics such as CPU, memory, IOPS, and network throughput to determine whether resources are over-provisioned or idle.

How does automation support FinOps cloud practices?

Automation tools analyze workload metrics, apply rightsizing recommendations, and manage scaling in real time. This reduces manual effort, prevents errors, and ensures resources are continuously optimized for cost and performance.

Is serverless computing automatically optimized in the FinOps cloud model?

Not always. In AWS Lambda, for example, cost depends on memory and runtime. Over- or under-allocating memory affects CPU performance and execution time. FinOps cloud strategies use algorithmic analysis to find the optimal balance.
