So you’ve decided to go with a hybrid cloud approach, splitting workloads among on-premises VMware or Hyper-V virtual infrastructure and public cloud platforms. Do it right and you get the benefits you’re looking for: greater agility without the burden of managing servers. But get the mix wrong and you could end up with a lot of expensive unused capacity on your data center floors and excessive OPEX in the cloud (AWS, Azure, IBM Cloud) or, worse, application performance and compliance issues.

The fact is, figuring out the best place to host your applications and their workloads in a hybrid cloud environment is really complex. There are a lot of business and technical configuration requirements to consider, alongside the detailed workload patterns. Certain workload types will cost you more, while other workload types aren’t really suited for public cloud. With public cloud “t-shirt” sizing models, instances are generally chosen to meet peak utilization. So you may pay for a “Large” but only use that capacity occasionally, with no ability to share the spare resources with other workloads. If you also consider bare metal options, such as SoftLayer Bare Metal or the impending release of VMware on AWS, then resource sharing between workloads becomes possible (through overcommit), further complicating the decision. Going with dedicated bare metal cloud options gives you greater flexibility, but you’ll need to carefully place VMs to dovetail workloads and make the most cost-efficient use of the capacity you’re paying for. That requires a detailed understanding of each application’s requirements and historical workload patterns, which most IT organizations just don’t have.
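To make the difference between peak-sized instances and shared capacity concrete, here is a minimal sketch. The workload names and demand figures are invented for illustration; the point is only that dedicated instances must cover the sum of each workload's peak, while shared capacity only has to cover the peak of the combined demand.

```python
# Illustrative only: the demand numbers below are made up, not measured data.

def sum_of_peaks(workloads):
    """Capacity needed when each workload gets its own peak-sized instance."""
    return sum(max(demand) for demand in workloads)


def peak_of_sum(workloads):
    """Capacity needed when workloads share one pool of resources."""
    return max(sum(hour) for hour in zip(*workloads))


# Hypothetical vCPU demand per time slot for two workloads whose
# busy periods do not overlap (they "dovetail").
day_batch = [2, 2, 8, 8, 2, 2]
night_batch = [8, 8, 2, 2, 2, 2]
workloads = [day_batch, night_batch]

dedicated = sum_of_peaks(workloads)  # each workload sized for its own peak
shared = peak_of_sum(workloads)      # both workloads on shared capacity

print(f"dedicated instances: {dedicated} vCPUs, shared capacity: {shared} vCPUs")
```

The gap between the two numbers is the capacity you pay for but rarely use when every workload is sized in isolation. It shrinks when workloads peak at the same time, which is why the historical patterns matter.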

How can you navigate this complexity and chart a path to hybrid cloud that maximizes your resources while minimizing risk?

Make like a consultant and follow a proven methodology

There is value in looking at what global consultancies do for cloud transformation and learning from those standardized methodologies. The ones based on the right analytics will enable you to optimize hosting decisions and resource allocations in ways that can save literally hundreds of thousands to millions of dollars annually. This end-to-end process consists of four key steps that build on each other:

1. Candidate Qualification

You need to start with automated data collection that builds an in-depth understanding of the key factors behind resource allocation decisions: utilization and workload patterns over time, technical requirements for each application, and requirements for specific types of business data, such as data governed by SLAs or security policies. Analyzing these factors is crucial for characterizing workload profiles and thereby determining which workloads are good candidates for specific clouds and which may be best kept on-premises.

Trying to perform this analysis manually with spreadsheets would be incredibly time-consuming and error-prone. Leveraging analytics purpose-built for this task saves time and effort while ensuring accuracy.
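As a rough sketch of what a qualification rule might look like, the snippet below classifies a workload from its utilization history and a data-policy flag. The `Workload` fields, the `qualify` function, the 1.5 peak-to-average threshold and the three outcome labels are all assumptions made for illustration; a real analysis would weigh many more technical and business factors.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Workload:
    name: str
    cpu_samples: list       # CPU utilization (%) sampled over time
    restricted_data: bool   # hypothetical flag: policy requires on-premises hosting


def qualify(workload, steady_threshold=1.5):
    """Return a coarse hosting recommendation (illustrative rule only)."""
    if workload.restricted_data:
        return "on-premises"
    average = mean(workload.cpu_samples)
    if average == 0:
        # Idle workload: no meaningful ratio, treat it as steady.
        return "public cloud instance"
    peak_to_average = max(workload.cpu_samples) / average
    if peak_to_average <= steady_threshold:
        # Steady demand wastes little of a peak-sized instance.
        return "public cloud instance"
    # Spiky demand leaves a peak-sized instance mostly idle.
    return "shared capacity"


for w in [
    Workload("payroll", [10, 10, 10], restricted_data=True),
    Workload("web", [40, 50, 45], restricted_data=False),
    Workload("batch", [5, 5, 90], restricted_data=False),
]:
    print(w.name, "->", qualify(w))
```

Even a toy rule like this shows why the data collection has to be automated: the answer depends on the shape of each workload's history, not on a single average figure.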

Read more on Candidate Qualification here.

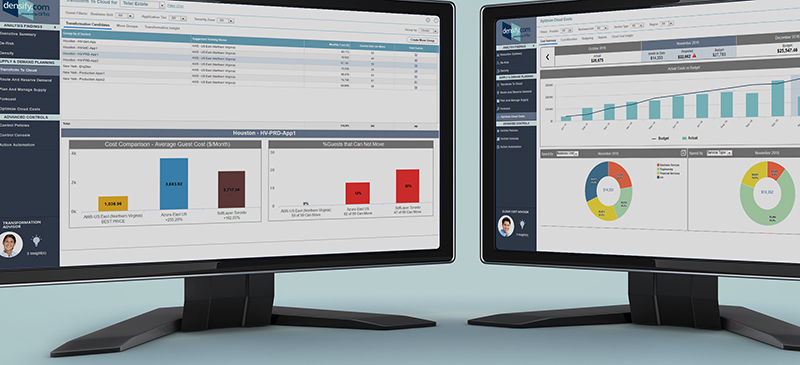

2. Assessment of Migration Scenarios

Based on candidate qualification in the previous step, you then assess which specific cloud environments (IaaS, cloud containers or bare metal) are best for your application groups. This involves analyzing a range of scenarios, including not only different hosting providers but also different models, such as public cloud vs. containers vs. bare metal vs. on-premises. Once the optimal hosting environments are determined for your various app groups, you can start defining “move groups” that take into account all of the detailed requirements analyzed in the first step, logically grouping workloads that should move together during the actual execution phase. This step also involves creating a detailed budget, placement and resource allocation plan that sequences migration waves in ways that make the most sense from a technical, business and budget perspective.

Making use of analytics to assess the full range of scenarios in light of specific application and data requirements is essential for precisely planning workload placements based on complex sizing and cost considerations.
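One simple way to think about move groups is as clusters of workloads that depend on each other and therefore should migrate in the same wave. The sketch below assumes you already have a list of dependency pairs (which the source does not specify) and groups workloads by walking those links. The function name `move_groups` and the sample workload names are hypothetical.

```python
from collections import defaultdict


def move_groups(workloads, dependencies):
    """Group workloads that are linked, directly or indirectly, by a dependency."""
    graph = defaultdict(set)
    for a, b in dependencies:
        graph[a].add(b)
        graph[b].add(a)

    seen = set()
    groups = []
    for start in workloads:
        if start in seen:
            continue
        # Depth-first walk collecting everything reachable from `start`.
        seen.add(start)
        stack = [start]
        group = []
        while stack:
            node = stack.pop()
            group.append(node)
            for neighbor in graph[node]:
                if neighbor not in seen:
                    seen.add(neighbor)
                    stack.append(neighbor)
        groups.append(sorted(group))
    return groups


# Hypothetical inventory: a three-tier app plus two standalone workloads.
inventory = ["web", "app", "db", "reports", "cache"]
links = [("web", "app"), ("app", "db")]
print(move_groups(inventory, links))
```

In practice, grouping would also need to respect the business, budget and technical constraints gathered during qualification; dependency links are only one input.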

3. Execution of the Plan

Now you’re ready to put your migration plan into action. It is critical that this step go beyond executing the actual move to the cloud and include assessing workloads after they enter their target environments to make sure they “land” safely. Here again, analytics play a key role, automatically fine-tuning capacity allocation based on how the workloads actually behave. Analytics can also be used to predict capacity shortfalls or free capacity down the road, which is critical insight for cloud infrastructure budget planning.
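As an illustration of the prediction idea, the sketch below fits a straight line to past usage and estimates how many periods remain before capacity is reached. A linear trend is an assumption chosen for simplicity, not a description of any particular analytics product, and the function name and sample figures are made up.

```python
def periods_until_full(usage, capacity):
    """Estimate periods left before a linear usage trend reaches capacity.

    Returns None when usage is flat or falling (no shortfall predicted).
    """
    n = len(usage)
    if n < 2:
        raise ValueError("need at least two usage samples to fit a trend")

    xs = range(n)
    x_mean = sum(xs) / n
    y_mean = sum(usage) / n

    # Ordinary least-squares slope and intercept.
    denominator = sum((x - x_mean) ** 2 for x in xs)
    slope = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, usage)) / denominator
    intercept = y_mean - slope * x_mean

    if slope <= 0:
        return None

    period_full = (capacity - intercept) / slope
    # Measure from the most recent sample; never report a negative horizon.
    return max(0.0, period_full - (n - 1))


# Hypothetical monthly vCPU usage against a 160 vCPU allocation.
monthly_usage = [100, 110, 120, 130]
print(periods_until_full(monthly_usage, capacity=160))
```

Real workloads rarely grow in a straight line, so treat an estimate like this as a prompt to look closer rather than as a budget figure.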

4. Continuous Optimization

The migration is a success and now you’re done, right? Not quite. Best migration practices include continuous analysis and “densification” to ensure you’re always making optimal use of on-premises and cloud capacity while effectively managing application risk. Often overlooked are bare metal cloud placements, where you have the flexibility to reallocate workloads to any unused capacity. These environments, which will increase in popularity with the introduction of VMware on AWS later this year, require an analytics platform that continuously and automatically places workloads to achieve the greatest cost efficiency, while making sure applications don’t conflict with each other.
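A toy version of densification can be sketched as a packing problem: place each workload on the first host where the combined demand never exceeds capacity in any time slot. The first-fit heuristic, the function name `place` and the sample demand profiles are assumptions for illustration; production placement engines consider far more than one resource and one capacity limit.

```python
def place(workloads, host_capacity):
    """First-fit placement using per-slot demand, largest peak first.

    `workloads` maps a name to a list of demand values, one per time slot.
    Returns a list of hosts, each a list of workload names.
    """
    hosts = []  # each host: {"names": [...], "load": [...]}
    ordered = sorted(workloads.items(), key=lambda item: max(item[1]), reverse=True)

    for name, demand in ordered:
        if max(demand) > host_capacity:
            raise ValueError(f"{name} does not fit on any single host")
        for host in hosts:
            combined = [a + b for a, b in zip(host["load"], demand)]
            if max(combined) <= host_capacity:
                host["names"].append(name)
                host["load"] = combined
                break
        else:
            # No existing host has room in every slot: open a new one.
            hosts.append({"names": [name], "load": list(demand)})

    return [host["names"] for host in hosts]


# Hypothetical demand profiles: two workloads dovetail, one is steady.
profiles = {
    "day": [8, 8, 2, 2],
    "night": [2, 2, 8, 8],
    "steady": [5, 5, 5, 5],
}
print(place(profiles, host_capacity=10))
```

Because workload behavior drifts over time, a placement that is dense today may conflict tomorrow, which is why the analysis has to run continuously rather than once.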

Following this four-step transformation process, leveraging analytics throughout, and making decisions based on facts derived from deep analysis will enable you to keep your job while also driving the greatest cost benefits with the lowest risk. As virtualization planning taught us, spreadsheets and homegrown models simply aren’t up to the task. It’s also important to ask your service provider exactly how they are analyzing all the options out there, so you can be sure you are getting the best possible answers.

In subsequent posts, we’ll dive into each of these steps in a bit more detail to explore how the right analytical approach is crucial for successful hybrid cloud transformation.