For those of you who aren’t familiar with AWS CodePipeline, it’s a continuous integration and continuous delivery (CI/CD) framework that enables application development teams to deliver code updates more frequently and reliably. You may have also heard it being called a CI/CD or DevOps pipeline.

These pipelines have traditionally been used to deploy the components of an application whenever new code is checked in. Isn’t the underlying infrastructure just as important and subject to change with every iteration of new code? Well… maybe not with every iteration, but infrastructure changes can occur, especially if your FinOps strategy includes continuous optimization.

In my previous article, we looked at how you can build the technical components of a continuous optimization strategy that runs in parallel with your CI/CD pipeline. In this article, we are going to look at how to approach incorporating continuous optimization of infrastructure directly into a CI/CD pipeline. Once implemented, whenever code is checked in, the pipeline will auto-adjust infrastructure resource specifications (if needed). The adjustments should be calculated based on demand by analyzing various application and infrastructure metrics.

Typical Implementation with No Optimization

| Pipeline Component | Role |
|---|---|
| AWS CodeCommit | A managed source control service that hosts secure Git-based repositories. Pushing changes to your repository can trigger the next stage of your CI/CD pipeline. |
| AWS CodeBuild | A managed continuous integration (CI) service that compiles source code, runs tests and produces software packages that are ready to deploy. |
| AWS CodeDeploy | A managed deployment service that automates application deployments to compute services such as Amazon EC2, AWS Lambda and on-premises servers. |

Typically, the infrastructure to support an application is deployed either manually or using infrastructure as code (IaC). IaC is a group of technologies that enable you to deploy, modify or destroy infrastructure through declarative code, which can then be source controlled. Examples include AWS CloudFormation, Azure Resource Manager (ARM) templates, Google Cloud Deployment Manager and Terraform. The use of IaC is widespread and considered a best practice for managing infrastructure, particularly in public cloud.

Once the infrastructure is available, then the pipeline is configured to continuously deploy new iterations of code as it becomes available. At no point is the pipeline adjusting the infrastructure for size based on utilization and therefore as the application evolves it will eventually end up in an over-provisioned or under-provisioned state. This is simply because of the inevitability of demand pattern changes over time.

Some of you are probably thinking, well the developer can always adjust the resource spec in the IaC template whenever adjustments are needed. This isn’t as easy as it sounds nor is it practical for large scale environments for a multitude of reasons. It also distracts the developer from their core responsibility of delivering products to their customers.

Finding a way to continuously adjust the infrastructure resources according to demand over the life of the application guarantees no compromises on performance while ensuring no overspend.

Let’s see if we can extend the capability of our basic pipeline:

Design Considerations

Infrastructure as Code (IaC)

Any sort of automated optimization is going to require integration to the IaC technology that was used to deploy the infrastructure. In fact, this IaC will be directly responsible for implementing the change, thereby ensuring that no infrastructure drift is introduced.

So, when the pipeline is executed, it will invoke the IaC at some point to adjust the infrastructure according to a new set of specifications. I’m sure a lot of you know how to accomplish this. With AWS CodePipeline and AWS CloudFormation it’s particularly straightforward, as CodePipeline supports CloudFormation natively as a deploy action.

We will use CloudFormation as part of our implementation for this article.

Parameter Repository

Specific parameters within the CloudFormation template control resource allocation. For our solution, these parameters are considered transient. Manually modifying the CloudFormation template (CFT) whenever a transient parameter changes isn’t scalable or suitable for an automated approach, so we turn to a parameter repository (key-value store) to hold versions of these parameters. CloudFormation will automatically reference these parameters within Parameter Store at runtime.

We will use AWS Systems Manager Parameter Store as part of our implementation for this article.

Note that keeping such parameters in a parameter repository, rather than in the template itself, is considered a general best practice.

Identifying Optimal Resource Specs

You need an automated mechanism to identify optimal resource specs for the infrastructure your application is running on. Remember, precision is king! Ideally these specifications should already be available within your parameter repository when new code is checked in.

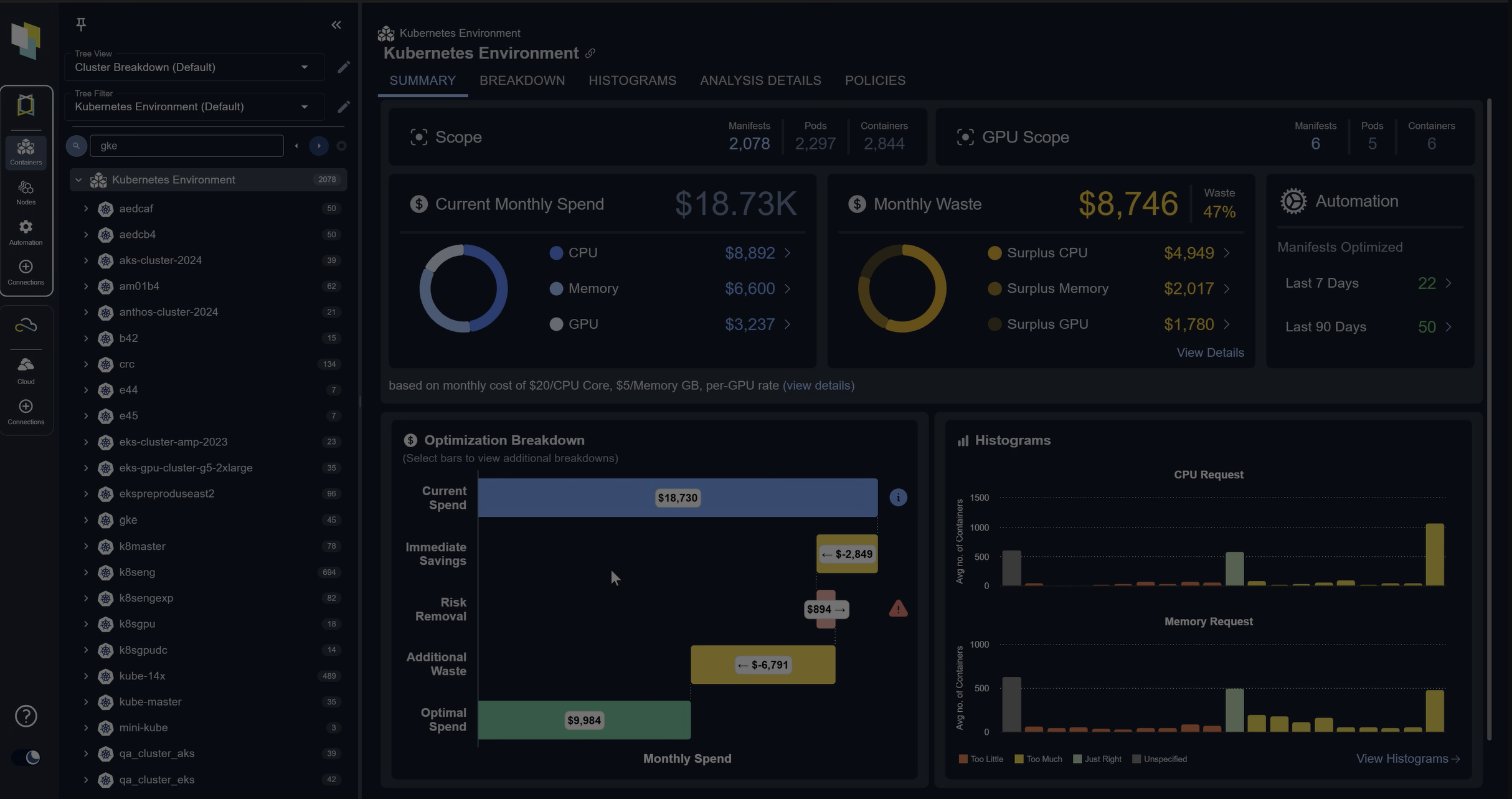

For our example we will use Densify as our optimization engine.

CloudFormation Access to Insights

Before CloudFormation can implement any changes, it needs to know what changes to implement. We already know that this information is stored in our parameter repo, as described above. So, when is this information extracted and when is it made available to CloudFormation?

Here are a few approaches that we can take:

| Approach | Description | Advantages | Disadvantages |
|---|---|---|---|
| Pass-In-Key | The CI solution needs to identify the specific key holding the optimal resource specs for each resource. It can then invoke CloudFormation and pass in those keys. CloudFormation would subsequently perform the lookup. | No need for a CloudFormation lookup plugin. | |
| Pass-In-Value | Similar to Pass-In-Key, except the CI will also perform the parameter repo lookup to extract the optimal value. These values are passed into CloudFormation at invocation. | | |
| Hard-Coded Key | The parameter keys can be hard coded directly into the CloudFormation template. | No need for a CloudFormation lookup plugin. | |
| Direct Reference | The CI solution can invoke CloudFormation without providing any input parameters. CloudFormation will identify the specific keys and perform the lookup. | | Requires a CloudFormation lookup plugin. |

My personal preference is direct reference as this approach is simpler to implement (provided you have the lookup function) and will require no customization to the continuous integration (CI) stack.
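To make the difference between the first two approaches concrete, here is a minimal Python sketch of the parameter list a CI step might hand to CloudFormation in each case. The function names and the `lookup` callable are hypothetical; the `ParameterKey`/`ParameterValue` shape is the one CloudFormation's stack APIs accept. For Pass-In-Key, this assumes the template declares the parameter with an SSM parameter type so that CloudFormation resolves the key itself.

```python
from typing import Callable, Dict, List


def pass_in_key(keys: Dict[str, str]) -> List[Dict[str, str]]:
    """Pass-In-Key: the CI hands over the key; CloudFormation does the lookup."""
    return [
        {"ParameterKey": name, "ParameterValue": key}
        for name, key in keys.items()
    ]


def pass_in_value(
    keys: Dict[str, str], lookup: Callable[[str], str]
) -> List[Dict[str, str]]:
    """Pass-In-Value: the CI resolves each key itself and passes the value."""
    return [
        {"ParameterKey": name, "ParameterValue": lookup(key)}
        for name, key in keys.items()
    ]
```

With direct reference, neither function is needed: the CI passes no parameters at all, which is why that approach requires no customization of the CI stack.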

Putting it Together

In the diagram below, we can see that once the new code is built and ready to deploy, the pipeline executes a sequential two-stage process.

- Prior to deploying the app, call CloudFormation to implement any changes to the infrastructure resources. This is analogous to triggering a CloudFormation stack update or a Terraform apply. If any issues occur, CloudFormation should roll back the changes.

- Execute any existing application deploy steps.

The key to this direct reference solution is to have CloudFormation perform all the work. The CI stack simply needs to invoke CloudFormation with the correct template for the application being deployed.
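The two-stage sequence can be sketched in Python as follows. This is an illustration under stated assumptions, not the pipeline's actual implementation: `cfn` is assumed to behave like a boto3 CloudFormation client, and `release` and `deploy_app` are hypothetical names standing in for your existing deploy step.

```python
from typing import Any, Callable


def update_infrastructure(cfn: Any, stack_name: str, template_body: str) -> None:
    """Stage 1: ask CloudFormation to update the stack, then wait for it.

    No input parameters are passed; the template resolves its own values.
    Note: CloudFormation rejects the call with a "No updates are to be
    performed" error when nothing changed, which a real pipeline would
    need to treat as success.
    """
    cfn.update_stack(StackName=stack_name, TemplateBody=template_body)
    # The waiter is expected to raise if the update fails or is rolled back,
    # which stops the release before the application deploy.
    cfn.get_waiter("stack_update_complete").wait(StackName=stack_name)


def release(
    cfn: Any,
    stack_name: str,
    template_body: str,
    deploy_app: Callable[[], None],
) -> None:
    """Run the infrastructure update first, then the existing deploy steps."""
    update_infrastructure(cfn, stack_name, template_body)
    deploy_app()
```

Passing the client in as an argument keeps the sketch free of credentials and makes the ordering easy to exercise with a stub.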

Let’s look at how we configure CloudFormation to action the necessary steps.

LastUpdatedTimestamp

We need to introduce one input parameter into every CloudFormation template. This parameter controls whether the CloudFormation stack is able to execute an update by enabling the creation of a change set. Because the changes are extracted at runtime by the lookup function, they are not visible in the template itself, so CloudFormation is not aware that any changes exist until it runs. The LastUpdatedTimestamp value, as the name suggests, would be different from the previous run; CloudFormation would see that as a change and subsequently execute an update.

    LastUpdatedTimestamp:
      Type: AWS::SSM::Parameter::Value<String>
      Default: '/densify/config/lastUpdatedTimestamp'

The Lookup Plugin

This plugin is implemented as a Lambda function and defined in the template as a custom resource. It currently supports the extraction of two types of specs:

- EC2 Instance Type

- /densify/iaas/ec2/instance_id/instance_name/instancetype

- RDS DBInstanceClass

- /densify/iaas/rds/DBInstanceIdentifier/DBName/instancetype
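As a small illustration of the key layout above, the following Python helpers build the two paths from their components. The function names are hypothetical; only the path structure comes from the list above.

```python
def ec2_instance_type_key(instance_id: str, instance_name: str) -> str:
    """Parameter Store key holding the recommended EC2 instance type."""
    return f"/densify/iaas/ec2/{instance_id}/{instance_name}/instancetype"


def rds_instance_class_key(db_instance_identifier: str, db_name: str) -> str:
    """Parameter Store key holding the recommended RDS instance class."""
    return f"/densify/iaas/rds/{db_instance_identifier}/{db_name}/instancetype"
```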

When CloudFormation is executed, the plugin is invoked to perform an extraction of these parameters for the relevant instances within parameter store. They are made available to the stack as attributes that can later be referenced.

What if the parameter repo is down or the parameters are not populated? In this case the plugin will perform a lookup of the specific specs on the running instances and return those.

What if there are no running instances because this is the first time this template is executing? In this case the plugin will return the default settings as described in its properties.
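The fallback order described above (parameter store first, then the running instance, then the default) can be sketched as a small Python function. This is a simplified illustration rather than the plugin's actual code: the two lookups are passed in as hypothetical callables so the ordering can be shown without any AWS calls.

```python
from typing import Callable, Optional

# A lookup returns the spec if it can find one, otherwise None.
Lookup = Callable[[], Optional[str]]


def resolve_spec(from_store: Lookup, from_running: Lookup, default: str) -> str:
    """Return the first available spec: store, running instance, then default."""
    for lookup in (from_store, from_running):
        try:
            value = lookup()
        except Exception:
            # An unreachable source is treated the same as a missing value.
            value = None
        if value:
            return value
    return default


if __name__ == "__main__":
    def store_down() -> Optional[str]:
        raise ConnectionError("parameter repo unavailable")

    # The store is down, so the running instance's spec is used.
    print(resolve_spec(store_down, lambda: "m5.large", "c5.9xlarge"))  # m5.large
```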

Let’s look at some of the properties of this plugin.

- ServiceToken – This input informs CloudFormation which Lambda function is supporting this plugin.

- StackName – The Lambda function will need to read the CloudFormation stack properties and therefore we pass in the name to help with the lookup.

- LastRefreshTime – This is set to the LastUpdatedTimestamp parameter that we introduced above. As this will be different whenever an update exists, CloudFormation will see that as a change and execute the stack.

- The remaining parameters are default settings for our resources. You can set them as ‘LogicalResourceName’.’PropertyToOptimize’: <default_value>.

    DensifyLookupPlugin:
      Type: Custom::DensifyLookupPlugin
      Properties:
        ServiceToken: !Join [ ":", [ 'arn:aws:lambda', !Ref 'AWS::Region', !Ref 'AWS::AccountId', 'function:DensifyResourceProvider' ] ]
        StackName: !Ref 'AWS::StackName'
        LastRefreshTime: !Ref 'LastUpdatedTimestamp'
        ComputeInstance.InstanceType: 'c5.9xlarge'
        DatabaseInstance.DBInstanceClass: 'db.m5.large'

Resource Updates

For each resource, we need to update the specific parameter we intend to optimize:

- EC2 Instance

- InstanceType: !GetAtt DensifyLookupPlugin.ComputeInstance.InstanceType

- RDS Instance

- DBInstanceClass: !GetAtt DensifyLookupPlugin.DatabaseInstance.DBInstanceClass

- Format

- <LogicalNameOfPlugin>.<LogicalNameOfResource>.<PropertyToOptimize>
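For `!GetAtt` to resolve these names, the Lambda function behind the custom resource has to return them as keys in the `Data` section of its response. The Python sketch below shows one way that could look; it is an assumption about how such a plugin might be written, not Densify's actual code. The helper names and the `densify-lookup` fallback ID are hypothetical, while the response fields follow the CloudFormation custom resource protocol.

```python
from typing import Any, Dict

# Properties the plugin consumes itself rather than treating as defaults.
RESERVED = {"ServiceToken", "StackName", "LastRefreshTime"}


def extract_defaults(properties: Dict[str, Any]) -> Dict[str, str]:
    """Keep only the 'LogicalResourceName.PropertyToOptimize' entries."""
    return {
        key: str(value)
        for key, value in properties.items()
        if key not in RESERVED and "." in key
    }


def build_response(event: Dict[str, Any], data: Dict[str, str]) -> Dict[str, Any]:
    """Build the JSON body a custom resource sends back to CloudFormation."""
    return {
        "Status": "SUCCESS",
        # Reuse the existing ID on updates; the fallback value is hypothetical.
        "PhysicalResourceId": event.get("PhysicalResourceId", "densify-lookup"),
        "StackId": event["StackId"],
        "RequestId": event["RequestId"],
        "LogicalResourceId": event["LogicalResourceId"],
        # Each key here becomes an attribute readable through !GetAtt.
        "Data": data,
    }
```

A real handler would replace each default with the value it looked up before sending the body to the pre-signed `ResponseURL` in the event.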

Full Sample Template

    AWSTemplateFormatVersion: '2010-09-09'
    Description: >-
      This CloudFormation template deploys a simple EC2 instance and an RDS database instance.
    Metadata:
      Version: 1.0
    Parameters:
      LastUpdatedTimestamp:
        Type: AWS::SSM::Parameter::Value<String>
        Default: '/densify/config/lastUpdatedTimestamp'
    Resources:
      ComputeInstance:
        Type: AWS::EC2::Instance
        Properties:
          InstanceType: !GetAtt DensifyLookupPlugin.ComputeInstance.InstanceType
          IamInstanceProfile: 'CodeDeployDemo-EC2-Instance-Profile'
          SecurityGroups:
            - !Ref 'InstanceSecurityGroup'
          KeyName: EC2KeyPair
          ImageId: ami-02b5cd5aa444bee23
          Tags:
            - Key: Name
              Value: WebServer
            - Key: Application
              Value: DevOpsPipelineDemo1
      DatabaseInstance:
        Type: "AWS::RDS::DBInstance"
        Properties:
          DBInstanceClass: !GetAtt DensifyLookupPlugin.DatabaseInstance.DBInstanceClass
          Tags:
            - Key: Name
              Value: Database
            - Key: Application
              Value: DevOpsPipelineDemo1
      InstanceSecurityGroup:
        Type: AWS::EC2::SecurityGroup
        Properties:
          GroupDescription: Full Access
          SecurityGroupIngress:
            - IpProtocol: -1
              FromPort: -1
              ToPort: -1
              CidrIp: '0.0.0.0/0'
      ElasticIP:
        Type: AWS::EC2::EIP
        Properties:
          InstanceId: !Ref 'ComputeInstance'
      DensifyLookupPlugin:
        Type: Custom::DensifyLookupPlugin
        Properties:
          ServiceToken: !Join [ ":", [ 'arn:aws:lambda', !Ref 'AWS::Region', !Ref 'AWS::AccountId', 'function:DensifyResourceProvider' ] ]
          StackName: !Ref 'AWS::StackName'
          LastRefreshTime: !Ref 'LastUpdatedTimestamp'
          ComputeInstance.InstanceType: 'c5.9xlarge'
          DatabaseInstance.DBInstanceClass: 'db.m5.large'

It’s worth noting that resource changes to infrastructure services typically involve a restart of the service. This is especially true for compute services like EC2. Whether you’re changing instance size or instance family, a restart is required. With that said, it’s important to ensure any service daemons, applications, etc. can auto-start on boot-up.

Conclusion

We covered an implementation paradigm where we tie infrastructure management directly to the CI/CD pipeline and therefore whenever new code is checked in the infrastructure is checked and optimized (if needed). To help illustrate, we showed a potential implementation using a mix of AWS technologies and Densify. However, the market is vast and there are countless different tools that can be used to implement this solution. Depending on which set of tools you currently have in your ecosystem, you can use the approach described in this article to build continuous optimization directly into your pipeline.