Cloud Computing Brings New Benefits & Challenges to Infrastructure Management

Cloud computing has fundamentally changed the way enterprises think about information technology infrastructure. A decade ago, IT professionals would make projections about the number and types of servers they would need for the next several months to a year. In some cases, they’d plan for average load, while in other scenarios they would buy for peak capacity to ensure there were sufficient resources when needed.

Cloud computing comes in a few broad forms: infrastructure as a service (IaaS), platform as a service (PaaS), and software as a service (SaaS):

- IaaS is the form of cloud computing that provides virtual machines (VMs), containers, storage, and supporting networking to users who need maximum control over resources.

- PaaS is a service that doesn’t require users to provision and configure VMs; instead, users run their programs on a scalable platform that’s managed by a cloud vendor. Google Cloud’s App Engine is an early and well-known example of PaaS.

- SaaS is a cloud offering that delivers a specific software service. Salesforce, for example, provides customer relationship management and related software.

Cloud computing reduces the need to precisely forecast capacity for variable workloads (stable workloads can take advantage of committed use offerings such as Reserved Instances). Instead, resources are allocated on demand, according to the workload at a given point in time. In addition, the rise of containers, which virtualize an operating system so multiple workloads can run on the same server without interfering with each other, has improved server utilization.

Despite these advances, enterprises still face challenges in using these resources efficiently.

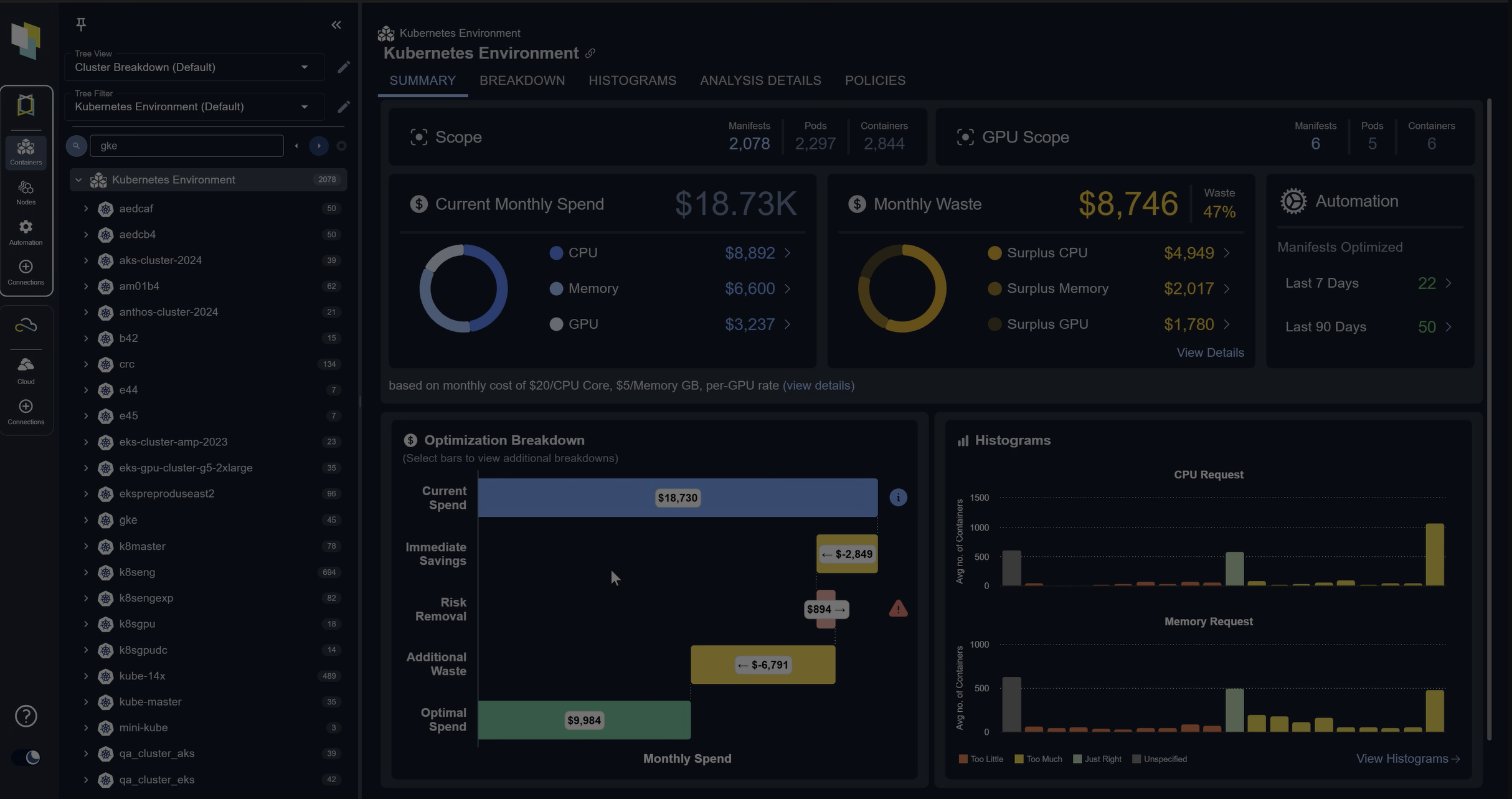

There are a number of challenges when it comes to meeting service-level agreements (SLAs) while also controlling cloud costs, including:

- Resources may be underprovisioned, which introduces application performance risks, including a failure to meet SLAs

- Resources may be overprovisioned, resulting in wasted resources and higher costs

- A lack of automation can create inefficiencies, because teams have little control over how cloud and container resources are selected

- It can be difficult to determine how best to purchase cloud services (for example, on-demand vs. reserved instances)
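
The last point, choosing between on-demand and committed pricing, often comes down to a break-even calculation. The sketch below assumes a simplified model in which on-demand capacity is billed only for the hours used and a commitment is billed for every hour. The function names and prices are hypothetical and do not reflect any provider's actual rates.

```python
def break_even_utilization(on_demand_hourly, committed_hourly):
    """Fraction of hours a resource must run before a commitment is cheaper."""
    if on_demand_hourly <= 0:
        raise ValueError("on_demand_hourly must be positive")
    return committed_hourly / on_demand_hourly


def cheaper_option(on_demand_hourly, committed_hourly, expected_utilization):
    """Compare the effective hourly cost of the two purchase models."""
    # On-demand is paid only while running; the commitment is paid every hour.
    on_demand_cost = on_demand_hourly * expected_utilization
    return "committed" if committed_hourly < on_demand_cost else "on-demand"


if __name__ == "__main__":
    # Hypothetical prices: 0.10 per hour on demand, 0.06 per hour committed.
    print(break_even_utilization(0.10, 0.06))
    print(cheaper_option(0.10, 0.06, expected_utilization=0.9))
    print(cheaper_option(0.10, 0.06, expected_utilization=0.3))
```

Under these assumptions, a workload that runs most of the time favors the commitment, while one that runs only occasionally is cheaper on demand.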

Challenge 1: Agility vs. Cost Management

Perhaps the most important way cloud computing has changed infrastructure management is by shifting IT spending from a mix of operational expenditures (OpEx or OPEX) and capital expenditures (CapEx or CAPEX) to a more singular focus on OpEx. Instead of procuring servers with a three-year lifespan, IT professionals are buying access to computing resources by the minute. This approach allows developers to allocate compute resources as they need them, or at least as they perceive a need.

Infrastructure and operations professionals serve two constituencies: developers who are using the resources those professionals manage, and IT finance departments that allocate budgets and set limits on the funds available for cloud resources. Developers, as a rule, are more concerned about having access to necessary resources than about the cost of those resources. Of course, developers are not ignorant or dismissive of cost considerations, but their primary job is to develop software and run it in production.

There are many benefits to allocating resources as needed. Developers can spin up test environments in minutes, which is a key enabler of agile development. They can routinely use blue/green deployments to roll out new services while mitigating the risk of disrupting production services. Blue/green deployment is a technique that reduces downtime and risk by running two identical production environments, called blue and green. Traffic is gradually routed to the environment running the new version of the code until none is routed to the old version.
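
The gradual shift of traffic from blue to green can be pictured as a schedule of routing weights. The following is a minimal sketch, assuming evenly sized steps; the function name is hypothetical, and in practice the weights would be applied through a load balancer or DNS service rather than computed in application code.

```python
def traffic_shift_schedule(steps):
    """Return (blue_percent, green_percent) pairs that end with all traffic on green."""
    if steps < 1:
        raise ValueError("steps must be at least 1")
    schedule = []
    for i in range(1, steps + 1):
        green = round(100 * i / steps)  # share of traffic sent to the new version
        schedule.append((100 - green, green))
    return schedule


if __name__ == "__main__":
    for blue, green in traffic_shift_schedule(4):
        print(f"blue={blue}% green={green}%")
```

With four steps, the schedule moves traffic in 25-point increments until the blue environment receives none.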

But allocating resources as needed can also lead to a pattern identified by Densify as uncontrolled micropurchasing: suboptimal resourcing decisions that are innocuous individually but in aggregate can add significant cost. A long-running VM that should have been shut down is easy to spot. Inefficient micropurchases, by contrast, are nearly invisible unless your tools are designed specifically to provide transparency into them.

Similarly, IT finance professionals are not oblivious to the needs of developers, but their job is to optimize how IT operations funds are spent. This leaves infrastructure and operations pros trying to balance performance and cost. This can create tensions and complexities within an organization, especially around inefficient cloud expenditures.

Challenge 2: Risks from Micropurchasing

Developers automate as much of the integration and deployment process as possible. Part of this entails creating scripts or templates for provisioning needed infrastructure. This is known as infrastructure as code (IaC), and it can significantly improve the quality of resource provisioning. By running code, instead of manually executing commands, engineers reduce the risk of misconfiguring servers and containers.

There is, however, a downside. Using IaC, developers can quickly provision resources. Without controls and governance, this can lead to a substantial amount of micropurchasing (as noted previously). If micropurchasing is done outside established procedures, it may be hard to track the provisioned resources. For example, a relatively junior engineer can deploy resources and incur costs that may be individually small, but in aggregate can become a problem for your budget. This is an example of “dark spend,” in which the enterprise is incurring costs but doesn’t have sufficient visibility into why those costs are being incurred.
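
One way to surface this kind of spend is to group billing line items by an ownership tag and total whatever has none. The sketch below assumes a hypothetical list of line-item dictionaries with `cost` and `tags` fields and a hypothetical `owner` tag; real billing exports differ by provider.

```python
from collections import defaultdict


def summarize_spend(line_items, required_tag="owner"):
    """Total cost per owner; items missing the tag are grouped as UNATTRIBUTED."""
    totals = defaultdict(float)
    for item in line_items:
        owner = item.get("tags", {}).get(required_tag) or "UNATTRIBUTED"
        totals[owner] += item["cost"]
    return dict(totals)


if __name__ == "__main__":
    # Hypothetical line items; the two without an owner tag are the "dark spend".
    items = [
        {"resource": "vm-1", "cost": 12.5, "tags": {"owner": "payments"}},
        {"resource": "vm-2", "cost": 0.75, "tags": {}},
        {"resource": "bucket-1", "cost": 0.5},
        {"resource": "vm-3", "cost": 1.25, "tags": {"owner": "payments"}},
    ]
    print(summarize_spend(items))
```

Each untagged item here is small, but the `UNATTRIBUTED` total makes the aggregate visible.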

Another route to inefficient cloud spending is provisioning the wrong kinds of resources, or at least less than optimal resources. For example, engineers may provision a set of large servers to run within a cluster. When workloads increase, autoscaling will add new servers to the cluster. Similarly, when loads drop, servers can be removed from the cluster.

When using a small number of large servers, scaling up and down is fairly coarse-grained. A large server may be added to a cluster when the workload only marginally exceeds the current capacity. In this case, adding a large server brings far more capacity than needed. Had the cluster consisted of a larger number of smaller servers, less expensive servers could be added or removed incrementally until resources matched demand.
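
A little arithmetic makes the granularity effect concrete. This sketch assumes capacity is measured in abstract units and that cost scales roughly with provisioned capacity; the server sizes and demand figure are hypothetical.

```python
import math


def provisioned_capacity(demand, server_capacity):
    """Return (server_count, total_capacity) needed to cover the demand."""
    if demand <= 0 or server_capacity <= 0:
        raise ValueError("demand and server_capacity must be positive")
    servers = math.ceil(demand / server_capacity)
    return servers, servers * server_capacity


def idle_fraction(demand, server_capacity):
    """Share of provisioned capacity that sits unused."""
    _, capacity = provisioned_capacity(demand, server_capacity)
    return (capacity - demand) / capacity


if __name__ == "__main__":
    demand = 65  # just over what one large server can handle
    for label, size in (("large", 64), ("small", 8)):
        servers, capacity = provisioned_capacity(demand, size)
        print(label, servers, capacity, round(idle_fraction(demand, size), 3))
```

With a demand of 65 units, two 64-unit servers leave almost half the cluster idle, while nine 8-unit servers leave about a tenth idle.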

Another form of misconfiguration is purchasing VMs not optimized for your workloads. Workloads vary in their characteristics: some are CPU-intensive and others are memory-intensive. Some benefit from I/O optimization while others don’t. Some workloads have periodic spikes in load and should run on burstable instances.

One of the advantages of Amazon Web Services is the variety of machine types you can choose from, including burstable, compute-optimized, memory-optimized, and I/O-optimized options. For example, Amazon Web Services offers high memory instances that have more memory per virtual CPU than other types of instances. These are well suited for in-memory databases and caches. Compute-optimized instances are designed for CPU-intensive workloads like video processing or machine learning.

A balanced instance type may be the best fit for workloads that have modest CPU and memory requirements. That same instance, though, may be a poor choice for a high-performance stream processing application that requires little memory but is CPU-intensive. Similarly, a distributed cache is likely to need more memory than CPU resources, so a balanced instance wouldn’t be an optimal choice. Your choice of machine type significantly impacts your costs and efficiency.
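
As a rough illustration of this matching, a workload's memory-to-CPU ratio can be used to suggest an instance family. The thresholds and function name below are assumptions chosen for the example, not rules published by any cloud provider, and a real selection would also weigh I/O, burst behavior, and price.

```python
def suggest_instance_family(vcpus_needed, memory_gib_needed,
                            low_ratio=3.0, high_ratio=6.0):
    """Suggest a family from GiB of memory per vCPU (thresholds are assumptions)."""
    if vcpus_needed <= 0 or memory_gib_needed <= 0:
        raise ValueError("requirements must be positive")
    ratio = memory_gib_needed / vcpus_needed
    if ratio < low_ratio:
        return "compute-optimized"  # little memory per CPU, e.g. stream processing
    if ratio > high_ratio:
        return "memory-optimized"   # much memory per CPU, e.g. a distributed cache
    return "balanced"


if __name__ == "__main__":
    print(suggest_instance_family(16, 16))  # CPU-heavy workload
    print(suggest_instance_family(4, 64))   # memory-heavy workload
    print(suggest_instance_family(4, 16))   # modest CPU and memory
```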

Another risk that directly impacts the efficiency of cloud resources is ad hoc purchasing by different business units. These micropurchases are easy to make, especially when using IaC. They’re also problematic if done outside established procedures: they may lack proper governance, and they may not be visible to those who oversee cloud operations at a high level.

In addition, these micropurchases may not follow best practices established by the enterprise, and if established procedures are bypassed, the deviations may go undetected. They may also fly under the radar of department practices. For example, teams may regularly review infrastructure configurations, but if a deployment isn’t in the catalog of deployments, it may not be reviewed. When this happens, the team is at risk of having the infrastructure drift from what was once an optimal, or at least good, configuration into a costly misconfiguration.
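
The catalog gap described above can be checked with a simple set comparison. This sketch assumes deployments are identified by name and that both lists are already available; the names are hypothetical.

```python
def uncataloged_deployments(deployed, catalog):
    """Return deployments that are running but absent from the review catalog."""
    return sorted(set(deployed) - set(catalog))


if __name__ == "__main__":
    deployed = ["web", "batch", "scratch-test"]
    catalog = ["web", "batch"]
    print(uncataloged_deployments(deployed, catalog))
```

Anything the function returns would never be reached by a catalog-driven review, which is where drift can go unnoticed.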

Challenge 3: The Importance of Resource Management

DevOps engineers and infrastructure and operations professionals need a cloud resource management solution that helps them optimize the way they use cloud resources and containers. In addition to tools that help identify the optimal set of compute resources, engineers need infrastructure automation tools to streamline provisioning and deployments. Automation not only speeds up this work; it also reduces the risk of mistakes when provisioning and deploying.

Densify helps enterprises automate resource management through machine learning. Get a demo of our capabilities to learn more.
