What is Cloud Scaling?

In cloud computing, scaling is the process of adding or removing compute, storage, and network services to meet a workload’s resource demands, so that availability and performance are maintained as utilization changes. Scaling generally refers to increasing or reducing the number of active servers (instances) serving your workload. Scaling up and scaling out refer to two dimensions along which resources, and therefore capacity, can be added.

What Factors Impact Cloud Resource Demands?

The demands of your cloud workloads for computational resources are usually determined by:

- The number of incoming requests (front-end traffic)

- The number of jobs in the server queue (back-end, load-based)

- The length of time jobs have waited in the server queue (back-end, time-based)
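The three signals above can be sketched as a simple check. This is a minimal illustration, not a production monitor: the `DemandSnapshot` type, the `breached_signals` function, and every threshold value are hypothetical names and numbers chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class DemandSnapshot:
    requests_per_second: float      # front-end traffic
    queued_jobs: int                # back-end, load-based
    oldest_job_wait_seconds: float  # back-end, time-based


def breached_signals(snapshot, max_rps=500.0, max_queued=100, max_wait_seconds=30.0):
    """Return the names of demand signals above their (assumed) limits."""
    breached = []
    if snapshot.requests_per_second > max_rps:
        breached.append("requests")
    if snapshot.queued_jobs > max_queued:
        breached.append("queue_length")
    if snapshot.oldest_job_wait_seconds > max_wait_seconds:
        breached.append("queue_wait")
    return breached


# Traffic and queue wait are high, but the queue itself is short.
print(breached_signals(DemandSnapshot(620.0, 40, 45.0)))
# ['requests', 'queue_wait']
```

Any non-empty result would be a reason to consider adding capacity; which signal matters most depends on the workload.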

What is the Difference between Scaling Up & Scaling Out?

Scaling up refers to making an infrastructure component more powerful—larger or faster—so it can handle more load, while scaling out means spreading a load out by adding additional components in parallel.

Scale Up (Vertical Scaling)

Scaling up is the process of resizing a server (or replacing it with another server) to give it more or fewer CPUs, or more or less memory or network capacity.

Benefits of Scaling Up

Vertical scaling minimizes operational overhead because there is only one server to manage. There is no need to distribute the workload and coordinate among multiple servers.

Vertical scaling is best used for applications that are difficult to distribute. For example, when a relational database is distributed, the system must accommodate transactions that can change data across multiple servers. Major relational databases can be configured to run on multiple servers, but it’s often easier to vertically scale.

Vertical Scaling Limitations

Vertical scaling poses challenges to certain workload types:

- There are upper boundaries for the amount of memory and CPU that can be allocated to a single instance, and there are connectivity ceilings for each underlying physical host

- Even if an instance has sufficient CPU and memory, some of those resources may sit idle at times, and you will continue to pay for those unused resources

Scale Out (Horizontal Scaling)

Instead of moving an application to a bigger server, scaling out splits the workload across multiple servers that work in parallel.

Benefits of Scaling Out

Applications that can sit within a single machine—like many websites—are well-suited to horizontal scaling because there is little need to coordinate tasks between servers. For example, a retail website might have peak periods, such as around the end-of-year holidays. During those times, additional servers can be easily committed to handle the additional traffic.

Many front-end applications and microservices can leverage horizontal scaling. Horizontally-scaled applications can adjust the number of servers in use according to the workload demand patterns.

Horizontal Scaling Limitations

The main limitation of horizontal scaling is that it often requires the application to be architected with scale out in mind in order to support the distribution of workloads across multiple servers.

What is Cloud Autoscaling?

Autoscaling (sometimes spelled auto scaling or auto-scaling) is the process of automatically increasing or decreasing the computational resources delivered to a cloud workload based on need. The primary benefit of autoscaling, when configured and managed properly, is that your workload gets exactly the cloud computational resources it requires (and no more or less) at any given time. You pay only for the server resources you need, when you need them.

The major cloud providers each offer this capability:

- In AWS, the feature is called Auto Scaling groups

- In Google Cloud, the equivalent feature is called managed instance groups

- Microsoft Azure provides Virtual Machine Scale Sets

Each of these services provides the same core capability to horizontally scale, so we’ll focus on AWS Auto Scaling groups for simplicity.

What is an AWS Auto Scaling Group (ASG)?

An Auto Scaling group is a set of servers that are configured the same and function as a single resource. This group is sometimes called a cluster. Workloads are distributed across the servers by a load balancer. The load balancer is an endpoint that allows clients to connect to the service without having to know anything about the configuration of the cluster. The client just needs to have the DNS name or IP address of the load balancer.
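To illustrate how a load balancer hides the cluster behind a single endpoint, here is a minimal round-robin sketch. Round robin is only one possible distribution strategy and is an assumption of this example; the `RoundRobinBalancer` class and the instance IDs are hypothetical, and a real load balancer also handles health checks and connection draining.

```python
from itertools import cycle


class RoundRobinBalancer:
    """Single entry point that hands requests to instances in turn."""

    def __init__(self, instances):
        if not instances:
            raise ValueError("at least one instance is required")
        self._instances = list(instances)
        self._rotation = cycle(self._instances)

    def route(self, request_id):
        # The client never chooses an instance; the balancer does.
        return next(self._rotation)


balancer = RoundRobinBalancer(["i-a", "i-b", "i-c"])
print([balancer.route(n) for n in range(5)])
# ['i-a', 'i-b', 'i-c', 'i-a', 'i-b']
```

The caller only needs a reference to `balancer`, which mirrors a client needing only the load balancer’s DNS name or IP address.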

Choosing Instance Types & Sizes for Auto Scaling Groups

What instance (node) type and size should be used in an Auto Scaling group? That depends on the workload patterns. Instances should have a combination of CPUs and memory that meets the needs of workloads without leaving resources unused.

When creating an Auto Scaling group, you have to specify a number of parameters, including the minimum and maximum number of instances to have in the cluster, and a criterion that triggers adding or removing an instance. The choice of parameter values will determine the cost of running the cluster.
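The interaction between the minimum, the maximum, and the trigger criterion can be sketched in a few lines. This is a simplified model, not the AWS API: the `ScalingPolicy` type, the `desired_capacity` function, and the CPU thresholds are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class ScalingPolicy:
    min_instances: int
    max_instances: int
    scale_out_cpu: float  # add an instance above this average CPU %
    scale_in_cpu: float   # remove an instance below this average CPU %


def desired_capacity(policy, current, avg_cpu):
    """Step the instance count by one, then clamp it to [min, max]."""
    target = current
    if avg_cpu > policy.scale_out_cpu:
        target = current + 1
    elif avg_cpu < policy.scale_in_cpu:
        target = current - 1
    return max(policy.min_instances, min(policy.max_instances, target))


policy = ScalingPolicy(min_instances=2, max_instances=6,
                       scale_out_cpu=90.0, scale_in_cpu=30.0)
print(desired_capacity(policy, current=2, avg_cpu=95.0))  # 3
print(desired_capacity(policy, current=6, avg_cpu=95.0))  # 6 (capped at max)
print(desired_capacity(policy, current=2, avg_cpu=10.0))  # 2 (held at min)
```

The minimum sets the floor on spend, while the maximum caps both cost and the capacity available during a spike.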

The minimum number of instances should be enough to meet the base application load, but not have too much unused capacity. A single instance configured to meet these low-end needs may seem like the optimal choice for the instance type, but that’s not necessarily the case. In addition to thinking about the minimum resources required, you should consider the optimal increment for adding instances.

For example, a t3.xlarge with four virtual CPUs and 16GB of memory may be a good fit for the minimum resources needed in a cluster. When the workload exceeds the thresholds set for adding an instance, such as CPU utilization exceeding 90% for more than three minutes, another instance of the same type is added to the cluster. In this case, another t3.xlarge would be added. If the marginal workload that triggered the addition of a server isn’t enough to utilize all the CPUs and memory, the customer will be paying for unutilized capacity. In this case, a t3.large with two virtual CPUs and 8GB may be a better option. The minimum number of instances can be set to two to meet the base load.
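The effect of the scaling increment can be checked with a small calculation. The sketch below uses the vCPU counts given above and ignores memory for simplicity; the `idle_vcpus` function and the demand figure of five vCPUs are hypothetical values chosen to illustrate the point.

```python
import math

# vCPU counts as stated in the text above.
INSTANCE_VCPUS = {"t3.large": 2, "t3.xlarge": 4}


def idle_vcpus(instance_type, demand_vcpus, min_instances):
    """vCPUs paid for but unused once the group has scaled to fit demand."""
    per_instance = INSTANCE_VCPUS[instance_type]
    instances = max(min_instances, math.ceil(demand_vcpus / per_instance))
    return instances * per_instance - demand_vcpus


# Demand grows slightly past the four-vCPU base load (assumed: 5 vCPUs).
print(idle_vcpus("t3.xlarge", demand_vcpus=5, min_instances=1))  # 3
print(idle_vcpus("t3.large", demand_vcpus=5, min_instances=2))   # 1
```

Both configurations cover the base load of four vCPUs with nothing idle, but the smaller increment leaves less unused capacity once the scale-out threshold is crossed.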

Scaling is Difficult

Manually monitoring network resource utilization is a continuous, time-consuming process, and forecasting the need to scale up or scale out is computationally complex.

Within most organizations, these challenges are addressed across a continuum of strategy sophistication:

- Manual Scaling – Your IT Operations organization analyzes utilization and cost metrics periodically and scales workload resources based on peak and average demands. When a utilization threshold is crossed, monitoring tools notify your team—or performance degradation is noticed—and they reactively scale the workload’s resources.

- Autoscaling – IT Operations leverages IaaS autoscaling capabilities to supplement manual instance selection and sizing with built-in infrastructure ability to automatically increase capacity on demand. Availability, cost, or a balance of both drive autoscaling parameters, but significant cost and performance benefits are usually left on the table as this strategy is still fundamentally reactive.

- First-Generation Resource Optimization – Your IT Operations organization leverages an analysis tool to parse utilization metrics from your infrastructure to generate policy-based optimization recommendations. Infrastructure scaling and autoscaling parameters are still orchestrated manually, but are occasionally proactively optimized based on the results of algorithmic analysis.

- Next-Generation Resource Optimization – Scaling and autoscaling parameters are linked to and driven by a cloud infrastructure optimization and management solution. It leverages machine intelligence to continuously and proactively select the optimal combination of resources for each of your workloads, based on utilization, policy, and the cost, performance, and technical capabilities of the entire public cloud service catalog. This continuous optimization (CO) aims to deliver an ongoing balance of cost and performance.

What are the Risks of Improper Cloud Scaling?

At enterprise scale—when scaling is applied across many workloads—the stakes can be quite high:

- Scaling capacity up or out beyond actual resource utilization results in overspend on unused infrastructure services when load is not at peak

- Scaling capacity up or out based on average utilization creates overspend when demand is low, and puts workload performance at risk when traffic spikes
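Both risks can be made concrete with a toy demand trace. The numbers below are invented for illustration, capacity is measured in arbitrary units per instance, and the function names `instances_for` and `provisioning_report` are hypothetical.

```python
import math


def instances_for(load, capacity_per_instance):
    """Smallest whole number of instances that covers the given load."""
    return max(1, math.ceil(load / capacity_per_instance))


def provisioning_report(hourly_demand, capacity_per_instance, instances):
    """Total idle capacity (overspend) and unmet demand (performance risk)."""
    capacity = instances * capacity_per_instance
    idle = sum(max(0, capacity - d) for d in hourly_demand)
    unmet = sum(max(0, d - capacity) for d in hourly_demand)
    return {"idle_units": idle, "unmet_units": unmet}


demand = [20, 20, 40, 80, 40, 20]  # assumed hourly demand with one spike
peak_sized = instances_for(max(demand), 20)                # 4 instances
avg_sized = instances_for(sum(demand) / len(demand), 20)   # 2 instances

print(provisioning_report(demand, 20, peak_sized))
# {'idle_units': 260, 'unmet_units': 0}
print(provisioning_report(demand, 20, avg_sized))
# {'idle_units': 60, 'unmet_units': 40}
```

Sizing for the peak never drops demand but pays for idle capacity most of the time; sizing for the average still idles during quiet hours and falls short during the spike.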

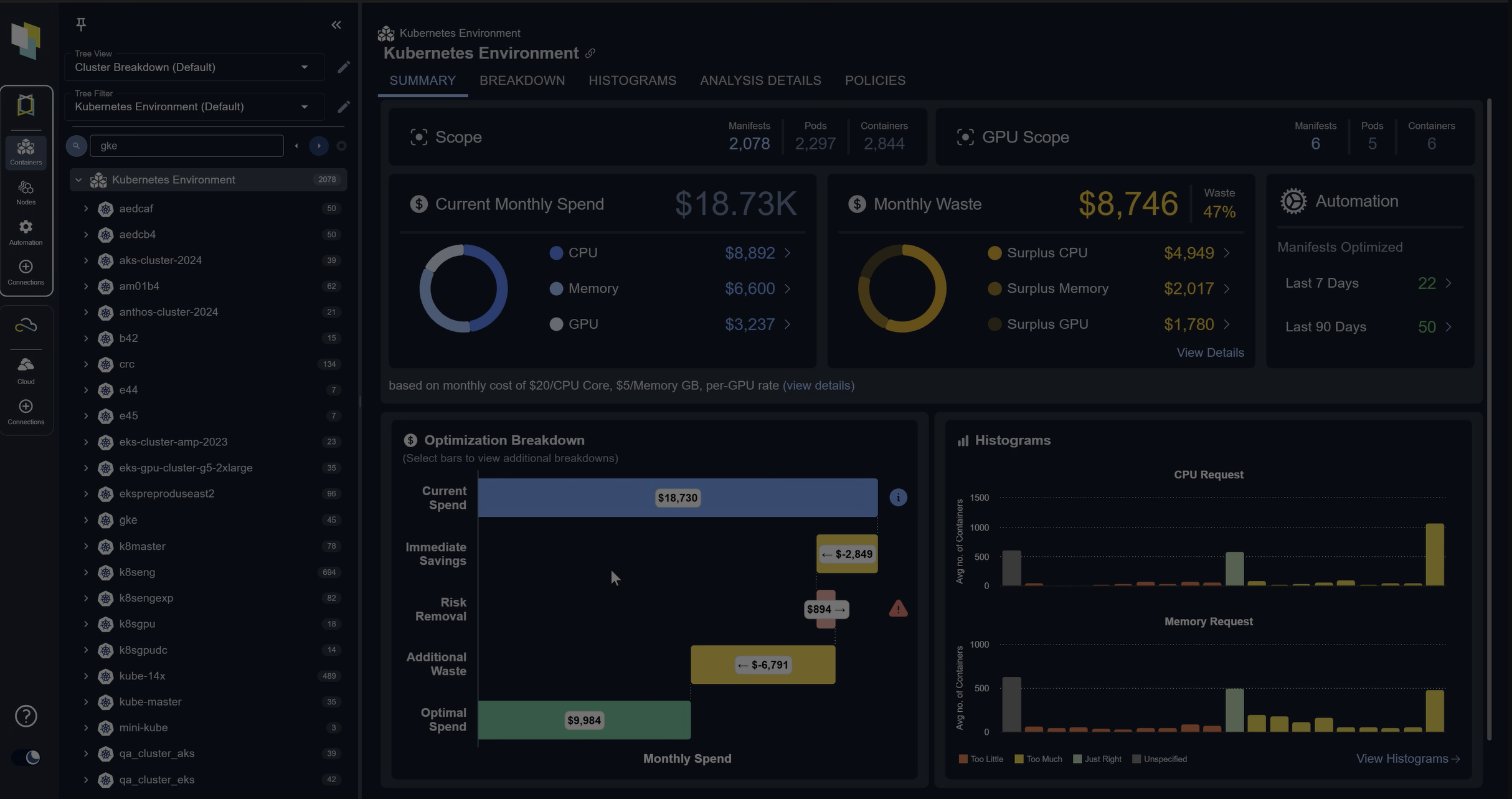

Managing Auto Scaling with Densify

Densify enables managers of large autoscaling infrastructures to optimize performance and spend. Our machine learning analyzes the loads across all your Auto Scaling group nodes and generates recommendations for node type and sizing to better match the entire autoscaling group to the evolving demands of the workload running within. Densify also recommends changes to the minimum and maximum settings for autoscaling to better reflect actual realized demand patterns of your workload.

Get a demo of Densify today and see how your team can leverage our recommendations to optimize and prudently manage your autoscaling cloud infrastructure.
