Containers are Efficient—Now, Make Them Even Better

Getting software to run in multiple environments used to be a major hassle. You’d have to write different versions to support each operating system. Even then, you could run into trouble when moving an app if the software environments, network topologies, security policies, or storage weren’t identical.

Containers eliminate these complexities. They pack up an entire runtime environment—the application, plus all the dependencies, libraries, binaries and configuration files—making your applications portable enough to run anywhere. Because containers are very lightweight, you can run lots of them on a single machine, making them highly scalable. And they’re easy to provision and de-provision, so they’re highly elastic.

When it comes to migrating applications, containers are giving older virtual machines a run for their money. Like VMs, containers let you run applications that are separated from the underlying hardware by a layer of abstraction. However, with VMs, that layer of abstraction includes not only the app and its dependencies, but also the entire OS. In contrast, containers running on a server all share the host OS. Because a container doesn’t need its own OS, it can be far smaller and more resource-efficient than a VM.

These benefits have driven containers to the heights of popularity. According to the Densify Annual Global Cloud Survey of IT Professionals for 2019, 70 percent of respondents have actively deployed containers or plan to do so in the near future, with over 50 percent planning to run containerized apps in 2019.

Yet despite the many benefits containers provide over earlier technologies, there are still opportunities to improve their resource utilization.

Increasing Container Efficiency

Organizations typically use specialized software, such as Kubernetes, to automate the deployment, scaling, and management of containerized applications. Developers often use a template or manifest tool like HashiCorp Terraform to tell their Kubernetes scheduler how much CPU and memory the container is expected to need for a particular workload. During this process, they request a minimum allocation of CPU and memory and set an upper limit on each.

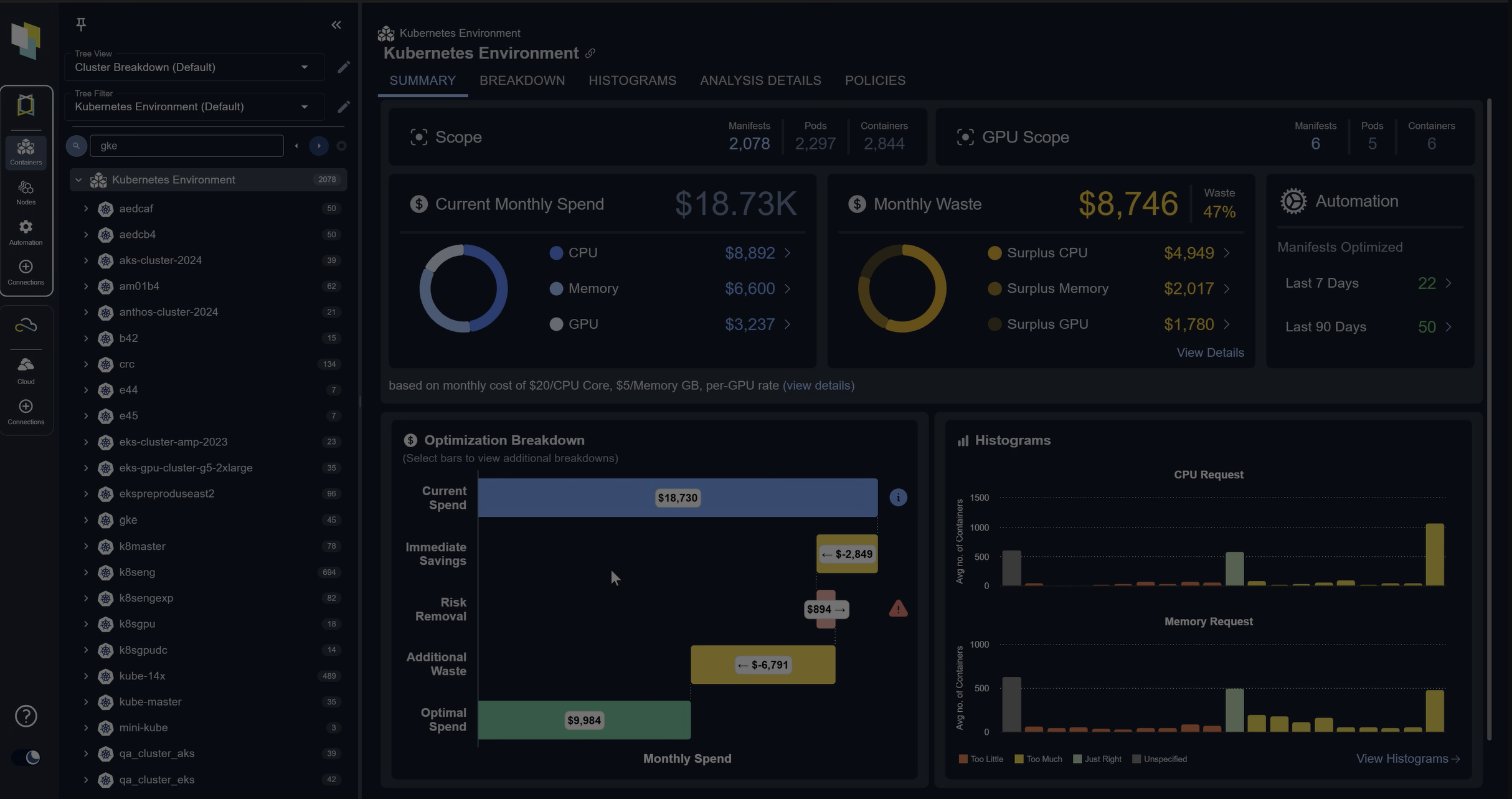
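As a rough illustration of the request and limit values described above, the following Python sketch builds the `resources` section of a Kubernetes container spec. The `requests` and `limits` fields and the `m` (millicore) and `Mi` (mebibyte) units are standard Kubernetes conventions; the helper name, container name, image, and numbers are hypothetical and chosen only for the example.

```python
def container_resources(cpu_request_m, cpu_limit_m, mem_request_mib, mem_limit_mib):
    """Build the `resources` section of a Kubernetes container spec.

    CPU values are in millicores, memory values in mebibytes.
    """
    # Kubernetes rejects a spec whose request is larger than its limit.
    if cpu_request_m > cpu_limit_m or mem_request_mib > mem_limit_mib:
        raise ValueError("a request must not exceed its limit")
    return {
        "requests": {"cpu": f"{cpu_request_m}m", "memory": f"{mem_request_mib}Mi"},
        "limits": {"cpu": f"{cpu_limit_m}m", "memory": f"{mem_limit_mib}Mi"},
    }


# Hypothetical container: the name, image, and sizes are illustrative guesses,
# which is exactly the kind of guess a developer has to make without usage data.
container = {
    "name": "web",
    "image": "example/web:1.0",
    "resources": container_resources(250, 500, 256, 512),
}
```

The request is what the scheduler reserves for the container, while the limit is the ceiling it may not exceed.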

The problem is that developers using these tools don’t have visibility into production workloads to determine the actual container utilization for each one. They have to guess at the levels they should enter into the resource manifest.

As a result, developers very commonly allocate far more CPU and memory to containers than the containers actually use, incurring considerable costs for the unused resources. Below is an actual example of this gap between expectation and reality: the container’s CPU utilization is about 20 to 30 times lower than the CPU allocated to it.

Conversely, if they don’t allocate enough to accommodate potential peak usage, they face operational risk, where too few resources are available to address workload demands.
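The two failure modes above, over-allocation and under-allocation, can be sketched as a simple check. This is a minimal illustration, not any vendor's method: the function names, the `waste_factor` threshold, and the sample numbers are all assumptions made for the example.

```python
def overallocation_ratio(request, average_usage):
    """How many times larger the request is than the average observed usage."""
    return request / average_usage


def sizing_status(request, limit, peak_usage, waste_factor=2.0):
    """Classify a container from its request, limit, and observed peak usage.

    All three values must use the same unit (for example, CPU millicores).
    `waste_factor` is a hypothetical threshold, not an industry standard.
    """
    if peak_usage >= limit:
        # The workload reached its ceiling: operational risk.
        return "under-provisioned"
    if request >= waste_factor * peak_usage:
        # Far more is reserved than the workload ever used: wasted spend.
        return "over-provisioned"
    return "ok"


# Hypothetical numbers: 2000m requested, 80m used on average.
ratio = overallocation_ratio(2000, 80)  # 25.0, within the 20 to 30 times range
```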

The Need for Machine Intelligence

Intelligent tools are now available that monitor real-world, granular utilization data and include a learning engine that analyzes utilization continuously to learn actual patterns of activity. Such tools then let you apply sophisticated policies to generate safe recommendations for sizing containers so that they are neither too large nor too small for the particular workload.
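To make the idea of a sizing policy concrete, here is a deliberately simple sketch that derives a request and a limit from observed usage samples. It swaps in a basic percentile-plus-headroom rule for the learning engine described above; the percentiles, the 20 percent headroom, and the function names are assumptions for illustration, not how any particular product works.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile of a non-empty list of numbers."""
    if not samples:
        raise ValueError("no utilization samples")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def recommend(samples, request_pct=50, limit_pct=99, headroom_pct=20):
    """Suggest a request near typical usage and a limit above peak usage."""
    request = percentile(samples, request_pct)
    peak = percentile(samples, limit_pct)
    limit = math.ceil(peak * (100 + headroom_pct) / 100)
    # A limit below the request would be an invalid specification.
    return {"request": request, "limit": max(limit, request)}


# Hypothetical CPU samples in millicores, including one spike.
cpu_samples = [100, 120, 110, 400, 130, 105, 115, 125, 135, 140]
sizing = recommend(cpu_samples)
```

A real policy would also weigh seasonality, workload criticality, and how long the history is, which is where continuous analysis matters.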

For example, some container workloads may be memory intensive, and running them on a general-purpose instance type may be less efficient than running them on a memory-optimized or burstable instance. The type and size of the underlying node resources (for example, EC2 instances running in Auto Scaling groups) must be adjusted to best accommodate the containers running on them. The min and max values for the Auto Scaling groups also need to be optimized based on workload patterns.
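One way to picture matching node types to container workloads is to compare the memory-to-CPU ratio of the containers with the ratio a node family offers. The sketch below is only a heuristic: the thresholds of 3 and 6 GiB per vCPU are assumptions, and the returned labels are generic categories rather than specific instance types.

```python
def suggest_node_family(containers):
    """Suggest a node category from aggregate container requests.

    `containers` is a list of (cpu_cores, memory_gib) tuples.
    """
    total_cpu = sum(cpu for cpu, _ in containers)
    total_mem = sum(mem for _, mem in containers)
    if total_cpu <= 0:
        raise ValueError("total CPU request must be positive")
    gib_per_vcpu = total_mem / total_cpu
    # Hypothetical cut-offs; tune them to the node families you can buy.
    if gib_per_vcpu >= 6:
        return "memory-optimized"
    if gib_per_vcpu <= 3:
        return "compute-optimized"
    return "general-purpose"


# Two memory-hungry containers: 12 GiB across 1.5 cores is 8 GiB per vCPU.
family = suggest_node_family([(1, 8), (0.5, 4)])
```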

Of course, a large organization might run thousands of containers. Not only does each container need accurate resource specification (sizing) information, but the right size must also be applied to each one. This is not achievable at scale with manual effort. The right solution should address this requirement through automation.

By inserting a line of code to replace hardcoded parameters in the upstream DevOps process, you can automatically point to the analytics engine, allowing you to continually readjust values to optimize containers on the fly.
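The substitution described above might look something like the following sketch, in which a lookup replaces values that were previously hardcoded. Everything here is hypothetical: `resolve_sizing`, the `DEFAULTS` values, and the shape of the `recommendations` mapping stand in for whatever interface a real analytics engine exposes.

```python
# Values that used to be hardcoded in the template; kept only as a fallback.
DEFAULTS = {"cpu_request_m": 500, "memory_request_mib": 512}


def resolve_sizing(container_name, recommendations, defaults=DEFAULTS):
    """Return recommended sizing for a container, falling back to defaults.

    `recommendations` maps container names to partial or full sizing dicts,
    as a stand-in for a response from an analytics engine.
    """
    recommended = recommendations.get(container_name, {})
    # Recommended values override defaults key by key.
    return {**defaults, **recommended}


# Hypothetical engine output: only the CPU request has a recommendation so far.
engine_output = {"web": {"cpu_request_m": 120}}
web_sizing = resolve_sizing("web", engine_output)
```

Falling back to defaults keeps a deployment working for containers the engine has not yet analyzed.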

The Benefits of Optimizing Container Resources

By using a solution that monitors real-time utilization and automates the selection of optimal memory and CPU parameters on the fly, your organization can:

- Guarantee the right resources to improve app performance

- Increase utilization and resource efficiency to ensure you never spend more than you need to

- Incorporate and integrate continuous automation into your DevOps processes

- Size containers without worry, so you can focus on app development

Containers are already resource efficient, but that doesn’t mean you can’t further improve efficiency. With the right intelligent tools, you can specify memory and CPU values based on actual resource utilization, ensuring that you have all the resources you need for your particular application without any unnecessary costs for overprovisioning.

See how your Kubernetes strategy can benefit from automated resource optimization:

Request a Demo of Densify Containers Resource Management