Cloud Computing Cost Savings: Best Practices


You’ve managed to establish a new FinOps practice at your company, implemented the right tools, and generated reports that identify savings opportunities—congratulations! That’s a huge win all by itself. But, as you might have already noticed, realizing those cloud computing cost savings opportunities can be its own challenge. How do you motivate your application and infrastructure teams to act on your FinOps recommendations? In this article, we’ll look at how to effectively deliver on your resource optimization projects in a way that minimizes stakeholder resistance and encourages individual accountability.

There are many factors that can cause your colleagues to delay or resist completing resource optimization project milestones. Those factors can range from needing a certain level of technical skill for execution to perceiving the recommendations as too risky. (Remember, it’s often their job to prevent an application outage—which is partly achieved by ensuring access to ample resources.)

It may seem unfair, but it’s up to you as a FinOps practitioner to prove that the changes enacted by your resource optimization plan are the best course of action. It is also up to you to motivate your application owners, arrange collaboration with your engineering team, and implement a repeatable process.

Our industry is saturated with talk of containerized workloads and serverless architectures that dynamically allocate cloud resources. However, your architects and engineers need months (possibly years) to re-architect your existing applications before you can properly leverage this technology. Even once in place, such automation introduces a new level of complexity. And so you must face the reality that, for the foreseeable future, savings must come from your application environments as currently architected.

In this article, we recommend an approach for establishing a repeatable process within your organization, and we offer suggestions for focusing your efforts to safely slash your spending.

We have organized our recommendations in four areas:

- Plan your organizational engagements

- Start with the highest savings with the least complexity

- Measure your resources holistically

- Report on efficiency, not spending

1. Plan your organizational engagements

Don’t start without a C-level mandate

Many FinOps practitioners have learned the hard way that a project should not be initiated without an explicit directive from C-level management. This means that if your CEO, CFO, CIO, or CTO has not concluded that it is time to rein in cloud spending and centralize its oversight, then it would be best to wait until they reach that conclusion before starting. There’s no universal threshold for cloud resource spending that triggers such a top-down directive, as it depends on your company’s size and financial scrutiny; it could happen when resources cost $10,000 per month or $10,000,000.

Engage application owners

Once an official project has been announced by your senior management, the next step is to engage your application owners. For the purpose of this article, an application owner is the person financially responsible for the profit, loss, and uptime of a specific application (e.g., online retail banking). Don’t wait until you are able to report your cloud spending by cost center before engaging with application owners, since their help and involvement are required to reach that milestone in the first place.

Involve an infrastructure engineering representative

In a larger organization, the infrastructure engineering staff is separated organizationally from the application business management team, and it’s your responsibility to bring them together to achieve your savings goals. It is common for application owners to have a varying breadth and depth in understanding of key technical concepts, so tailor your engagements based on their technical acumen. In some cases, an application owner may manage their own cloud account; in other cases, they may not even know who is in charge of operating their infrastructure.

Align organizational and individual objectives

Once you have secured a senior executive mandate, and engaged the key stakeholders, you should take the extra step of aligning individual and organizational objectives. Your task is easier if your company has already adopted a standardized management framework such as Management By Objectives (MBO) or Objectives and Key Results (OKR). If so, you should leverage it to assign a measurable MBO or OKR to the individuals tasked to oversee and carry out the recommended resource optimization measures. We advise you to express the measurements in terms of efficiency such as average utilization or cost per unit, as opposed to an absolute dollar value of spending.

Schedule monthly recurring meetings

No matter how you choose to measure the project’s objectives, you should begin by scheduling a monthly recurring meeting with an application owner and a representative from infrastructure engineering. Begin the meetings with a review of last month’s cloud spending. Your first agenda may simply be reviewing your previous month’s aggregated bill (even if you don’t yet have a spending breakdown by application or cost center). We have dedicated a whole section of this FinOps guide to organizing your cloud resources for advanced reporting, which may take weeks or months to properly implement.

Widely distribute your monthly savings reports

Your company’s managers must be reminded of the potential savings to become motivated to help. It is easier to mobilize active engagement when the savings are tangible (down to dollars and cents) and associated with a specific application. As a FinOps practitioner, you should resist the temptation to keep your communications private or to wait for perfect data before sharing your reports publicly. Label your early reports as preliminary drafts. This way you don’t delay engaging the stakeholders, they don’t overreact, and you gain more time to collaboratively improve the quality of the reports.

2. Start with the highest cloud computing cost savings with the least complexity

Pick the low hanging fruit

The term “low-hanging fruit” tends to imply, in some way, a sense of obviousness — but how do you actually find these easy wins? You should start by grouping your bill by AWS services (e.g., EC2 compute, S3 storage, RDS databases) and selecting the service that represents the largest percentage of your monthly spending. This approach helps focus your efforts on optimizations that are likely to yield the highest ROI for your time.
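The grouping step described above can be sketched in a few lines of Python. This is a minimal illustration, assuming you have already exported your bill as (service, cost) pairs; the function name `rank_services_by_spend` and the sample figures are hypothetical and do not come from any AWS tool.

```python
from collections import defaultdict


def rank_services_by_spend(line_items):
    """Sum cost per service and return (service, total, share) tuples, largest first."""
    totals = defaultdict(float)
    for service, cost in line_items:
        totals[service] += cost
    grand_total = sum(totals.values())
    if grand_total == 0:
        return []
    ranked = sorted(totals.items(), key=lambda item: item[1], reverse=True)
    return [(service, total, total / grand_total) for service, total in ranked]


# Hypothetical monthly line items as (service, cost in USD); not real billing data.
bill = [
    ("EC2", 42_000.0),
    ("EC2 Other", 9_500.0),
    ("RDS", 18_000.0),
    ("S3", 6_500.0),
    ("EC2", 4_000.0),
]

ranked = rank_services_by_spend(bill)
for service, total, share in ranked:
    print(f"{service:<10} ${total:>10,.2f}  {share:6.1%}")
```

The first row of the output is the service to examine first; in this made-up bill it is EC2.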

We’ve found that the vast majority of application environments still rely on virtual machines, or EC2 instances in AWS terminology. It’s therefore likely that you’ll want to start with the “EC2” and “EC2 Other” (which represents the ancillary EC2 costs) categories, as together they probably represent your largest area of spending.

The following diagram illustrates a few common resource optimization techniques, organized by level of complexity, and the potential for savings.

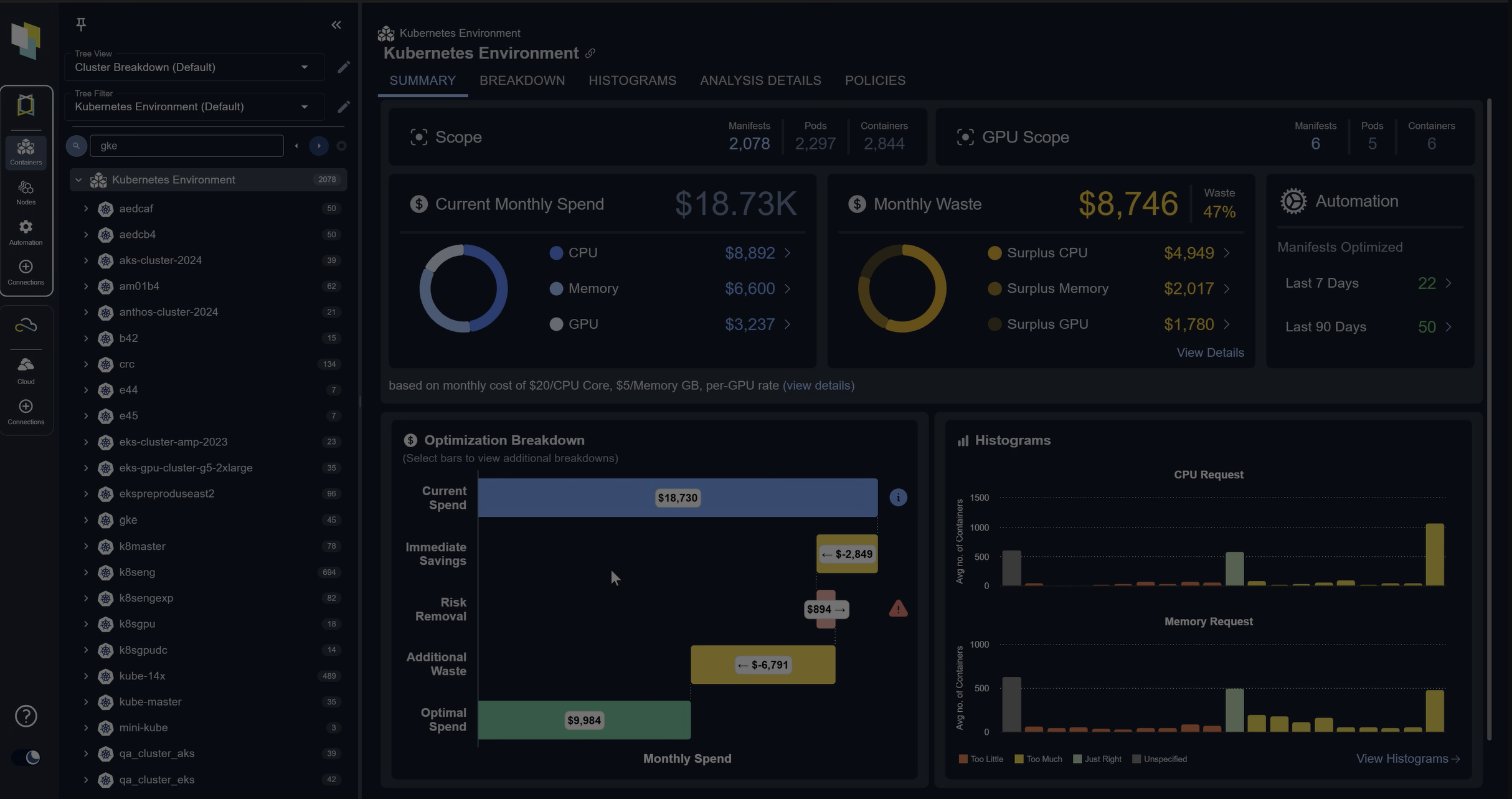

Modernize EC2 instances

You may have noticed that the naming convention for EC2 instances appends a digit after the first letter, and that this digit represents the instance’s generation. For example, a C5.xlarge is a fifth-generation EC2 instance of type C. Each generation represents a server hardware platform designed with specific chipsets and performance attributes to host virtual machines known as EC2 instances. Newer generations typically offer better price-performance than the older generations they replace, which gives users an incentive to switch. We refer to this process as modernization, and it’s the simplest method of capturing cloud computing cost savings.
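To make the naming convention concrete, here is a small sketch that splits an instance type name into its parts and flags instances from an older generation. The regular expression covers common names such as `c5.xlarge` or `c6g.xlarge` and is an assumption on our part, not an official AWS grammar; unusual names raise a `ValueError`. The helper names and the sample fleet are hypothetical.

```python
import re

# Series letters, generation digits, optional attribute letters, a dot, then the size.
_INSTANCE_TYPE = re.compile(r"^([a-z]+)(\d+)([a-z-]*)\.([a-z0-9]+)$")


def parse_instance_type(name):
    """Split a name like 'C5.xlarge' into series, generation, attributes, and size."""
    match = _INSTANCE_TYPE.match(name.strip().lower())
    if match is None:
        raise ValueError(f"unrecognized instance type: {name!r}")
    series, generation, attributes, size = match.groups()
    return {
        "series": series,
        "generation": int(generation),
        "attributes": attributes,
        "size": size,
    }


def older_than(instance_types, series, current_generation):
    """Return the names in `series` whose generation is below `current_generation`."""
    result = []
    for name in instance_types:
        parsed = parse_instance_type(name)
        if parsed["series"] == series and parsed["generation"] < current_generation:
            result.append(name)
    return result


# Hypothetical fleet; treating generation 5 as "current" is only for illustration.
fleet = ["c4.large", "C5.xlarge", "c5.2xlarge", "m5.large", "c6g.xlarge"]
candidates = older_than(fleet, "c", 5)
print(candidates)
```

A list like `candidates` is a reasonable starting point for a modernization review, although a generation change should still be tested against the workload before it is rolled out.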

Switch to the right family

Instance types are organized into families. For example, “compute optimized” represents a family of EC2s comprising the C4 and C5 instance types that are designed to best serve CPU-intensive workloads. In contrast, “storage optimized”, as the name suggests, are meant for workloads that require lots of fast storage and include the I3, D2 and D3 instance types. Instances belonging to different families may have the same number of CPUs and the same amount of memory but vary in price. One of the simpler cost savings techniques is to make sure that the right workload is matched with the right instance family to avoid an unnecessary premium payment for unused performance enhancements.

Schedule stopping of EC2 instances when unused

Your organization provisions EC2 instances (VMs) and RDS instances (virtual databases), not only to support your application workload in a production environment, but also for the purpose of development and testing. A great deal of savings can be achieved by stopping those instances when they are not used, like during the night when your team is sleeping. AWS offers a solution for scheduling the starting and stopping of instances. Its implementation would require a few days of time investment by your development team, but it is well worth it. It’s not uncommon to achieve close to 50% savings on your EC2s used for testing and development.
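The savings figure follows from simple arithmetic on running hours. The sketch below, with a hypothetical function name, computes the fraction of compute hours avoided by a schedule. It covers instance-hour charges only; attached storage continues to be billed while an instance is stopped.

```python
HOURS_PER_WEEK = 7 * 24


def scheduled_savings_fraction(hours_per_day, days_per_week):
    """Fraction of instance-hours avoided when running only on the given schedule."""
    if not (0 <= hours_per_day <= 24 and 0 <= days_per_week <= 7):
        raise ValueError("schedule must fit within a 24-hour day and a 7-day week")
    running_hours = hours_per_day * days_per_week
    return 1 - running_hours / HOURS_PER_WEEK


# Running 12 hours every day avoids half of the instance-hours.
every_day = scheduled_savings_fraction(12, 7)

# Running 12 hours on weekdays only avoids roughly 64% of them.
weekdays_only = scheduled_savings_fraction(12, 5)

print(f"12h x 7 days: {every_day:.0%}")
print(f"12h x 5 days: {weekdays_only:.0%}")
```

Stopping instances overnight alone yields about half; also stopping them on weekends pushes the figure higher.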

Stop idle EC2 instances

Start by compiling a list of virtual machines and database instances with less than 1% CPU utilization (as measured by the 95th percentile) over the past week. Then, distribute the list to application owners and engineering managers, and require them to justify, by a specific date, keeping those resources running at such low levels of usage.
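As a rough sketch of that filter, the following code flags instances whose 95th-percentile CPU utilization is below 1%. It assumes you have already collected CPU samples per instance (for example, from your monitoring export); the nearest-rank percentile method, the function names, and the sample data are our own illustrative choices.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile: the smallest sample with at least pct% of samples at or below it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def find_idle(cpu_by_instance, threshold_pct=1.0, pct=95):
    """Return instance IDs whose pct-th percentile CPU is below threshold_pct."""
    return sorted(
        instance_id
        for instance_id, samples in cpu_by_instance.items()
        if percentile(samples, pct) < threshold_pct
    )


# Hypothetical CPU utilization samples (percent) over the past week.
cpu_by_instance = {
    "i-aaa": [0.2] * 19 + [0.9],          # flat and low: idle
    "i-bbb": [0.3] * 18 + [35.0, 40.0],   # mostly quiet, but bursts 10% of the time
    "i-ccc": [0.5] * 20,                  # flat and low: idle
}

idle = find_idle(cpu_by_instance)
print(idle)
```

Using a high percentile rather than the average keeps an instance like `i-bbb` off the list, since its periodic bursts suggest that it is doing real work.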

Delete idle Elastic Block Store (EBS) volumes

An Elastic Block Store (EBS) volume is block storage attached to an EC2 instance; it is the equivalent of a “networked hard drive” dedicated to a virtual machine. A volume can outlive its instance and keep accruing charges after the instance is terminated. Since engineers don’t typically assign distinguishable “names” or “tags” to EBS volumes, you should rely on the volume’s “CreatedBy” AWS tag to identify the user who originally launched it and hold them responsible for deleting it. The long-term solution is to use one of the automation techniques that exist for deleting EBS volumes (without manual intervention) once the EC2 instance is terminated.
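The lookup by creator can be sketched as a pure function over volume records. The record shape below (`VolumeId`, `State`, `Tags`) mirrors what the EC2 DescribeVolumes API returns, where a state of `available` means the volume is not attached to any instance. The tag key `CreatedBy` is taken from the text above and is an assumption; substitute whatever key your account actually uses. The sample records are hypothetical.

```python
def unattached_volumes_by_creator(volumes, creator_tag="CreatedBy"):
    """Group unattached volume IDs by the value of their creator tag."""
    by_creator = {}
    for volume in volumes:
        if volume.get("State") != "available":
            continue  # attached ("in-use") or transitional volumes are skipped
        tags = {tag["Key"]: tag["Value"] for tag in volume.get("Tags", [])}
        creator = tags.get(creator_tag, "unknown")
        by_creator.setdefault(creator, []).append(volume["VolumeId"])
    return by_creator


# Hypothetical records shaped like DescribeVolumes output.
volumes = [
    {"VolumeId": "vol-001", "State": "in-use",
     "Tags": [{"Key": "CreatedBy", "Value": "alice"}]},
    {"VolumeId": "vol-002", "State": "available",
     "Tags": [{"Key": "CreatedBy", "Value": "alice"}]},
    {"VolumeId": "vol-003", "State": "available", "Tags": []},
    {"VolumeId": "vol-004", "State": "available",
     "Tags": [{"Key": "CreatedBy", "Value": "bob"}]},
]

report = unattached_volumes_by_creator(volumes)
print(report)
```

Volumes that land under `unknown` have no creator tag and need a different route to an owner, such as the account or project they belong to.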

Identify idle Elastic Load Balancers (ELB)

Elastic Load Balancers or ELBs distribute incoming traffic to a cluster of virtual machines or containers for the purpose of redundancy and “horizontal” scaling. Even though the savings don’t usually add up to a significant sum, you should still identify orphaned ELBs that don’t have any instances attached to them.

Address overprovisioned EC2, EKS or RDS

In our previous chapter, we explained the complexities of accurately measuring excess cloud capacity, which represents the largest area of savings potential. You should consider using not only the tools provided by AWS (which we cover in a separate chapter), but also third-party vendors who specialize in cloud and container resource management. A best-of-breed class of specialized vendors provides this functionality across multiple public and private clouds, leverages a long history of data to detect seasonal patterns, applies advanced algorithms and policies to replace human guesswork, and extends its analysis to recommend not only the right sizes but also the right types of resource. We have dedicated a chapter in this guide to advanced techniques used by leading vendors.

Modernize your application architecture

Over time, you will deploy and re-architect more applications to leverage auto-scaling, a containerized microservices architecture, or serverless computing technologies. The idea behind this new paradigm in application design is to break down large monolithic applications into smaller pieces of code which communicate with each other over Application Programming Interfaces (API). These smaller applications are then maintained by separate engineering teams within your company and scaled in real-time based on workload requirements.

In this model, you delegate the management of virtual machines to your cloud service provider to gain velocity in deploying, updating, and scaling your application code. Once the majority of your application is containerized or serverless, your focus in cost savings will shift to optimizing these new types of computing resources, which have their own challenges and complexities.

3. Measure Your Resources Holistically

Measure beyond CPU

We have dedicated a chapter in this guide to explaining the challenges of measuring all aspects of cloud resources such as CPU, memory, network bandwidth, and storage throughput in input/output operations per second (IOPS), as well as the inherent limitations of an EC2 instance in supporting the aggregated IOPS required by all of its storage volumes. You should review that chapter in detail to avoid a scenario where your recommendations for cloud computing cost savings end up creating an application performance bottleneck or an outage.

Measure a long history of data

A week’s worth of cloud resource usage analysis may miss the spikes that occur at the end of a month or quarter, or on Black Friday, for example. We refer to these as “seasonal patterns,” and they must be taken into consideration to avoid performance problems and loss of revenue during critical business cycles.
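The effect of the lookback window is easy to demonstrate. In this minimal sketch, the daily peak values are made up: a flat baseline with a single month-end spike 20 days before the end of the series. The function name is hypothetical.

```python
def peak_in_window(daily_peak_cpu, window_days):
    """Highest daily peak observed over the most recent `window_days` days."""
    if window_days <= 0:
        raise ValueError("window_days must be positive")
    return max(daily_peak_cpu[-window_days:])


# Hypothetical 90 days of daily peak CPU (percent), oldest first.
daily_peak_cpu = [35.0] * 90
daily_peak_cpu[-20] = 88.0  # a month-end spike that happened 20 days ago

one_week = peak_in_window(daily_peak_cpu, 7)
one_quarter = peak_in_window(daily_peak_cpu, 90)

print(f"7-day peak:  {one_week:.0f}%")
print(f"90-day peak: {one_quarter:.0f}%")
```

Sizing against the 7-day peak would leave no room for the spike that the 90-day window reveals.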

Keep your technology current

It would not be an exaggeration to say that AWS releases new types of EC2 instances on a weekly basis, many of which are based on proprietary chipsets designed by AWS to improve performance. Instances with a given release of hardware and software are referred to as “generations,” and are also refreshed regularly.

As a common practice, AWS prices newer generations to offer better price-performance than older ones, which gives customers an incentive to adopt the newer models. Advanced vendors who specialize in cloud resource management recommend configurations by type, size, and generation, and update those recommendations on a daily basis.

Consider your past purchases

You must avoid the common trap of optimizing your resources without regard for your previous purchases. For example, your company may still have 9 months left on a commitment for 100 C5.xlarge instances in an Availability Zone (AZ), and those reservations may be going underutilized. In such a scenario, it would be financially advantageous for your company to use the existing unused reserved instances (RIs) rather than move to a less expensive instance type and size. The sophisticated third-party providers of resource optimization tooling support configurable policies to factor in your inventory of reservations and Savings Plans purchases.
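A short worked comparison shows why. The sketch below assumes the reservation is a fixed commitment that cannot be absorbed by other workloads, modified, or sold, so its cost is owed either way. The hourly rates are hypothetical placeholders, not AWS prices, and the function names are our own.

```python
HOURS_PER_MONTH = 730  # common approximation: 8,760 hours per year / 12


def monthly_cost_keep(count, reserved_hourly):
    """Keep the workload on the already-reserved instances."""
    return count * reserved_hourly * HOURS_PER_MONTH


def monthly_cost_switch(count, reserved_hourly, new_on_demand_hourly):
    """Move to a cheaper type while the unused reservation is still being paid for."""
    committed = count * reserved_hourly * HOURS_PER_MONTH  # owed regardless of use
    replacement = count * new_on_demand_hourly * HOURS_PER_MONTH
    return committed + replacement


# Hypothetical rates in USD per instance-hour.
keep = monthly_cost_keep(100, reserved_hourly=0.10)
switch = monthly_cost_switch(100, reserved_hourly=0.10, new_on_demand_hourly=0.085)

print(f"Keep reserved instances: ${keep:,.0f} per month")
print(f"Switch to cheaper type:  ${switch:,.0f} per month")
```

Even though the replacement type is cheaper per hour, switching costs more in total until the commitment expires.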

Adopt an automation tool

Resource optimization is too complex to be implemented as a manual exercise maintained in a spreadsheet. There is a near-infinite number of configuration options available, and application workload needs constantly change. Finding the ideal combination for your resources is a feat for advanced algorithms. We recommend customizing your recommendation engine through constraint policies to guide it to your desired outcomes. For example, your input may be in the form of a knob that you turn to require a desired level of reservation coverage, or a request for a targeted amount of capacity headroom.

4. Report on Your Progress

Record your realized savings

Not all of your identified savings should be implemented. This is because many resources with low utilization are underutilized intentionally, and should not be changed. However, when a savings opportunity is realized, it should be recorded. For example, you would create a list of all EC2s that were terminated based on your recommendation.

In a large enterprise setting, creating such a change log is not as simple as it sounds. In the course of any given day, hundreds or thousands of changes are routinely made to your cloud environments. Only a few of those changes may be directly related to your specific recommendations. Because of this, it is important to operationalize a record of changes made because of your weekly recommendations. Third-party vendors have created collaborative solutions that help in this area.

Report on what your spending would have been without optimization

A common misconception is that cloud cost optimization initiatives must always result in a reduction of monthly cloud spending. Remember, your business aims to grow — so while you are busy removing unused cloud capacity, the growth rate of your business may outpace your savings initiatives. In other words, your cost optimization efforts may only slow the growth of your spending in the long term instead of reversing it. As you record and track the realization of your cost savings efforts, you should report on what the spending would have been without your FinOps initiative.
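One simple way to produce that figure is to add the savings in effect each month back onto the actual bill. The sketch below assumes you track, per month, the run-rate savings from all recorded changes that were in effect; the function name and all numbers are hypothetical.

```python
def spend_without_optimization(actual_spend, savings_in_effect):
    """Estimate what each month would have cost had no recorded savings been realized."""
    if len(actual_spend) != len(savings_in_effect):
        raise ValueError("both series must cover the same months")
    return [actual + saved for actual, saved in zip(actual_spend, savings_in_effect)]


# Hypothetical monthly figures in USD.
actual_spend = [100_000, 104_000, 109_000]
savings_in_effect = [0, 6_000, 11_000]  # monthly run-rate of realized savings

baseline = spend_without_optimization(actual_spend, savings_in_effect)

actual_growth = actual_spend[-1] / actual_spend[0] - 1
baseline_growth = baseline[-1] / baseline[0] - 1

print(f"Actual spend grew {actual_growth:.0%}")
print(f"Without optimization it would have grown {baseline_growth:.0%}")
```

In this example the bill still rose, but by 9% rather than 20%, which is the story the report should tell.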

Report on efficiency versus spending

The concept of efficiency is powerful once properly defined and widely adopted by an organization. Your EC2s will have a varying amount of average CPU utilization over the course of a month. As you eliminate unused capacity, your average utilization will increase, which in turn increases your efficiency. This measure best reflects the positive impact of your effort and should increase over time, regardless of your monthly spending amount.

Report on a per-unit cost

Suppose that you have an environment with 1,000 EC2 instances and that the instances have a varying number of CPU cores. Let’s assume that your thousand instances have 2,500 cores in total (an average of 2.5 cores per EC2 instance). Now let’s also suppose that the discount applied to your purchases has increased because you purchased AWS Savings Plans during the same period. Even though some of your newer EC2 instances may cost more because they are a larger size, your cost per core would still decrease due to the savings derived from the purchased Savings Plans.

The graphs below illustrate how your per-core efficiency has increased while your per-core cost has decreased even though your overall spending has gone up. This method of reporting is data-driven and helps you truly measure your progress accurately.
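The per-unit calculation can be sketched as follows. The starting point of 2,500 cores comes from the example above; the spending amounts, the later core count, and the utilization samples are hypothetical, as are the function names.

```python
def cost_per_core(total_spend, total_cores):
    """Monthly spend divided by the number of CPU cores it paid for."""
    if total_cores <= 0:
        raise ValueError("total_cores must be positive")
    return total_spend / total_cores


def core_weighted_utilization(instances):
    """Average CPU utilization weighted by core count; `instances` is a list of (cores, avg_cpu_percent)."""
    total_cores = sum(cores for cores, _ in instances)
    if total_cores <= 0:
        raise ValueError("no cores to average over")
    return sum(cores * cpu for cores, cpu in instances) / total_cores


# Hypothetical: spending rises while the fleet grows from 2,500 to 3,300 cores.
before = cost_per_core(150_000, 2_500)
after = cost_per_core(165_000, 3_300)

# Hypothetical per-instance data: (cores, average CPU percent).
utilization = core_weighted_utilization([(2, 20.0), (4, 50.0), (2, 30.0)])

print(f"Cost per core: ${before:.2f} -> ${after:.2f}")
print(f"Core-weighted utilization: {utilization:.1f}%")
```

Total spending rose by 10% in this example, yet the cost per core fell, which is the kind of progress that an absolute spending report would hide.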

Last Thoughts

You will face challenges both in accurately analyzing your application workloads and in motivating your colleagues to act on your recommendations. Success lies in getting the right tools and processes adopted, so that you can streamline your FinOps practice and earn the trust of your organization.

FAQs

What are the best practices for achieving cloud computing savings?

The best practices for cloud computing savings include securing executive support, engaging application owners, starting with high-impact/low-complexity optimizations, measuring resources holistically, and reporting efficiency gains regularly.

How do I find the “low-hanging fruit” for cloud computing savings?

Start by grouping your bill by major services like EC2, RDS, or S3. Focus first on the service with the largest share of spending. Common quick wins include modernizing EC2 instances, rightsizing, and scheduling shutdowns for idle test environments.

Why is stakeholder alignment important for cloud computing savings?

Without executive mandates and collaboration between application owners and infrastructure teams, optimization projects often stall. Aligning organizational and individual objectives ensures accountability and consistent progress.

What role does measurement play in cloud computing savings?

Accurate measurement goes beyond CPU. It includes memory, IOPS, bandwidth, and seasonal workload patterns. Holistic measurement prevents performance bottlenecks and ensures cost reductions don’t compromise reliability.

How should savings be reported in a cloud computing savings initiative?

Reporting should highlight realized savings, efficiency improvements, and per-unit cost reductions rather than just total spend. This approach shows the value of optimization even if overall cloud spending grows with business demand.
